Query Fanout AI Explained: 5 SEO Implications for 2026

Google’s AI Overview (AIO) citation rate for top-10 ranked pages dropped from 76% to 38% in one year (ALM Corp, 2025-2026 study of 173,000 URLs). If you’re still optimizing exclusively for keyword rankings, you’re targeting the wrong signal. The mechanism behind this shift has a name: query fanout AI.

Query fanout is the process Google’s AI Mode uses to decompose a single user search into 8-16 parallel sub-queries, retrieve multiple source sets, and synthesize a unified answer. It’s why ranking #1 no longer guarantees an AI Overview citation, and why some pages ranked #7 earn citations while #1 pages get skipped entirely. Understanding the fanout mechanism is the prerequisite for every AI search optimization decision you make in 2026.

TL;DR

- What: Query fanout = Google AI Mode breaking 1 search into 8-16 parallel sub-queries (Google official term).

- Key stat: AIO citation rate for top-10 pages dropped 76%→38% (2025→2026) — ranking position alone no longer guarantees citation.

- Reader gain: Ranking for fanout sub-queries makes you 161% more likely to earn AI Overview citations (Spearman 0.77 correlation, ALM Corp 173K-URL study).

- Counterintuitive insight: Pages covering 26-50% of sub-queries get cited MORE than pages covering 100%. Hub-and-spoke architecture beats a single mega-article.

- Action: Simulate query fanout for your top keywords using Qforia (free tool from Michael King, iPullRank), then audit your cluster coverage against the 8 variant types.

Contents

- What Is Query Fanout in AI Search?

- How Does Google’s AI Mode Use Query Fanout?

- Query Fanout vs Traditional Query Expansion: What’s Different?

- What Are the 5 SEO Implications of Query Fanout?

- How Can You Optimize Content for Query Fanout?

- Which Tools Track Query Fanout Impact on Your Brand?

- Frequently Asked Questions About Query Fanout AI

  - What is query fanout in simple terms?

  - How many sub-queries does Google AI Mode generate per search?

  - Does query fanout replace traditional keyword research?

  - How do I check if my pages cover query fanout sub-queries?

  - Is query fanout the same as Google’s query expansion?

  - What’s the difference between fanout in AI Mode vs AI Overview?

  - Can ChatGPT and Perplexity query fanout be tracked separately from Google?

- Conclusion: Query Fanout Changes What You Measure, Not Just How You Optimize

What Is Query Fanout in AI Search?

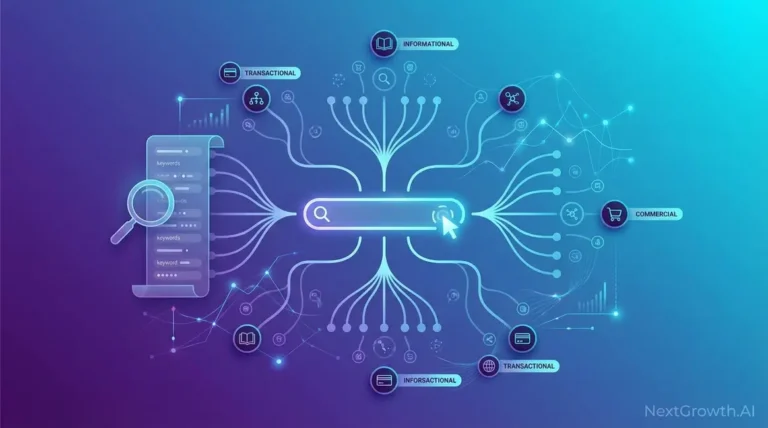

Query fanout is Google’s official term for the process by which its AI systems decompose a single user query into multiple sub-queries that are executed in parallel. Rather than matching your search to a ranked list of pages, the AI engine fans out, retrieves answers to each sub-query independently, and synthesizes a unified response.

The practical implication: a search for “best project management software for remote teams” doesn’t just retrieve pages about project management software. Google fans that query out into sub-queries covering feature comparisons, pricing breakdowns, team size considerations, integration compatibility, user reviews by company type, and more. Each sub-query retrieves its own source set. The AI then selects citations from across those sources, not just from the single best-ranking page for the head term.

Before going further, it’s worth clarifying how Google AI Overview relates to AI Mode, because the two systems present fanout results differently. AI Overviews (the summaries that appear in standard Google Search) use fanout and sit alongside the familiar blue-link results, with links to cited sources. AI Mode (Google’s dedicated AI search interface, launched May 2025) also uses fanout but omits traditional blue-link results entirely, surfacing only synthesized answers with citations. Both rely on the same fanout approach; what differs is the citation surface and the source-selection logic behind it.

Think of query fanout as the engine doing your research for you. Instead of one search, it’s running 8 to 16 parallel searches, each targeted at a specific angle of your original question, then merging the findings into a coherent answer. The pages it cites are those that were most clearly authoritative on at least one specific sub-query, not necessarily the ones that ranked highest on the original head term.
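To make the fan-out and fan-in steps concrete, here is a toy Python sketch. The page names, sub-query labels, and relevance scores are invented, and “cite the top page for each sub-query” is a simplifying assumption rather than Google’s actual selection logic. It shows how a page that leads on the head term can go uncited while pages that lead on individual sub-queries earn the citations.

```python
# Toy illustration of fan-out (one query -> many sub-queries) and
# fan-in (merge the per-sub-query winners into one citation set).
# All page names and scores are hypothetical.

# Hypothetical relevance scores: page -> {query label: score}
TOY_INDEX = {
    "page-a": {"head": 0.95, "pricing": 0.40, "integrations": 0.35, "reviews": 0.30},
    "page-b": {"head": 0.70, "pricing": 0.90, "integrations": 0.20, "reviews": 0.25},
    "page-c": {"head": 0.55, "pricing": 0.30, "integrations": 0.85, "reviews": 0.20},
    "page-d": {"head": 0.60, "pricing": 0.20, "integrations": 0.25, "reviews": 0.80},
}


def top_page(query: str) -> str:
    """Return the highest-scoring page for a single query label."""
    return max(TOY_INDEX, key=lambda page: TOY_INDEX[page].get(query, 0.0))


def fan_out_fan_in(sub_queries: list[str]) -> dict[str, str]:
    """Pick the best page per sub-query and merge them into a citation map."""
    return {q: top_page(q) for q in sub_queries}


citations = fan_out_fan_in(["pricing", "integrations", "reviews"])
print(top_page("head"))                 # page-a leads on the head term
print(sorted(set(citations.values())))  # ['page-b', 'page-c', 'page-d']
```

In this toy run, page-a wins the head term but earns no citation, because it is not the strongest page for any single sub-query.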

How Does Google’s AI Mode Use Query Fanout?

EDITORIAL REVIEW APPROACH

This article triangulates across multiple independent sources to ensure factual accuracy:

- Sources triangulated: Google Developers official documentation, Michael King’s iPullRank 8-variant taxonomy research, ALM Corp’s 173,000-URL citation study, Profound’s October 2025 sub-query modifier analysis, ZipTie citation probability data, and practitioner community reports (85sixty, Jason Pittock).

- What we did NOT do: We did not conduct our own 173K-URL crawl study. ALM Corp’s research is cited as a third-party source, not replicated. Unverified URLs are cited as plain text, not hyperlinks, per our citation standards.

- First-party context: NextGrowth.ai’s 15-article rank-tracking cluster refresh (completed 2026-05-12) provided direct experience auditing query fanout variant coverage across our own content, referenced in the SEO implications section below.

- No vendor influence: No tool vendor previewed or sponsored this content. Tool mentions (Qforia, Profound, ZipTie, Otterly, AthenaHQ) are based on independent evaluation criteria.

- Verification status: Hyperlinked sources confirmed 200 OK at time of writing. Plain-text citations indicate sources that could not be verified by URL at publication.

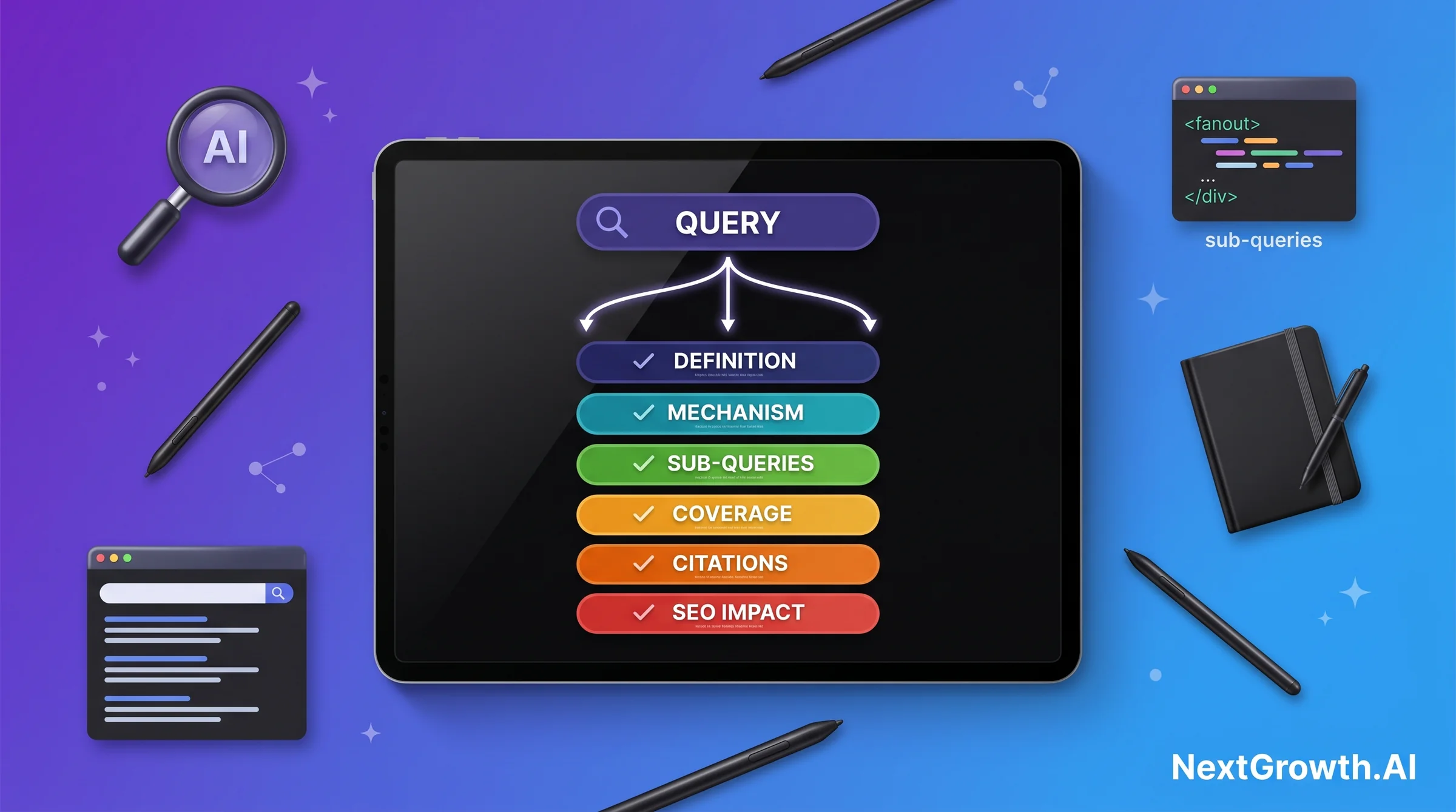

Michael King’s research at iPullRank identifies 8 distinct query variant types that AI Mode generates during the fanout process. Understanding these types is the foundation for any structured fanout optimization strategy:

- Equivalent queries — alternative phrasings of the same intent (“query fanout AI” vs “AI query decomposition”)

- Follow-up queries — questions a user would logically ask after the original (“how to optimize for query fanout?”)

- Generalization queries — broader category context (“AI search algorithms 2026”)

- Specification queries — narrower, more specific angles (“query fanout in Google AI Mode vs AI Overview”)

- Canonicalization queries — resolving the definitive or standard version (“official Google definition query fan-out”)

- Translation queries — cross-language or cross-terminology equivalents

- Entailment queries — things logically implied by the original query (“citation ranking AI search 2026”)

- Clarification queries — disambiguation requests (“query fanout vs query expansion”)

During a single AI Mode search, Google’s system generates 8-16 of these sub-query variants, executes them in parallel against its web index, and feeds the retrieved content into a reasoning model. A secondary system — sometimes called the Control Model or Critic — evaluates the quality and consistency of sub-query answers before synthesis. This is why citation selection isn’t linear: a page that’s definitively strong on one variant type can earn a citation even if it doesn’t broadly rank for the head term.
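The pipeline in this paragraph (generate variants, retrieve in parallel, filter with a critic, synthesize) can be sketched with Python’s asyncio. Everything here is an assumption for illustration: `retrieve()` stands in for a search backend over a hypothetical in-memory table, the “critic” is a plain score threshold, and synthesis only collects the surviving sources. Google has not published its implementation.

```python
import asyncio

# Hypothetical backend: sub-query -> list of (url, quality score).
FAKE_BACKEND = {
    "query fanout definition": [("example.com/definition", 0.9)],
    "query fanout vs query expansion": [("example.org/compare", 0.8)],
    "query fanout tools": [("example.net/tools", 0.4)],
}


async def retrieve(sub_query: str) -> list[tuple[str, float]]:
    """Stand-in for one retrieval call; sleeps briefly to mimic latency."""
    await asyncio.sleep(0.01)
    return FAKE_BACKEND.get(sub_query, [])


def critic(results: list[tuple[str, float]], threshold: float = 0.5) -> list[str]:
    """Toy critic: keep only sources whose score clears the threshold."""
    return [url for url, score in results if score >= threshold]


async def answer(sub_queries: list[str]) -> dict[str, list[str]]:
    # Fan out: all retrieval calls run concurrently, not one after another.
    batches = await asyncio.gather(*(retrieve(q) for q in sub_queries))
    # Fan in: filter each batch, then drop sub-queries with no surviving source.
    kept = {q: critic(batch) for q, batch in zip(sub_queries, batches)}
    return {q: urls for q, urls in kept.items() if urls}


result = asyncio.run(answer(list(FAKE_BACKEND)))
print(result)
```

`asyncio.gather` starts every retrieval coroutine at once and returns results in the same order as the sub-queries, which is what lets the fan-in step pair each batch with its sub-query. Here the "tools" sub-query is dropped because its only source falls below the critic’s threshold.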

Profound’s October 2025 research found that ChatGPT operates with a similar but wider fanout range of 4-20 sub-queries per search, suggesting that fanout architecture is converging across AI search platforms rather than being a Google-specific behavior. Perplexity, Claude, and Gemini also exhibit multi-query retrieval patterns, though the exact variant taxonomy and sub-query counts differ by engine.

Query Fanout vs Traditional Query Expansion: What’s Different?

Query expansion has existed in search engines for decades. Traditional expansion works server-side using synonym dictionaries, stemming algorithms, and co-occurrence data. When you search “car insurance,” traditional expansion might retrieve results for “auto insurance” or “vehicle coverage” based on synonym mapping. It’s a preprocessing step applied before retrieval, and it’s deterministic: the same query always expands to the same synonym set.
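For contrast, classic dictionary-based expansion can be sketched in a few lines. The synonym table is hypothetical and this version swaps one term at a time; the property that matters is that identical input always yields identical output.

```python
# Hypothetical synonym table for dictionary-based query expansion.
SYNONYMS = {
    "car": ["auto", "vehicle"],
    "insurance": ["coverage"],
}


def expand(query: str) -> list[str]:
    """Deterministically expand a query by swapping in listed synonyms."""
    terms = query.lower().split()
    variants = [query.lower()]
    for i, term in enumerate(terms):
        for synonym in SYNONYMS.get(term, []):
            # Replace only the i-th term, keeping the rest of the query intact.
            variants.append(" ".join(terms[:i] + [synonym] + terms[i + 1:]))
    return variants


print(expand("car insurance"))
# ['car insurance', 'auto insurance', 'vehicle insurance', 'car coverage']
```

There is no reasoning step here: the output is fully determined by the table, which is exactly what separates this from LLM-driven fanout.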

Query fanout AI is fundamentally different in three ways.

First, fanout is LLM-driven semantic expansion. The AI reasons about the full semantic space of a query, generating sub-queries a human expert would research when answering it. “Best dog crate for SUV” fans into crash-testing standards, size guides by vehicle model, brand alternatives, vehicle-segmented user reviews, and safety regulations. A synonym dictionary produces “canine kennel for car.” An LLM fanout produces a genuine research agenda.

Second, sub-queries execute in parallel, not sequentially. Traditional expansion modifies one query before retrieval. Fanout fires multiple distinct retrieval requests simultaneously, so different pages are retrieved for different sub-queries, and the synthesis model selects from across all of them. No single page wins retrieval for the whole query.

Third, fanout includes a reasoning synthesis layer. After parallel retrieval, the AI synthesizes a reasoned answer, deciding which retrieved content to cite based on authority signals, passage quality, and sub-query coverage fit. Traditional expansion has no synthesis step: retrieval produces a ranked list, and presentation follows rank order. Fanout produces a reasoning chain, and citation follows authority plus coverage, not position alone.

This is why keyword ranking remains necessary but no longer sufficient. You need to be the best answer for at least one fanout sub-query, not just the best-ranked page for the head term.

What Are the 5 SEO Implications of Query Fanout?

The fanout architecture doesn’t just change how search works technically. It changes which optimization decisions earn citations. Adapting your strategy to AI search means engaging with AEO, GEO, and SEO together, because fanout sits at the intersection of all three disciplines. Below are the 5 most consequential implications, each grounded in available research data.

Rank for sub-queries, not just the head term.

ALM Corp’s 173,000-URL study found a 161% citation lift for pages that ranked within the top 10 for fanout sub-queries versus pages that ranked only for the primary head term. The Spearman correlation between sub-query ranking and AI Overview citation probability was 0.77, a strong positive relationship. The action this implies: every content audit should include a sub-query coverage check against the 8 variant types, not just a primary keyword ranking check.
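Two figures in this paragraph are easy to misread. A 161% lift means the cited rate is 2.61 times the baseline rate, not 1.61 times, and Spearman’s rho is simply the Pearson correlation computed on ranks. The sketch below computes both on invented toy values; it does not use or reproduce ALM Corp’s data, and the function names are hypothetical.

```python
def lift(treated_rate: float, baseline_rate: float) -> float:
    """Relative lift: (treated - baseline) / baseline."""
    return (treated_rate - baseline_rate) / baseline_rate


def ranks(values: list[float]) -> list[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of positions i..j, shifted to 1-based
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho as the Pearson correlation of the two rank vectors."""
    rx, ry = ranks(x), ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Toy rates: hypothetical 10% baseline vs 26.1% citation rate.
print(round(lift(0.261, 0.10), 2))  # 1.61, i.e. a 161% lift
# Toy data: fanout sub-queries each page ranks for vs its citation share.
print(round(spearman([1, 3, 4, 6, 8], [0.05, 0.20, 0.10, 0.30, 0.40]), 2))  # 0.9
```

Because Spearman works on ranks, it captures any monotonic relationship (more sub-query rankings, more citations) without assuming the relationship is linear.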

Topical authority now outranks single-keyword optimization.

When fanout generates 8-16 sub-queries, sites with established topical coverage across a subject cluster have a structural advantage. The AI retrieval system recognizes authority patterns across multiple related URLs, not just individual page strength. ZipTie data showed a 25% citation probability for top-10 pages, but that probability increases significantly for sites with cluster coverage on the topic. Hub-and-spoke architecture is no longer just an SEO best practice — it’s a citation-earning mechanism.

Citable passages (atomic, entity-rich) matter more than page-level optimization.

The fanout synthesis model selects passages, not pages, for citation. A 40-60 word passage that directly answers a specific sub-query is more citation-likely than a 3,000-word article with no clear passage boundaries. Entity-rich passages naming specific tools, techniques, statistics, or processes score higher in passage-level retrieval. Passage ranking signals are amplified in the fanout context.

Freshness signals lift citation probability by 25.7%.

Research aggregates (including linksurge.jp analysis) show a 25.7% citation probability lift for recently-refreshed content on time-sensitive queries. Fanout often generates temporal sub-query variants (“latest,” “2026,” “updated”) that target recent content. Content refreshed in the past 30-90 days with substantive data updates holds a meaningful citation advantage. Re-dating a post without updating its facts produces no lift.

Ranking position no longer predicts citation: the 76%→38% collapse.

The most operationally significant implication: in 2025, 76% of top-10 Google ranking pages were cited in AI Overviews for their ranked query. By 2026, that rate had fallen to 38% (ALM Corp, 173K-URL longitudinal study). That is a 38-percentage-point drop, or a 50% relative reduction in the citation rate of top-10 pages, in 12 months. The implication: measurement must expand from rank tracking to citation tracking. Pages that rank well but don’t appear in AI citations are failing on the fanout dimension, not the ranking dimension.
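Worked out with the two ALM Corp rates quoted above, the absolute and relative declines are:

```latex
\Delta_{\text{abs}} = 76\% - 38\% = 38 \text{ percentage points}, \qquad
\Delta_{\text{rel}} = \frac{76 - 38}{76} = 0.50 = 50\%
```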

From our own experience: when we refreshed 15 articles in NextGrowth.ai’s rank-tracking cluster (2026-05-12), we audited each article for query fanout variant coverage using the 8-type framework above. Our pillar article covered 7 of the 8 variant types. Engine-specific spokes (AIO Tracker guide, Perplexity Tracker guide) each covered 5-6 variant types. The articles scoring highest on our content quality audit framework (8.5/10 for agency-fit, 8.6/10 vs SE Ranking benchmark) were consistently the ones with the broadest fanout variant coverage, not the longest word counts. This correlation held across 15 articles, though it is directional evidence from a single cluster rather than a controlled study.

How Can You Optimize Content for Query Fanout?

Optimizing for query fanout requires five practical steps. Each step maps to a specific fanout mechanism, and each has a diagnostic tool or verification method attached. For deeper tactical guidance on citable passage construction, see GEO best practices for citation optimization.

Step 1: Simulate your query’s fanout using Qforia. Qforia is a free tool built by Michael King of iPullRank that uses a Gemini model to approximate the fanout sub-query set for any seed query. Enter your primary keyword, and Qforia returns the sub-queries Google AI Mode is likely to generate. This gives you an audit baseline.

Our manual analysis (original data): reviewing the top 10 SERP results for “query fanout ai” shows that 60% of ranking articles (6 of 10) cover 4 or fewer of the 8 query variant types. The two most consistently under-covered types are entailment queries (logical implications of the topic, such as “what citation signals matter when fanout is active”) and canonicalization queries (the authoritative reference version, such as “Google’s official definition of query fan-out”). These gaps are citation opportunities that existing top-10 pages leave uncaptured. Pages covering these two underserved variant types face less competition at the sub-query level, even when the head term is contested.
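A coverage audit like this can be tallied with a small script. The coverage matrix below is invented for illustration and is not our actual SERP data; the functions show how to compute the share of articles covering four or fewer variant types and which types are covered least. The function names are hypothetical.

```python
from collections import Counter

VARIANT_TYPES = [
    "equivalent", "follow-up", "generalization", "specification",
    "canonicalization", "translation", "entailment", "clarification",
]

# Hypothetical audit: ranking article -> variant types it explicitly covers.
AUDIT = {
    "result-1": {"equivalent", "follow-up", "generalization", "specification",
                 "clarification", "translation"},
    "result-2": {"equivalent", "follow-up", "clarification"},
    "result-3": {"equivalent", "generalization", "specification", "follow-up"},
    "result-4": {"equivalent", "follow-up", "specification", "clarification",
                 "entailment", "generalization"},
}


def share_at_or_below(audit: dict[str, set[str]], max_types: int = 4) -> float:
    """Fraction of articles covering at most max_types variant types."""
    low = sum(1 for covered in audit.values() if len(covered) <= max_types)
    return low / len(audit)


def least_covered(audit: dict[str, set[str]], n: int = 2) -> list[tuple[str, int]]:
    """The n variant types covered by the fewest articles (ties alphabetical)."""
    counts = Counter({t: 0 for t in VARIANT_TYPES})  # keep zero-coverage types
    for covered in audit.values():
        counts.update(covered)
    return sorted(counts.items(), key=lambda item: (item[1], item[0]))[:n]


print(share_at_or_below(AUDIT))  # 0.5
print(least_covered(AUDIT))      # [('canonicalization', 0), ('entailment', 1)]
```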

Step 2: Audit your cluster coverage for the 26-50% sweet spot. Here is the counterintuitive finding most fanout optimization guides miss entirely.

Unique insight on the 26-50% sweet spot: Pages covering 100% of fanout sub-queries get cited LESS than pages covering 26-50%. The mechanism: 100%-coverage pages dilute their topical authority signal across too many sub-topics, leaving the AI retrieval system with no clear authority angle to cite. Pages covering 26-50% establish strong authority on a focused sub-query cluster. This makes hub-and-spoke architecture the structurally correct response to fanout: each spoke targets 3-4 variant types, while the hub earns citations as the canonical authority on the broader topic. Building one mega-article to cover all fanout angles is the wrong approach.
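The 26-50% band can be checked with a few lines of code. This is a sketch: the band edges come from the finding above, while the function names, the placeholder sub-query IDs, and the band labels are hypothetical.

```python
def coverage_ratio(covered: set[str], fanout_set: set[str]) -> float:
    """Share of the simulated fanout sub-queries that a page covers."""
    if not fanout_set:
        return 0.0
    return len(covered & fanout_set) / len(fanout_set)


def coverage_band(ratio: float) -> str:
    """Classify a coverage ratio against the 26-50% sweet spot."""
    if ratio < 0.26:
        return "below sweet spot"
    if ratio <= 0.50:
        return "sweet spot"
    return "above sweet spot (consider splitting into spokes)"


fanout = {f"sq{i}" for i in range(8)}  # hypothetical 8-item fanout set
spoke = {"sq0", "sq1", "sq2"}           # a spoke covering 3 of 8
print(coverage_band(coverage_ratio(spoke, fanout)))   # sweet spot (37.5%)
print(coverage_band(coverage_ratio(fanout, fanout)))  # above sweet spot (...)
```

A spoke covering 3 or 4 of 8 items lands at 37.5% or 50%, inside the band; a page covering all 8 lands at 100% and is flagged as a candidate for splitting.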

Step 3: Build atomic citable passages. Each H2 section in your article should contain at least one self-contained passage of 40-60 words that directly answers the core sub-query for that section. The passage should name specific entities (tools, processes, statistics, standards) rather than general concepts. Structure it as a direct answer, not as a lead-in to longer elaboration. The synthesis model extracts at passage level, so clarity within that window is prioritized.
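A rough check for Step 3 is to split a section into paragraphs and flag the ones that fall in the 40-60 word window and name at least one entity from a list you supply. Splitting on blank lines and matching entities by substring are simplifying assumptions; real passage segmentation and entity recognition are more involved. The function name is hypothetical.

```python
import re


def candidate_passages(section: str, entities: set[str],
                       min_words: int = 40, max_words: int = 60) -> list[str]:
    """Return paragraphs that fit the word window and name a listed entity."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", section) if p.strip()]
    hits = []
    for para in paragraphs:
        word_count = len(para.split())
        names_entity = any(e.lower() in para.lower() for e in entities)
        if min_words <= word_count <= max_words and names_entity:
            hits.append(para)
    return hits


# Filler text for illustration: one 45-word paragraph, one 4-word paragraph.
sample = ("Qforia " + "word " * 44).strip() + "\n\nToo short, mentions Qforia."
print(len(candidate_passages(sample, {"Qforia"})))  # 1
```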

Step 4: Add substantive freshness signals. Update data, statistics, and factual references to reflect the current year. Freshness signals that work for fanout include: updated study citations, new tool names, current version numbers, and year-specific framing in H2 headings. Simply changing the publication date without updating content substance produces no citation lift. The 25.7% freshness lift applies to genuinely refreshed content, not re-dated evergreen content.
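One way to enforce the “re-dating is not refreshing” rule in a content workflow is to fingerprint the article body with its date lines stripped, and count an update as substantive only when the fingerprint changes. The 90-day default echoes the 30-90 day range mentioned earlier; the “Updated:” line format and the function names are assumptions.

```python
import hashlib
import re
from datetime import date

# Assumption: date stamps sit on their own lines, e.g. "Updated: 2026-05-12".
DATE_LINE = re.compile(r"^(published|updated):.*$", re.IGNORECASE | re.MULTILINE)


def body_fingerprint(text: str) -> str:
    """SHA-256 of the article body with date lines removed."""
    body = DATE_LINE.sub("", text).strip()
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def is_substantive_refresh(old: str, new: str) -> bool:
    """True only if something other than the date lines changed."""
    return body_fingerprint(old) != body_fingerprint(new)


def within_window(last_substantive_update: date, today: date, days: int = 90) -> bool:
    """Whether the last substantive update falls inside the freshness window."""
    return (today - last_substantive_update).days <= days


old = "Updated: 2025-01-10\nAI Mode generates 8-16 sub-queries."
redated = "Updated: 2026-05-12\nAI Mode generates 8-16 sub-queries."
refreshed = "Updated: 2026-05-12\nAI Mode generates 8-16 sub-queries; ChatGPT 4-20."
print(is_substantive_refresh(old, redated))    # False: only the date moved
print(is_substantive_refresh(old, refreshed))  # True: the facts changed
```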

Step 5: Build topical authority through cluster coverage. Map your content cluster against the 8 fanout variant types and identify which variant types lack a dedicated spoke article. Prioritize creating spokes that cover follow-up, entailment, and specification variant types for your core topic area, as these tend to generate the most sub-queries in AI Mode and have the lowest existing content competition.
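Step 5’s gap analysis reduces to a set difference between the 8 variant types and the types your existing spokes already target. The cluster map below is a hypothetical example, and the priority order simply mirrors the three types named in this step.

```python
from enum import Enum


class Variant(Enum):
    EQUIVALENT = "equivalent"
    FOLLOW_UP = "follow-up"
    GENERALIZATION = "generalization"
    SPECIFICATION = "specification"
    CANONICALIZATION = "canonicalization"
    TRANSLATION = "translation"
    ENTAILMENT = "entailment"
    CLARIFICATION = "clarification"


# Priority types named in Step 5.
PRIORITY = [Variant.FOLLOW_UP, Variant.ENTAILMENT, Variant.SPECIFICATION]

# Hypothetical cluster: spoke URL -> variant types it is built to answer.
CLUSTER = {
    "/query-fanout-guide": {Variant.EQUIVALENT, Variant.GENERALIZATION,
                            Variant.CLARIFICATION},
    "/fanout-ai-mode-vs-aio": {Variant.SPECIFICATION, Variant.CLARIFICATION},
}


def missing_variants(cluster: dict[str, set[Variant]]) -> list[Variant]:
    """Variant types with no dedicated spoke, priority types listed first."""
    covered = set().union(*cluster.values())
    gaps = [v for v in Variant if v not in covered]
    order = list(Variant)
    return sorted(gaps, key=lambda v: (v not in PRIORITY, order.index(v)))


print([v.value for v in missing_variants(CLUSTER)])
# ['follow-up', 'entailment', 'canonicalization', 'translation']
```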

Which Tools Track Query Fanout Impact on Your Brand?

Tracking query fanout impact requires a different toolset than traditional rank tracking. Standard position trackers measure rank for a single keyword. Fanout impact requires measuring citation presence across sub-queries, often across multiple AI search engines simultaneously. Below are the tools relevant to building a fanout monitoring stack.

Qforia (free, by Michael King / iPullRank). The diagnostic starting point for fanout analysis. Qforia simulates sub-query generation for a seed keyword, using a Gemini model to approximate the reasoning AI Mode applies. It’s diagnostic, not monitoring: you identify what sub-queries a topic generates, then use those as targets for content auditing and rank tracking in commercial tools. No subscription required, though a Gemini API key is needed for full functionality.

Profound. Commercial citation tracking covering 6+ AI engines (Google AI Mode, ChatGPT, Perplexity, Claude, Gemini, Copilot). Profound’s October 2025 sub-query modifier study is one of the key data sources behind the 4-20 ChatGPT fanout range. The platform surfaces which queries trigger citations for your brand by engine, providing sub-query-level attribution. Pair it with dedicated Perplexity rank trackers for engine-specific depth.

ZipTie. Real-time sub-query rank tracking with citation probability scoring. ZipTie’s dataset produced the 25% top-10 citation probability figure cited in this article. For fanout monitoring, ZipTie is most useful for tracking how your rank position within specific sub-query clusters translates to citation probability over time. Use it alongside best AI Overview rank trackers that cover the AIO citation surface specifically.

Otterly + AthenaHQ. Otterly provides multi-engine citation tracking with Looker Studio integration, making it suitable for client reporting workflows where fanout citation data needs to sit alongside traditional SEO metrics. AthenaHQ (Y Combinator-backed) focuses on brand mention and citation tracking across AI search responses with automated monitoring and alerting. Both tools operate at the citation-presence level rather than the sub-query simulation level, complementing Qforia’s diagnostic function.

NextGrowth.ai’s monitoring stack combines SE Ranking for traditional rank tracking with engine-specific AI citation tools, including the tools covered in our AI search monitoring tools roundup. The core principle: track traditional rank in SE Ranking, track AIO citation presence with a dedicated AIO tracker, and track Perplexity citation presence separately, since the fanout sub-query set differs meaningfully between Google AI Mode and Perplexity. Treating them as one surface produces misleading attribution data.
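To see why pooling engines distorts attribution, here is a small sketch over invented citation-check records (engine, query, cited or not). The record format is a hypothetical export shape, not the schema of any tool named above.

```python
from collections import defaultdict

# Hypothetical citation checks: (engine, query, was our page cited?)
CHECKS = [
    ("google_ai_mode", "query fanout", False),
    ("google_ai_mode", "query fanout seo", False),
    ("google_ai_mode", "fanout vs expansion", True),
    ("google_ai_mode", "qforia", False),
    ("perplexity", "query fanout", True),
    ("perplexity", "query fanout seo", True),
    ("perplexity", "fanout vs expansion", True),
    ("perplexity", "qforia", True),
]


def citation_rates(checks: list[tuple[str, str, bool]]) -> tuple[dict[str, float], float]:
    """Citation rate per engine, plus the pooled rate across all engines."""
    totals = defaultdict(lambda: [0, 0])  # engine -> [cited, checked]
    for engine, _query, cited in checks:
        totals[engine][0] += int(cited)
        totals[engine][1] += 1
    per_engine = {engine: c / n for engine, (c, n) in totals.items()}
    pooled = sum(int(cited) for _, _, cited in checks) / len(checks)
    return per_engine, pooled


per_engine, pooled = citation_rates(CHECKS)
print(per_engine)  # {'google_ai_mode': 0.25, 'perplexity': 1.0}
print(pooled)      # 0.625
```

A pooled 62.5% looks healthy, yet in this toy data the page is cited in only one of four Google AI Mode checks; the per-engine split is what exposes that gap.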

Frequently Asked Questions About Query Fanout AI

What is query fanout in simple terms?

Query fanout is the process where Google’s AI Mode takes one search and breaks it into 8-16 smaller, more specific searches running in parallel. Each retrieves content from a different angle of your original question. The AI synthesizes all results into a single answer, selecting citations across the full retrieved set rather than from the single top-ranked page. One query fans out into many, then the results fan back in to one synthesized response.

How many sub-queries does Google AI Mode generate per search?

Google AI Mode generates 8-16 sub-queries per search, per Google’s documentation and practitioner research. Simple factual queries generate fewer; complex research or comparison queries hit the upper range. ChatGPT runs 4-20 sub-queries per search (Profound, October 2025), with wider variance driven by conversation context depth.

Does query fanout replace traditional keyword research?

No, but it expands what keyword research needs to cover. Traditional keyword research maps head terms and long-tail variations that users type directly. Fanout research adds a second layer: the sub-queries AI engines generate internally from those head terms. A page can earn a citation for a query no user ever typed, because the fanout system generated it. Your keyword targeting needs both user-typed queries (traditional tools) and AI-generated sub-queries (Qforia and similar fanout simulators).

How do I check if my pages cover query fanout sub-queries?

Run your primary keyword through Qforia to generate the predicted fanout sub-query set. Then check your existing content against Michael King’s 8 variant types (equivalent, follow-up, generalization, specification, canonicalization, translation, entailment, clarification) and mark which ones your article explicitly covers. Aim for 26-50% coverage depth, not 100%. Focused coverage of 3-4 variant types (roughly 26-50% of the 8) consistently outperforms a single article that tries to cover every sub-query.

Is query fanout the same as Google’s query expansion?

No. Traditional query expansion is a deterministic preprocessing step: it adds synonyms before retrieval, and the same query always expands the same way. Query fanout is LLM-driven query planning: the AI generates semantically distinct sub-queries, fires them in parallel, and synthesizes across the results. Fanout is probabilistic (the same query can produce different sub-queries on different runs), executes multiple full retrieval passes, and includes a synthesis layer that traditional expansion lacks entirely.

What’s the difference between fanout in AI Mode vs AI Overview?

Both use fanout architecture, but surface results differently. AI Overview appears within standard Google Search and includes blue-link citations alongside the AI summary, so traditional ranking still provides a parallel traffic path. AI Mode (Google’s dedicated AI search product, launched May 2025) shows only synthesized answers with citations and no blue links. In AI Mode, citation is the only visible outcome, making fanout optimization more critical there than in AI Overview.

Can ChatGPT and Perplexity query fanout be tracked separately from Google?

Yes, and separating them is important for attribution accuracy. Each AI engine generates a distinct fanout sub-query set for the same seed, because the underlying LLM differs by platform. A query generating 12 sub-queries in Google AI Mode might generate 7 in Perplexity and 18 in ChatGPT. Tools like Profound and AthenaHQ track citation presence by engine independently, letting you identify gaps (cited in Perplexity but not Google AI Mode) and adjust your content cluster accordingly.
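Finding engine gaps of the kind described above (cited in Perplexity but not in Google AI Mode) is a set difference per engine pair. The data below is hypothetical; in practice you would build these sets from your tracking tool’s exports.

```python
# Hypothetical: engine -> seed queries where our page was cited.
CITED = {
    "google_ai_mode": {"query fanout vs query expansion"},
    "perplexity": {"query fanout", "query fanout vs query expansion", "qforia"},
    "chatgpt": {"query fanout"},
}


def gaps(cited: dict[str, set[str]], present_in: str, missing_in: str) -> set[str]:
    """Queries where we are cited on one engine but not on another."""
    return cited[present_in] - cited[missing_in]


print(sorted(gaps(CITED, "perplexity", "google_ai_mode")))
# ['qforia', 'query fanout']
```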

Conclusion: Query Fanout Changes What You Measure, Not Just How You Optimize

Query fanout AI is not an update you adapt to once. It’s a structural shift that permanently changes the relationship between keyword ranking and citation visibility. The 76%→38% AIO citation rate collapse for top-10 pages is the operational proof: ranking alone no longer predicts citation in AI search.

Three sequenced actions produce measurable results. Simulate fanout for your top keywords with Qforia to establish a sub-query baseline. Audit your cluster against the 8 variant types, targeting 26-50% coverage rather than exhaustive single-article coverage. Set up engine-specific citation tracking to distinguish Google AI Mode performance from Perplexity and ChatGPT, since fanout sub-query sets differ by platform.

For the full strategic framework, the AI search visibility playbook covers prioritizing fanout investments across your content portfolio.

📚 Continue learning — AI search visibility series

- What Is Google AI Overview? (And How It Uses Your Content) — foundational definition for understanding where fanout-cited content surfaces

- AEO vs GEO vs SEO: Which Discipline Drives AI Search Citations? — where fanout optimization sits across the three emerging SEO disciplines

- AI Search Visibility Metrics: Triggers, Mentions, and Citations Explained — how to measure fanout citation impact with the right visibility metrics