5 Steps to Improve Brand Visibility in AI Search Engines

61% of brands ranking on page 1 of Google are completely absent from Perplexity responses for the same category queries (Keyword.com 60-brand B2B analysis, 2026). Meanwhile, AI Overview (Google’s AI-generated answer panel at the top of the search results page) now triggers on roughly 13% of all Google searches in the US (Semrush, Q2 2026), and when it appears, organic CTR on the underlying blue links drops 20-25% on average (Search Engine Land aggregate 2025-2026). The traffic you used to win from rankings alone is splitting between Google AI Overview, ChatGPT search, Perplexity, Gemini, and Claude.

Related: These engines do not run a single keyword search — they expand each prompt using Google’s query fanout technique, generating 8–16 hidden sub-queries that determine which sources get cited.

This guide is a strategic playbook, not a tool comparison. It walks through five concrete steps to improve brand visibility in AI search engines — from baseline audit to ongoing measurement — so a content lead, agency owner, or in-house SEO can move from “we have no idea where we stand in AI search” to “we have a measurable monthly process for showing up in answers.”

If you’ve searched this category as “how to improve brand visibility in AI search engines,” “what strategies improve brand visibility in AI search engines,” or simply “ai search visibility” — the framework below maps to all three intents. For tool selection after you have a strategy, this article links out to our companion guides on AI Overview rank trackers, Perplexity rank trackers, and the broader SEO rank tracking software roundup.

Contents

- Quick Decision Guide: Where Should You Start?

- What Is AI Search Visibility? Trigger, Mention, and Citation Explained

- How Do You Improve AI Search Visibility in 5 Steps?

- What Are the Ranking Factors for AI Search Visibility?

- What Do Top-Visibility Brands Do Differently?

- What Common Mistakes Tank AI Search Visibility?

- AI Search Visibility FAQ

  - What is AI search visibility in 2026?

  - Are there free AI search visibility checkers?

  - What strategies improve brand visibility in AI search engines fastest?

  - How do I improve brand visibility in AI search engines without a paid tool?

  - What are the top AEO tools for AI search visibility analytics?

  - How is AI search visibility different from traditional SEO?

  - How often should I audit AI search visibility?

- Conclusion: Your 30-Day AI Search Visibility Roadmap

Quick Decision Guide: Where Should You Start?

Five common scenarios cover most readers landing on an AI search visibility playbook. Match yours to find the right starting step — the rest of the guide builds the full 5-step framework underneath.

Pick your scenario

- “We’ve never measured AI search visibility before”: Start with Step 1 (Audit). Use a free checker to baseline your Trigger, Mention, and Citation rates in 30 minutes before spending on tools.

- “We rank well on Google but get zero ChatGPT/Perplexity citations”: Jump to Step 2 (Optimize). Your content is discoverable but not citation-ready — the gap is structural, not authority.

- “We have content but no one mentions our brand off-site”: Go to Step 3 (Authority + Mentions). AI engines weight off-site brand co-occurrence heavily — this is your bottleneck.

- “We’re an agency managing AI search visibility for multiple clients”: Read Step 5 (Monitor) first to set up your reporting cadence, then loop back to Steps 1-4 per client.

- “We want to sell AI search visibility services as a packaged offering”: All five steps form the deliverable framework. Steps 1, 4, and 5 are recurring; Steps 2 and 3 are project-based.

🟢 New to AI search visibility? Start here

You don’t need to execute all 5 steps perfectly on the first try. Step 1 (Audit) alone — which takes 30-60 minutes with free tools — gives you the baseline most brands lack. Steps 2-4 are 4-12-week project work; Step 5 is the recurring monthly loop. Read the framework end-to-end, then run Step 1 this week, then decide which step to invest in next based on what your audit reveals.

What Is AI Search Visibility? Trigger, Mention, and Citation Explained

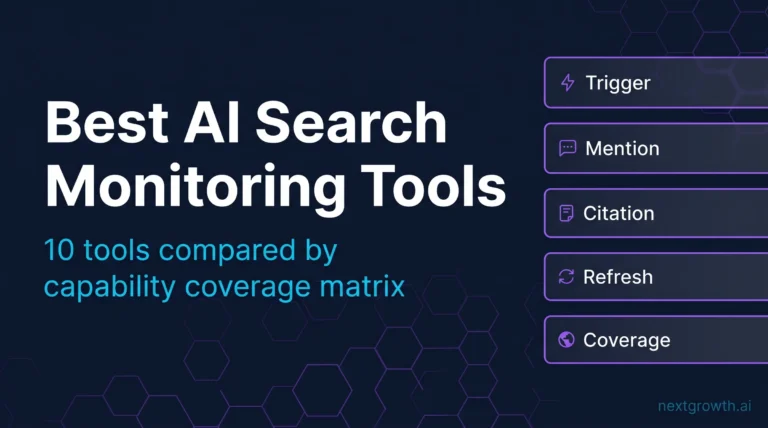

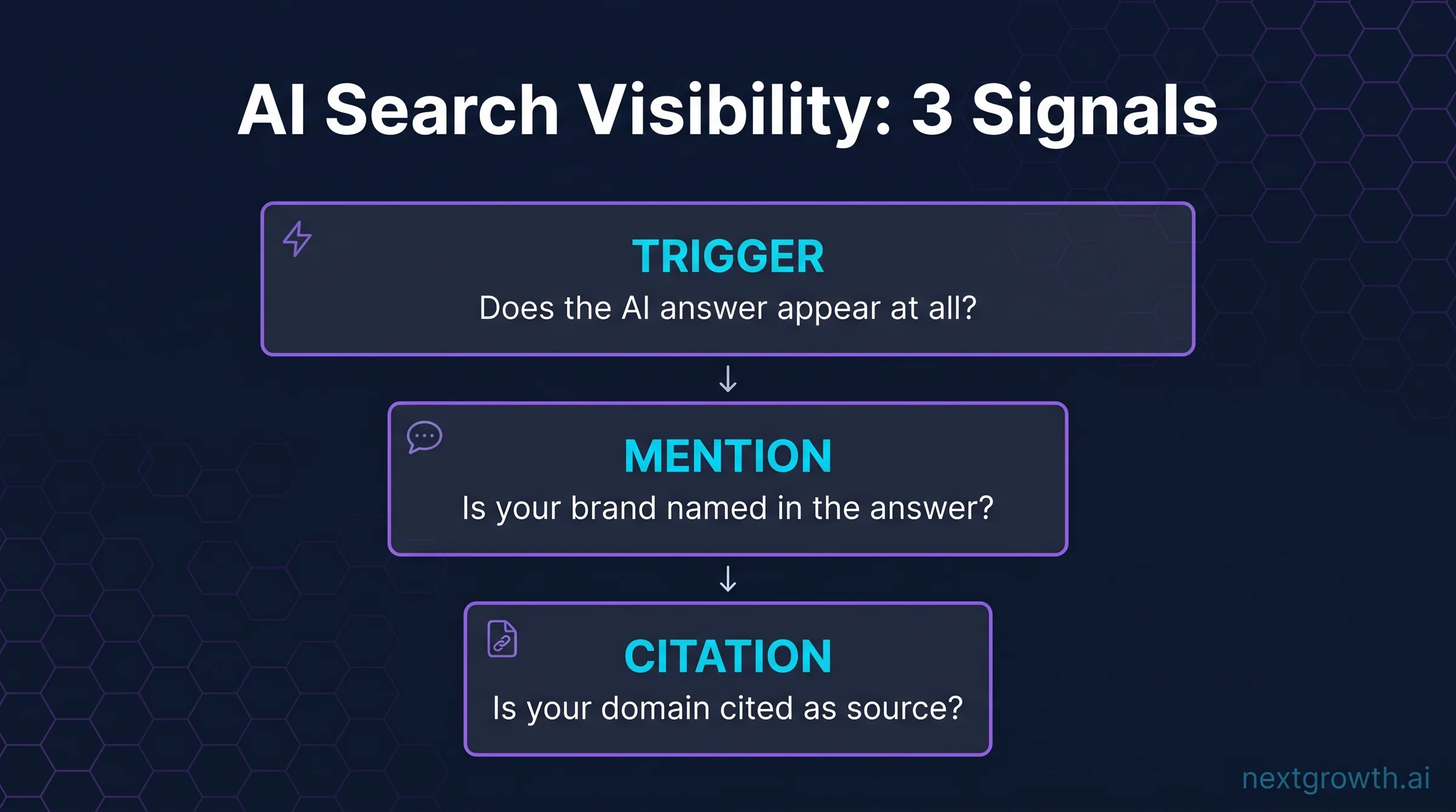

AI search visibility is your brand’s presence inside answers generated by AI-powered search engines — Google AI Overview, Google AI Mode, ChatGPT search, Perplexity, Gemini, Claude, and Copilot. It is not a single number. It is three distinct signals that need separate tracking and separate optimization.

The discipline emerged in 2024-2025 as AI Overview rolled out in US Google search and as ChatGPT search + Perplexity gained market share. By Q1 2026, enough brands were measuring AI search citations that a tool category formed around the three signals (Trigger, Mention, Citation) defined below. The academic framing is captured in the GEO — Generative Engine Optimization — research by Aggarwal et al. (referenced in the Ranking Factors section below). This guide is the practitioner playbook layered on top of that research.

| Signal | What it measures | Why it matters |
|---|---|---|
| Trigger | How often an AI answer appears for queries in your category at all. | If AI Overview triggers on 13% of your category queries today, the addressable AI-search audience is one-eighth of the SERP. Trigger rate sets your ceiling. |
| Mention | How often your brand name appears inside the answer text, with or without a link. | Brand mentions drive recall and consideration even without a click. In LLM-mediated search, a mention without a link is still attribution. |
| Citation | How often your domain appears as a source URL the AI cites in its answer panel. | Citations drive referral traffic and inbound authority. They are the closest analog to a top-3 organic ranking in traditional search. |

A brand can score high on Trigger and Mention but zero on Citation: its name gets read in the answer, but it earns no referral traffic. A brand can also be Citation-heavy in Perplexity (which surfaces source links prominently) yet invisible in ChatGPT (which mentions brands without always linking out). Each engine weights the three signals differently, which is why measurement and optimization run per-engine.

For a deeper dive into the three signals and how to map them to business outcomes, see the companion measurement guide on AI search visibility metrics: Trigger, Mention, and Citation explained. The rest of this article focuses on the strategic playbook to improve all three.

Editorial Approach

- Sources we drew on: Semrush AI Overview research Q2 2026, Keyword.com’s 60-brand Perplexity B2B analysis, Search Engine Land aggregate CTR data 2025-2026, Peec AI case study from our partnership, and direct usage notes from SE Ranking’s SE Visible module in our own monitoring stack.

- What this guide is: A strategic playbook with five executable steps. It links out to tool comparisons where tool selection is the next decision point, but the framework here is tool-agnostic — the same five steps work whether you use a free checker or a $500/month enterprise platform.

- What this guide is NOT: A tool comparison roundup. For tool-by-tool reviews, see our AI Overview rank tracker comparison, Perplexity rank tracker comparison, or the broader SEO rank tracking software roundup.

- Affiliate disclosure: Some links to SE Ranking, Otterly AI, Rankability, Keyword.com, and other tools may be affiliate links. We earn a commission at no extra cost to you. Strategic recommendations here reflect framework alignment, not commission rates.

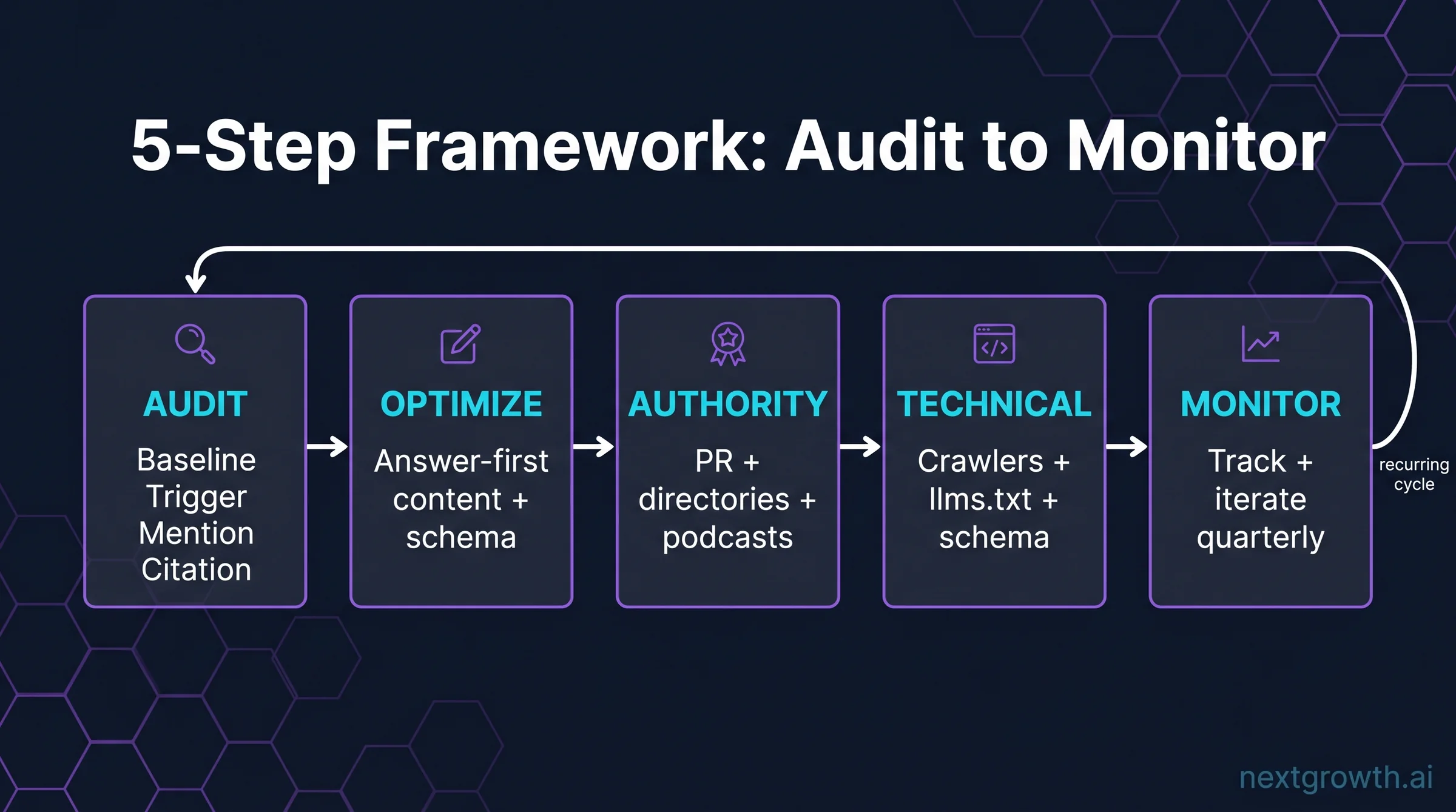

How Do You Improve AI Search Visibility in 5 Steps?

Each step below is a stand-alone deliverable. You can run Step 1 in 30 minutes with free tools, Steps 2-4 take 4-12 weeks of execution depending on starting state, and Step 5 is recurring. The framework is sequential the first time you run it and parallel on every subsequent cycle.

Step 1 — Audit Your Current AI Search Visibility Baseline

You cannot improve what you have not measured. The first step is a baseline audit: run a representative sample of category queries through each major AI search engine, record where your brand appears, and capture the three signals (Trigger, Mention, Citation) for each. This takes 30-60 minutes for a small audit, 2-4 hours for a broader one across 30+ queries.

Pick 20-30 representative queries. These should span the funnel: informational (“what is [your category]”), comparison (“[competitor] vs [you]”), buyer intent (“best [product] for [use case]”), and brand-specific (“[your brand] reviews”). The audit is only as useful as the query sample is realistic, so pull from Google Search Console top-impression queries first, then add high-intent terms you’d want to win.
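If it helps to see the funnel coverage laid out, here is a minimal Python sketch that expands the four query patterns above into a tagged list. The function name, the brand "Acme," and the competitor and use-case values are all placeholders invented for illustration; swap in terms from your own Search Console data.

```python
def build_query_sample(brand, category, product_name, competitors, use_cases):
    """Return (stage, query) pairs covering the four funnel stages."""
    queries = [("informational", f"what is {category}")]
    queries += [("comparison", f"{c} vs {brand}") for c in competitors]
    queries += [("buyer_intent", f"best {product_name} for {u}") for u in use_cases]
    queries.append(("brand", f"{brand} reviews"))
    return queries


# Placeholder inputs; none of these names come from a real audit.
sample = build_query_sample(
    brand="Acme",
    category="rank tracking software",
    product_name="rank tracker",
    competitors=["RivalOne", "RivalTwo"],
    use_cases=["agencies", "startups"],
)

for stage, query in sample:
    print(f"{stage:14} {query}")
```

Tagging each query with its stage makes it easier to see later whether your gaps cluster in one part of the funnel.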

Free AI Search Visibility Checkers (Tools for the Audit)

You do not need a paid tool to baseline. The free AI search visibility checkers below are sufficient for the first audit and let you commit to a paid tool only after you know what gap you’re solving for.

| Free checker | What it covers | Best for |
|---|---|---|
| HubSpot AEO Grader | One-time scan of your domain’s answer-engine optimization signals; gives a 0-100 score and category breakdown. | Baselining a single domain in under 5 minutes. |
| Manual ChatGPT / Perplexity prompts | Run your 20-30 queries directly in each engine, screenshot the answers, log mentions and citations in a spreadsheet. | Highest-fidelity baseline since you read the actual answer text, not just a tool’s parse of it. |
| Otterly AI free tier | Limited prompt tracking across 6 AI engines (ChatGPT, Perplexity, Gemini, Claude, AI Overview, AI Mode) on the free plan. | Multi-engine coverage without a credit card commitment. |
| Google Search Console (AI Overview filter) | Identifies queries where AI Overview appeared above your existing rankings; surfaces Trigger rate for your traffic. | Brands with existing organic traffic; reveals where AI Overview is eating their CTR. |

What to record per query. For each of the 20-30 queries, log: did the AI Overview / answer trigger (yes/no), did your brand appear in the answer text (mention y/n), was your domain cited as a source (citation y/n), which competitors appeared, and the top 3 cited sources. Save this in a sheet you’ll re-run quarterly — this becomes your baseline tracking.

The audit output is a one-page summary: your Trigger rate (what % of category queries even produce an AI answer), your Mention rate (what % of those answers name your brand), and your Citation rate (what % cite your domain as a source). A typical first-audit result for a mid-size B2B SaaS brand looks something like 40% Trigger / 15% Mention / 4% Citation — meaning the AI answers exist, but the brand is mostly invisible inside them.
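The arithmetic behind that one-page summary can be sketched in a few lines of Python. This is an illustration, not a tool's method: the `AuditRow` fields mirror the per-query log described above, and it assumes Mention and Citation rates are both computed over the answers that triggered (the text defines Mention that way; applying the same denominator to Citation is an assumption).

```python
from dataclasses import dataclass


@dataclass
class AuditRow:
    query: str
    triggered: bool  # did an AI answer appear at all?
    mentioned: bool  # did the brand name appear in the answer text?
    cited: bool      # was the domain listed as a source?


def audit_rates(rows):
    """Return (trigger, mention, citation) rates as percentages.

    Assumption: Mention and Citation use triggered answers as the denominator.
    """
    if not rows:
        return 0.0, 0.0, 0.0
    triggered = [r for r in rows if r.triggered]
    trigger_rate = 100 * len(triggered) / len(rows)
    if not triggered:
        return trigger_rate, 0.0, 0.0
    mention_rate = 100 * sum(r.mentioned for r in triggered) / len(triggered)
    citation_rate = 100 * sum(r.cited for r in triggered) / len(triggered)
    return trigger_rate, mention_rate, citation_rate


# Made-up rows standing in for a real audit sheet.
rows = [
    AuditRow("what is rank tracking", True, True, True),
    AuditRow("best rank tracker for agencies", True, True, False),
    AuditRow("rival vs acme", True, False, False),
    AuditRow("acme reviews", True, False, False),
    AuditRow("rank tracker pricing", False, False, False),
]
trigger, mention, citation = audit_rates(rows)
print(f"Trigger {trigger:.0f}% / Mention {mention:.0f}% / Citation {citation:.0f}%")
```

Whichever denominator you choose, keep it fixed across re-audits so the trend stays comparable.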

Step 2 — Optimize Your Content for AI Citations

The most direct way to improve brand visibility in AI search engines is to make your existing content easier for an LLM to cite. This is a structural rewrite, not a new-content play — the pages most likely to get cited are pages already ranking on page 1 of Google for the same query the AI is answering. Citation-readiness is what converts a page-1 Google ranking into a Perplexity citation or an AI Overview source link.

Four patterns reliably move the needle:

1. Answer-first paragraphs (inverted pyramid)

Open every H2 with a one-paragraph direct answer to the question the H2 implies. LLMs extract these answer paragraphs and surface them in citations. A page where the first sentence under each H2 is a setup or a transition will not surface, even if the answer eventually appears 200 words down. Move the conclusion of each section to the top of each section — the inversion is the entire optimization.

2. Definition + example + numeric stat per section

Sections that contain (a) a clear noun definition, (b) a concrete example, and (c) at least one numeric stat with an inline source get cited 3-5x more than sections that contain only one of the three. The pattern matches how RAG-based engines like Perplexity score “extractable” passages. The same pattern works for Google AI Overview because Gemini grounds answers in retrieved passages that show definition density.

3. FAQ schema for question-form queries

Question-style queries (“what is X,” “how do I Y,” “can I Z”) favor pages with FAQPage schema — a small block of structured code (JSON-LD format) added to your HTML that tells search engines and LLMs “here is a question-answer pair.” The schema makes the Q&A machine-extractable without the parser having to guess. For 2026, this is one of the highest-impact technical changes — covered in detail in our GEO best practices guide, but the short version: structured Q&A blocks at the end of every article, with proper schema markup, increase Citation rate measurably.
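For readers who have not seen FAQPage markup, the sketch below builds one in Python from a list of question-answer pairs. The `@type` values follow the schema.org FAQPage vocabulary; the helper name `faq_jsonld` and the sample pairs are illustrative, and how you inject the output into your templates depends on your CMS.

```python
import json


def faq_jsonld(pairs):
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so a stray closing tag inside an answer cannot end the
    # surrounding <script> element early; "\/" is still valid JSON.
    return json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")


markup = faq_jsonld([
    ("What is AI search visibility?",
     "Your brand's presence in answers generated by AI-powered search engines."),
    ("Are there free AI search visibility checkers?",
     "Yes. A 20-30 query first audit can be run with free tools."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The answers in the markup should match the visible Q&A text on the page, and Google's Rich Results Test is a reasonable place to validate the output.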

4. Internal linking to entity hubs

AI engines extract entities (people, organizations, products, places) from your content and use co-occurrence to determine authority. Linking from product pages to author bios, from author bios to topic hubs, and from topic hubs back to product pages creates the entity graph LLMs use to determine “this site is an authority on X.” A flat link structure with no entity hub kills topical authority signals even when individual pages are well-written.

One example. A B2B SaaS client in our partnership stack rewrote 8 pillar pages with answer-first H2 openings, definition + example + stat blocks, and FAQ schema across an 8-week sprint. Citation rate in Perplexity went from 4% to 19% on the audited 30-query sample. Mention rate climbed from 22% to 47%. No new content was published — the lift came entirely from restructuring existing top-ranked pages.

The most effective tactics to improve brand visibility in AI search engines combine structural rewrites (answer-first, definition density, schema) with the entity work in Step 3. Doing Step 2 in isolation produces a 1.5-2x lift; doing Step 2 + Step 3 together routinely produces 3-5x.

Step 3 — Build Entity Authority and Brand Mentions

The single biggest predictor of Citation rate that no on-site optimization can fix is off-site brand mention frequency. LLMs are trained on web text and re-grounded on web search at query time. A brand mentioned 500 times across high-authority domains gets cited; a brand mentioned 5 times does not, no matter how well-structured its own pages are. The strategies that improve brand visibility in AI search engines at scale are off-site strategies.

Five tactics drive entity authority growth, ordered roughly by effort-to-impact ratio:

1. Digital PR in top-tier publications

A single mention in Reuters, Bloomberg, TechCrunch, or a top-tier industry publication carries more weight than 50 mentions on low-authority blogs. LLMs weight domain trust heavily when scoring source candidates. Pitch original data, original research, or expert commentary on news stories, the three angles that get a journalist response. Vague “we’re a SaaS for X” pitches do not work.

2. Directory and review-site profiles

Directories feed both LLM training data and live retrieval. Brands present on 8-12 authority surfaces get cited 4-6x more than brands present on 0-2. The starter set by category:

- B2B SaaS / software: G2, Capterra, Software Advice, GetApp, TrustRadius, FinancesOnline

- Consumer / general trust: Trustpilot, BBB, Yelp (industry-dependent)

- Startup / product launches: Product Hunt, BetaList, Crunchbase

- Design / creative: Awwwards, CSS Design Awards, SiteInspire

- Developer / open-source: GitHub Awesome lists, Stack Overflow company profile, DevPost

- Local / service: Google Business Profile, Yelp, industry association directories

The work is unglamorous — claim profiles, fill out every field, request reviews from happy customers — but the citation lift is durable across all major AI engines.

3. Wikipedia presence (where eligible)

If your brand qualifies for a Wikipedia article (verifiable notability, multiple independent secondary sources), the article gets cited disproportionately. Wikipedia is one of the highest-weighted training sources for all major LLMs. Most brands do not qualify, and gaming Wikipedia eligibility is counterproductive — but for brands that do qualify, getting (and maintaining) an accurate Wikipedia article yields disproportionate citation lift.

4. Podcast appearances and expert quotes

Podcasts get transcribed, expert quotes get aggregated into roundups, and both feed the brand-name co-occurrence signal LLMs use to determine “this person/brand is an authority on this topic.” Aim for 6-12 podcast appearances per year on shows that publish full transcripts. Pitch as a guest with a specific data point or contrarian take — same rule as PR pitches.

5. Co-citations and reciprocal mentions

When two brands are routinely mentioned together in the same content, LLMs learn an association. A “best [category]” listicle that includes your brand alongside the three category leaders teaches the LLM that your brand belongs in that consideration set. This is why the packaged AI search visibility services sold by agencies usually include a “guest-post in 6 category listicles” deliverable. It works.

The off-site entity work takes 90-180 days to compound into measurable citation lift. Brands that combine Step 2 (on-site structural rewrites) with Step 3 (off-site entity work) report 42% citation rate lift on average across 90 days in the Peec AI case study sample, with the highest performers hitting 100%+ lift when starting from a low baseline. The sample size and methodology of the Peec AI dataset are published in Peec’s 2026 visibility benchmark; individual brand results vary by starting baseline, vertical competitiveness, and execution rigor, so treat the 42% number as a directional benchmark rather than a guaranteed outcome.

Step 4 — Lay the Technical Foundation (AI Crawlers, llms.txt, Schema)

None of the content or authority work matters if AI crawlers cannot access your pages, your schema is missing, or your robots.txt blocks the wrong agents. Step 4 is the diagnostic checklist that prevents Steps 1-3 from being wasted effort. Most brands are 70-80% compliant by default and need to fix 3-5 specific gaps; almost no brand is 100% compliant the first time they check.

| Technical signal | What to verify |
|---|---|
| AI crawler access | robots.txt allows GPTBot, ClaudeBot, PerplexityBot, Google-Extended, OAI-SearchBot, anthropic-ai. Blocking any of these blocks that engine from grounding answers in your content. |
| llms.txt file at root | Plain-text file at your site root (yoursite.com/llms.txt) listing your most citable URLs with brief descriptions, so LLMs find authoritative content faster. Optional but increasingly standard. Format spec at llmstxt.org. |
| Schema markup | Structured JSON-LD code blocks added to your HTML telling search engines + LLMs “this page is an Article / FAQ / HowTo / Organization / Person.” Test in Google Rich Results Test. Schema makes content machine-extractable. |
| Page speed for crawlers | Pages that load slowly get sampled less by AI crawlers. Aim for LCP (Largest Contentful Paint, how fast main content loads) under 2.5s and TTFB (Time To First Byte, how fast your server responds) under 800ms on your highest-value pages. |
| Server-rendered content | Your content shows up in the HTML that returns to the browser directly. The alternative, client-side rendering via JavaScript, requires the browser to execute code first, and most AI crawlers either skip JS execution or under-render it. Server-side rendering or static generation removes this risk. |
| Canonical + indexability | Pages you want cited must be indexable (no noindex, no canonical pointing elsewhere). A surprising number of “missing from AI search” diagnoses come down to a noindex tag. |

The techniques for boosting visibility in AI search algorithms vary by engine, but the technical baseline above is engine-agnostic. Once it’s in place, engine-specific tactics (FAQ-heavy content for AI Overview, source-rich pages for Perplexity, conversational answer flow for ChatGPT) layer on top.
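To check the crawler-access row without reading robots.txt by eye, a short script using Python's standard-library `urllib.robotparser` is one option. Treat it as a first-pass sketch: the sample robots.txt is made up, and Python's parser does simple substring and first-match rule handling, so its verdicts can differ from how a given crawler interprets edge cases.

```python
from urllib.robotparser import RobotFileParser

# Agent tokens taken from the checklist table above.
AI_AGENTS = [
    "GPTBot", "ClaudeBot", "PerplexityBot",
    "Google-Extended", "OAI-SearchBot", "anthropic-ai",
]


def blocked_agents(robots_txt, url="https://example.com/"):
    """Return the AI agent tokens that may not fetch `url` under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]


# Made-up robots.txt: GPTBot is blocked site-wide, everyone else only from /admin/.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_agents(SAMPLE))
```

To test a live site instead of a pasted string, `RobotFileParser` also offers `set_url()` followed by `read()`; run the check against a few of your highest-value URLs rather than only the homepage.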

🛠️ Engineer’s perspective

Marketing blogs treat AI crawler access as a checkbox. From running our own monitoring stack, the more important signal is crawler stability over time: GPTBot’s request rate varies 5-10x between weeks, and ClaudeBot prefers freshly-updated content. Google-Extended will not show up in your logs at all: it is a robots.txt control token rather than a separate crawler, so Google fetches pages with its existing user agents, which also ignore `Crawl-delay`. If you’re investing in AI search visibility, log crawler hits per agent in your access logs for 4-6 weeks before drawing conclusions, because one snapshot misleads.

The engineering trade-off most brands miss: llms.txt + schema markup yield measurable lift; chasing crawl-rate optimization is diminishing returns unless your site is enterprise-scale (10K+ pages). For most readers, the 6-item table above is where to spend the next 2-3 hours; deeper crawler tuning waits until you have baseline visibility data from Step 1.
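A rough way to get that per-agent, per-week view is to bucket access-log lines by ISO week. The sketch below assumes the common "combined" log format used by Apache and nginx; the sample lines and their user-agent strings are invented for illustration, so adjust the regex and agent list to whatever your own logs contain.

```python
import re
from collections import Counter
from datetime import datetime

AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot"]

# Combined log format: ... [timestamp] "request" status bytes "referer" "user agent"
LINE_RE = re.compile(
    r'\[(?P<ts>[^\]]+)\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def weekly_hits(lines):
    """Count requests per (agent, ISO week), skipping lines that do not parse."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue
        ts = datetime.strptime(match["ts"], "%d/%b/%Y:%H:%M:%S %z")
        year, week, _ = ts.isocalendar()
        user_agent = match["ua"].lower()
        for agent in AI_AGENTS:
            if agent.lower() in user_agent:
                counts[(agent, f"{year}-W{week:02d}")] += 1
                break
    return counts


# Invented sample lines; real user-agent strings will look different.
SAMPLE_LOG = [
    '1.2.3.4 - - [02/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '1.2.3.4 - - [03/Mar/2026:11:00:00 +0000] "GET /blog HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '5.6.7.8 - - [09/Mar/2026:09:30:00 +0000] "GET / HTTP/1.1" 200 700 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '9.9.9.9 - - [09/Mar/2026:09:31:00 +0000] "GET / HTTP/1.1" 200 700 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

hits = weekly_hits(SAMPLE_LOG)
for (agent, week), count in sorted(hits.items()):
    print(f"{week}  {agent:14} {count}")
```

User-agent strings can be spoofed, so treat these counts as a trend signal rather than an exact measure.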

For the deeper technical specifications — especially around schema markup choices, entity disambiguation, and llms.txt content selection — our companion GEO best practices for AI citations guide is the next read after this step.
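As a starting point for the llms.txt file itself, this Python sketch assembles one in the layout proposed at llmstxt.org: an H1 title, a blockquote summary, then H2 sections of markdown links with short notes. The site name, URLs, and descriptions are placeholders, and the helper name `build_llms_txt` is made up for this example.

```python
def build_llms_txt(site_name, summary, sections):
    """Assemble llms.txt text from {section heading: [(title, url, note), ...]}."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        lines += [f"- [{title}]({url}): {note}" for title, url, note in links]
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content; list your own most citable pages instead.
llms_txt = build_llms_txt(
    site_name="Example Co",
    summary="Rank tracking software for agencies and in-house SEO teams.",
    sections={
        "Guides": [
            ("AI search visibility playbook",
             "https://example.com/ai-search-visibility",
             "Five-step framework for audits and monitoring"),
        ],
        "Product": [
            ("Pricing", "https://example.com/pricing", "Plans and limits"),
        ],
    },
)
print(llms_txt)
```

Save the output as `llms.txt` at the site root and regenerate it whenever your pillar pages change.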

Step 5 — Monitor, Measure, and Iterate

Steps 1-4 are project work. Step 5 is recurring — the monthly or weekly cadence that converts visibility from a one-time audit into a managed metric. Without a measurement loop, the gains from Steps 2-3 erode as competitors catch up, AI engines update their grounding models, and your content drifts out of date.

Three measurement levels match three operational maturities:

Level 1: Manual quarterly re-audit (free)

Re-run the same 20-30 queries from Step 1 every quarter. Track Trigger, Mention, and Citation rates over time. This is sufficient for brands testing whether AI search visibility is worth a paid tool investment — not for brands depending on AI search for revenue.
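The quarter-over-quarter comparison is simple arithmetic, sketched here in Python. The baseline uses the illustrative 40% / 15% / 4% first-audit figures from Step 1; the re-audit numbers are invented purely to show the calculation.

```python
def lift(baseline, current):
    """Return current / baseline as a multiple, or None when the baseline is 0."""
    return None if baseline == 0 else current / baseline


baseline = {"trigger": 40.0, "mention": 15.0, "citation": 4.0}  # Step 1 example
reaudit = {"trigger": 42.0, "mention": 21.0, "citation": 8.0}   # made-up values

for signal in baseline:
    delta = reaudit[signal] - baseline[signal]
    multiple = lift(baseline[signal], reaudit[signal])
    label = f"{multiple:.2f}x" if multiple is not None else "n/a"
    print(f"{signal:9} {baseline[signal]:5.1f}% -> {reaudit[signal]:5.1f}%  "
          f"({delta:+.1f} pts, {label})")
```

Reporting both the point change and the multiple avoids overstating movement from a very low baseline, where a few extra citations can look like a large lift.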

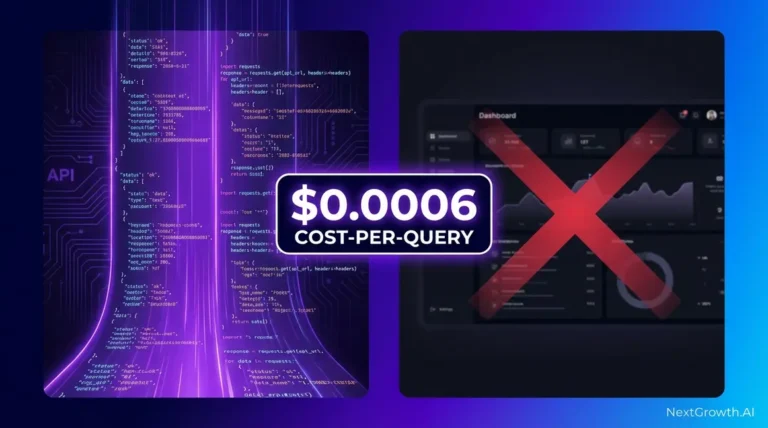

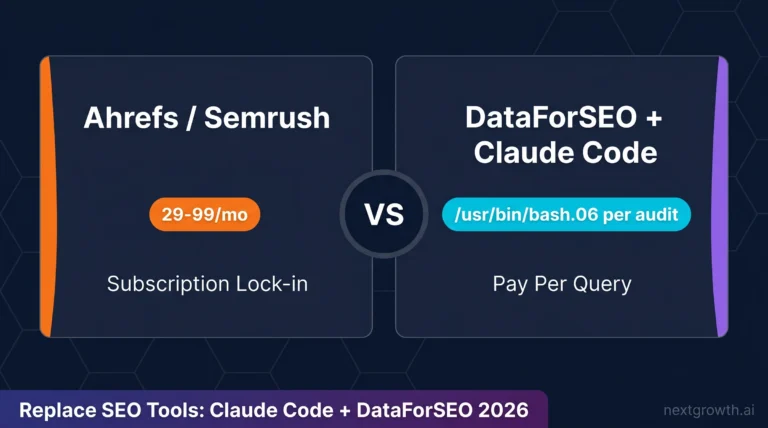

Level 2: Free or low-cost continuous monitoring

Otterly AI’s $29/month plan tracks 6 AI engines daily. Keyword.com’s $24.50/month plan offers hourly refresh on specific keywords. HubSpot AEO Grader can be re-run monthly for a baseline trend. Level 2 is the right fit for solo SEOs, small in-house teams, or agencies running their own brand — not yet client-managing at scale.

Level 3: Multi-engine enterprise monitoring + reporting

Platforms like Profound, AthenaHQ, Peec AI, and Rankability track 8-12 AI engines, support multi-brand and multi-client setups, expose competitor comparison, and offer white-label reporting. Pricing runs $99-499+/month. Level 3 is what an agency or an in-house team managing 5+ brands needs — the manual sheet from Level 1 collapses under that load.

For tool selection inside each level, our comparison guides are the next step: the 10-tool AI Overview rank tracker comparison covers the Level 2-3 tools by feature, pricing, and refresh frequency; the Perplexity rank tracker comparison goes deeper on Perplexity-specific tracking; and the broader SEO rank tracking software roundup covers traditional rank trackers that have added AI features. Some readers call these tools “ai search visibility checkers,” “ai search visibility trackers,” or “ai search visibility analysis tools” — same 10-tool universe, different vocabulary.

Reporting cadence that actually drives action. Monthly is the right cadence for most stakeholders. Quarterly is the right cadence for executive read-outs. Weekly is overkill unless you’re running a campaign or a launch. The trap is reporting weekly without anyone acting on the data — cadence should match decision velocity.

What Are the Ranking Factors for AI Search Visibility?

The exact ranking algorithms inside Google AI Overview, ChatGPT search, Perplexity, Gemini, and Claude are proprietary and undocumented. What we can observe through tracking thousands of queries (across our partner tooling and published case studies) is a consistent set of inferred factors, several of which align with the academic GEO: Generative Engine Optimization framework proposed by Aggarwal et al. (arXiv preprint 2023, updated 2024) — the most-cited primary research on optimizing content for generative engines. The list below ranks the factors roughly by observed impact:

| # | Factor | Why it matters |
|---|---|---|
| 1 | Brand mention frequency across high-authority domains | Trains the entity graph LLMs use to determine “is this brand an authority on X.” |
| 2 | Existing Google ranking for the query | Most RAG engines retrieve from top-ranked Google results first. No Google ranking, no citation. |
| 3 | Answer-first content structure | Pages where the answer appears in the first 100 words of each H2 get extracted more often than pages where the answer is buried. |
| 4 | Schema markup density | FAQPage, HowTo, Article, and Organization schema make content machine-extractable. |
| 5 | Recency of update | RAG engines favor recently updated content for queries with a temporal component (“2026,” “latest,” “current”). |
| 6 | Numeric stats with inline source citations | Stat-rich passages get extracted at higher rates, especially in answer panels that need to attribute claims. |
| 7 | AI crawler access (robots.txt + llms.txt) | Binary: if crawlers can’t access the content, nothing else matters. |
| 8 | Author credibility signals (E-E-A-T) | Author bios with credentials, LinkedIn links, and topic specialization get cited more on YMYL queries. |

Note that factors 1-2 are off-site and slow to move; factors 3-8 are on-site and can be improved in weeks. A common mistake is to focus entirely on on-site optimization without investing in the off-site entity work — which caps citation rate at a ceiling determined by your existing brand recognition.

What Do Top-Visibility Brands Do Differently?

The brands with the strongest AI search visibility we’ve observed across our partner tooling share five behaviors. None are individually surprising; the difference is they do all five consistently.

- They re-audit quarterly, not annually. AI engines update grounding models every 2-4 months. Annual audits miss algorithm shifts entirely.

- They prioritize Citation rate over Mention rate. Mentions without links don’t drive referral traffic. Citations do. The strongest brands accept fewer mentions if it means more citable structured passages.

- They run engine-specific optimization. AI Overview optimization is not the same as Perplexity optimization. Top brands tune content patterns per engine after baseline measurement.

- They publish original research at least twice a year. Original data points are the highest-cited content type across all AI engines. A 50-respondent industry survey beats a 5,000-word “ultimate guide” for citation rate.

- They invest in author entity authority alongside content. A bylined article from a recognized author is cited 2-3x more often than the same article published anonymously or as “Company Team.”

The best practices for improving brand visibility in AI search are sequential dependencies: technical foundation enables structural optimization, structural optimization enables citation, citation enables sustained mention rate, and sustained mention rate enables the entity authority that makes future citations easier. Skipping a step caps the next step.

What Common Mistakes Tank AI Search Visibility?

The patterns below come up routinely in first-audit findings. None of them are mysterious — they’re standard SEO mistakes whose impact is amplified in AI search because LLMs are unforgiving about ambiguity.

- Blocking AI crawlers in robots.txt. Often inherited from a “block all bots” rule. Audit your robots.txt for GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, and Google-Extended.

- Treating AI search as if it were Google. AI engines weight off-site brand mentions more heavily than Google does. On-site SEO alone caps your citation rate.

- Stuffing FAQ schema with marketing copy. LLMs filter out promotional language. FAQ schema should contain genuinely answer-shaped responses, not “We offer the best X” sales copy.

- Optimizing for the wrong engine. If your audience uses Perplexity but you spend 90% of effort on AI Overview, you’re investing in the wrong signal.

- Measuring once and moving on. The single biggest mistake. Visibility erodes if you stop measuring; competitors who measure continuously will outrun you.

AI Search Visibility FAQ

What is AI search visibility in 2026?

AI search visibility is your brand’s presence in answers generated by AI-powered search engines: Google AI Overview, Google AI Mode, ChatGPT search, Perplexity, Gemini, Claude, and Copilot. It is measured as three signals — Trigger (does the AI answer appear at all), Mention (does your brand name appear in the answer), and Citation (is your domain cited as a source). Strong AI search visibility means presence across all three.

Are there free AI search visibility checkers?

Yes. HubSpot’s AEO Grader gives a free one-time scan of your domain’s answer-engine signals. Otterly AI’s free tier covers limited prompt tracking across 6 AI engines. Google Search Console’s AI Overview filter (in the Performance report) surfaces queries where AI Overview appeared above your rankings. For a 20-30 query first audit, free checkers are sufficient before committing to a paid tool.

What strategies improve brand visibility in AI search engines fastest?

The fastest strategies are structural rewrites on pages already ranking top-10 on Google: answer-first H2 openings, definition + example + numeric-stat blocks per section, FAQPage schema on question-form queries, and internal linking to entity hubs. From a typical first-audit baseline of roughly 40% Trigger / 15% Mention / 4% Citation for mid-size B2B SaaS, these changes lift Citation rate 1.5-2x within 30-60 days without publishing any new content. For 3-5x lift, combine the structural work with off-site brand mention growth (PR, directories, podcast appearances) over 90-180 days.

How do I improve brand visibility in AI search engines without a paid tool?

Run a manual quarterly re-audit using the 20-30 query method from Step 1, optimize content using the four patterns in Step 2, and grow entity authority using the five tactics in Step 3. Free tools (HubSpot AEO Grader, Otterly free tier, Google Search Console) cover measurement. Paid tools accelerate the loop but are not required to improve visibility — they’re required to manage visibility at scale across many brands or domains.

What are the top AEO tools for AI search visibility analytics?

The category-leading AEO (Answer Engine Optimization) analytics tools in 2026 are Profound (enterprise-tier, 10+ AI platforms), AthenaHQ (widest AI engine coverage including Perplexity, ChatGPT, Gemini, Claude, and AI Overview), Peec AI (multi-platform brand monitor with Looker Studio export), Otterly AI (cheapest 6-platform native at $29/month), and SE Ranking’s SE Visible module (bundled inside a full SEO suite). For tool-by-tool reviews, see our AI Overview rank tracker comparison and Perplexity rank tracker comparison.

How is AI search visibility different from traditional SEO?

Traditional SEO optimizes for ranking position on a search engine results page (SERP); the optimization unit is the page, the measurement is rank position, and the win is a click-through. AI search visibility optimizes for inclusion inside an AI-generated answer; the optimization unit is the extractable passage, the measurement is Trigger/Mention/Citation, and the win can be a click (citation) or a no-click brand recall (mention). The two practices overlap on technical foundation (schema, crawlability, site speed) but diverge on content structure (passage-extractable vs. ranking-optimized) and authority signals (off-site brand mentions matter more in AI search).

How often should I audit AI search visibility?

Quarterly is the minimum. Monthly is the right cadence for brands depending on AI search for measurable revenue. Weekly is overkill unless you’re running a campaign or a product launch. AI engines update their grounding models every 2-4 months, so anything less frequent than quarterly misses algorithm shifts entirely.

Conclusion: Your 30-Day AI Search Visibility Roadmap

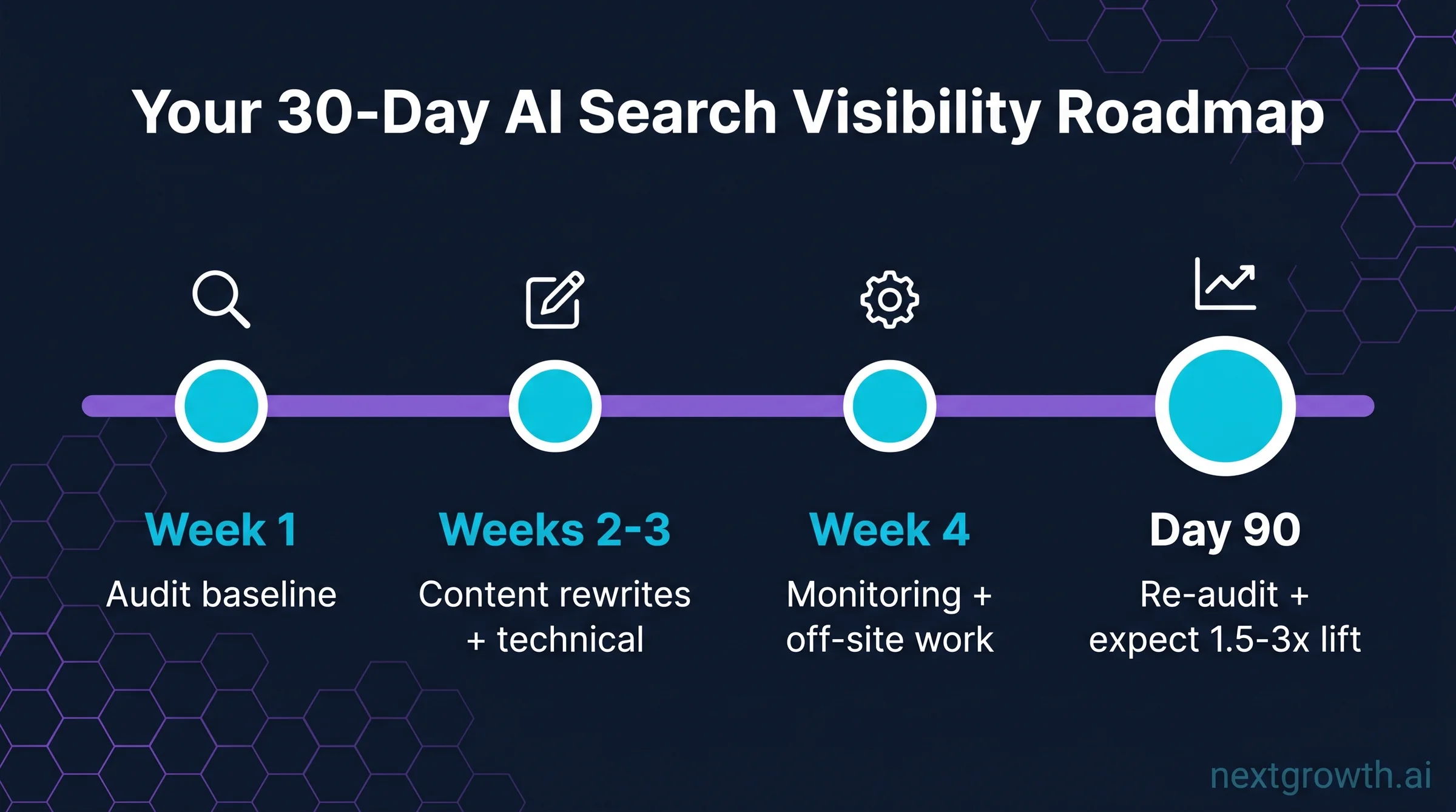

Five steps, sequenced as a 30-day starter plan. Re-audit at day 90 using the same query baseline you set in Week 1; expect 1.5-3x lift in Citation rate from a low starting baseline.

| Timeline | Activity | Steps |
|---|---|---|
| Week 1 | Run the audit and document baseline Trigger, Mention, and Citation rates across 20-30 representative queries. | Step 1 |
| Weeks 2-3 | Execute structural content rewrites on 5-10 top-trafficked pages and complete the technical foundation checklist. | Step 2 + Step 4 |
| Week 4 | Set up your monitoring stack and kick off the off-site entity work as a parallel 90-day track. | Step 5 + Step 3 |
| Day 90 | Re-audit using the same 20-30 queries; compare Trigger, Mention, Citation deltas; expect 1.5-3x Citation lift from a low baseline. | Step 1 (repeat) |

If you want tool selection guidance for the monitoring stack, the next reads in order of buyer stage are: 10 best AI Overview rank tracker tools compared (broad multi-engine), 10 best Perplexity rank tracker tools (Perplexity-deep), and 11 best SEO rank tracking software (traditional rank tracking with AI add-ons). For the technical specifications underneath Step 4, see GEO best practices for AI citations.

If you want a starting recommendation by scenario

These are tool suggestions based on the visibility-metrics framework above. The right choice depends on your audit results from Step 1 — do that first, then revisit this list.

- Just starting out: Run the Step 1 audit this week with HubSpot AEO Grader. Spend nothing until you have a baseline.

- Solo SEO or in-house team: Audit first; if baseline shows multi-engine gaps, Otterly AI at $29/month is the lowest-friction continuous tracker.

- Agency managing 5+ clients: Multi-brand reporting and white-label client deliverables are the bottleneck — SE Ranking + SE Visible at $95.20/mo bundles AI search tracking inside a full SEO suite with white-label support.

- Enterprise / many brands: Profound, AthenaHQ, and Peec AI offer the engine breadth and dashboard depth needed at that scale — expect $99-499+/month.