AI Search Visibility Metrics: 3 Essential Signals to Track

Skim path: 3 Signals defined → Which matters most → How to measure

The AI search visibility metrics that measure brand presence inside generative answers are three independent signals — Trigger, Mention, and Citation — that need separate measurement and separate optimization. A brand can score high on one and zero on another, which is why “we don’t show up in AI search” is rarely a single problem with a single fix.

This guide defines each of the three signals, explains how they relate, maps each to the business goal it best serves, and shows how to measure them in practice. If you’ve read our AI search visibility playbook and want to go one level deeper on the measurement vocabulary, this is the spoke that does it.

🟢 New to AI search measurement? Start here

You don’t need to track all three signals on day one. The simplest starting practice: run 20-30 representative queries through Google AI Overview, ChatGPT, and Perplexity once, log whether your brand appears at all (Mention or Citation), and ignore the engine-specific nuance for now. Once you have a baseline, the three-signal framework below tells you what to optimize next.

Contents

- Why Use AI Search Visibility Metrics at All?

- What Is the Trigger Signal?

- What Is the Mention Signal?

- What Is the Citation Signal?

- How Do Trigger, Mention, and Citation Relate?

- Which Signal Matters Most for Your Business Goal?

- How Do You Measure Each Signal in Practice?

- FAQ

- What is the difference between AI search visibility metrics and traditional SEO ranking?

- Is Citation always more valuable than Mention?

- How often should I measure AI search visibility?

- Why do I see different Mention/Citation results in ChatGPT vs Perplexity for the same query?

- What is “share of voice” in AI search and how does it relate to these three signals?

Why Use AI Search Visibility Metrics at All?

Traditional SEO measures one number: where you rank in Google’s blue-link results. AI search engines (Google AI Overview, ChatGPT search, Perplexity, Gemini, Claude, Copilot) surface answers, not rankings. The answer panel can appear or not appear; your brand can be named or not named; your domain can be linked or not linked. Three independent variables, three measurements.

Why this matters: 61% of brands ranking on page 1 of Google are completely absent from Perplexity responses for the same category queries (Keyword.com 60-brand B2B analysis, 2026). Other brands are cited but not mentioned. Some are mentioned but not cited. For others, no AI answer appears at all in their category. Without three-signal measurement, you cannot tell which problem you have, which means you cannot tell which fix to invest in.

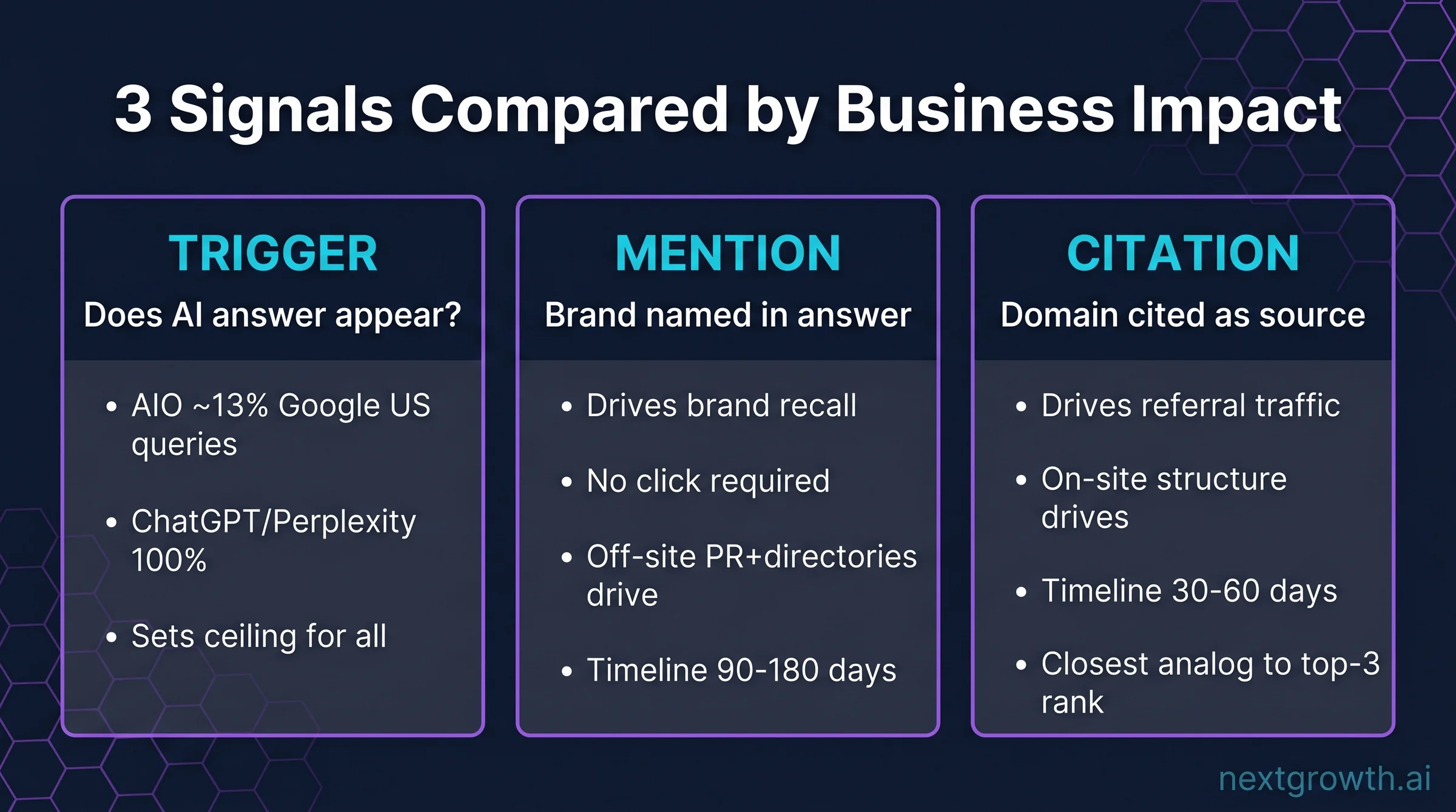

The three signals also do different jobs: Citation drives referral traffic, Mention drives brand recall, and Trigger sets the ceiling for both. Optimizing for one without considering the others is how brands end up well-cited but losing share-of-voice to a competitor that gets mentioned more often. The framework forces you to measure each, then weight effort according to which one matters for your business goal.

What Is the Trigger Signal?

Trigger measures how often an AI answer appears at all for queries in your category. If you sell project management software and someone searches “best project management tool for small teams,” does Google AI Overview show up? Does Perplexity generate a direct answer? Trigger rate is the percentage of your category queries that produce an AI answer.

Trigger sets the ceiling for everything else. If AI Overview triggers on 13% of US Google queries (Semrush Q2 2026), then 87% of queries are still pure blue-link SERPs where AI search visibility doesn’t apply. For a category where Trigger rate is 5%, AI search optimization is a smaller priority. For a category where Trigger rate is 70%, it’s the dominant priority.

How Trigger varies by engine

- Google AI Overview: ~13% of US queries trigger AIO (Semrush Q2 2026). Higher in health, finance, and how-to categories; lower in branded queries.

- ChatGPT search: Triggers on essentially 100% of category queries (it’s a conversational interface). The “ceiling” question shifts to “what percentage of buyer journeys touch ChatGPT?”

- Perplexity: 100% trigger rate by design (every query produces an answer). Same shift as ChatGPT.

- Gemini, Claude: Similar 100% generative-answer behavior.

The practical implication: Trigger is mainly a metric for Google AI Overview — the engine where AI answers compete with the traditional SERP. For conversational engines (ChatGPT, Perplexity, Gemini, Claude), assume 100% trigger and focus measurement on Mention + Citation.

What Is the Mention Signal?

Mention measures how often your brand name appears inside the answer text, with or without a link. If the AI Overview lists “popular project management tools include Asana, Trello, and Monday,” that’s three brand mentions. None of those mentions necessarily includes a clickable link — the AI is paraphrasing what it learned from training data and grounded retrieval, not always citing source URLs.

Mention rate is the brand-recall metric. It drives consideration and category association without requiring a click. A reader who sees “Asana, Trello, and Monday” while researching project management adds those three to their mental shortlist even if they don’t click. In LLM-mediated search, a mention without a link is still attribution — it builds brand-to-category linkage in the reader’s mind.

LLMs surface brand names based on training-data frequency and web-retrieval frequency. A brand mentioned 500 times across high-authority domains is far more likely to show up in the answer than a brand mentioned 5 times. This is fundamentally different from traditional SEO: on-site optimization alone does little to move Mention rate. The main driver is off-site brand mention frequency across authority domains (PR coverage, directory listings, podcast transcripts, Wikipedia, expert quotes).

Practical pattern: brands that invest in PR + directory presence + podcast appearances see Mention rate climb over 6-12 months. Brands that invest only in on-site optimization see Citation rate climb (which we’ll define next) but Mention rate stay flat. The two signals respond to different levers.

What Is the Citation Signal?

Citation measures how often your domain appears as a source URL the AI explicitly cites in its answer panel. Perplexity surfaces citations prominently (numbered footnotes in the answer); Google AI Overview shows source links in the right-side panel; ChatGPT search includes citation chips inline. Citation is the closest analog to a top-3 organic ranking in traditional search — it drives both attribution and click-through.

Citation is the metric that converts to revenue. When your domain is cited, readers can click through to your site. When it’s not cited, even a strong Mention doesn’t drive direct traffic. For brands measuring AI search visibility ROI, Citation rate is usually the lead metric because it ties most directly to referral traffic and pipeline contribution.

How Citation differs from Mention

| Aspect | Mention | Citation |
|---|---|---|
| What it captures | Brand name in answer text | Domain URL in answer panel |
| Drives | Brand recall, consideration | Referral traffic, attribution |
| Primary driver | Off-site brand mention frequency | On-site structural optimization + Google ranking + schema |
| Optimization timeline | 90-180 days (slow, compound) | 30-60 days (faster, structural) |

One concrete example: a B2B SaaS client in our partnership stack restructured 8 pillar pages with answer-first H2 openings, FAQPage schema, and entity hub linking. Citation rate in Perplexity went from 4% to 19% in 8 weeks. Mention rate climbed from 22% to 47% over the same window because the on-site structural work also amplified the off-site mentions the brand was already accumulating. The two signals moved together because the optimization addressed both layers; in isolation, on-site work alone would have moved Citation only.

How Do Trigger, Mention, and Citation Relate?

The three signals form a funnel:

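Trigger → Mention → Citation. A query has to trigger an AI answer first. If the answer appears, your brand has to be mentioned in it. If mentioned, your domain has to be cited as a source. Each step can fail independently, and each failure has a different remedy. The ordering is a diagnostic aid rather than a strict dependency: a domain can be cited as a source even when the brand name never appears in the answer text, which is the last pattern in the table below.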

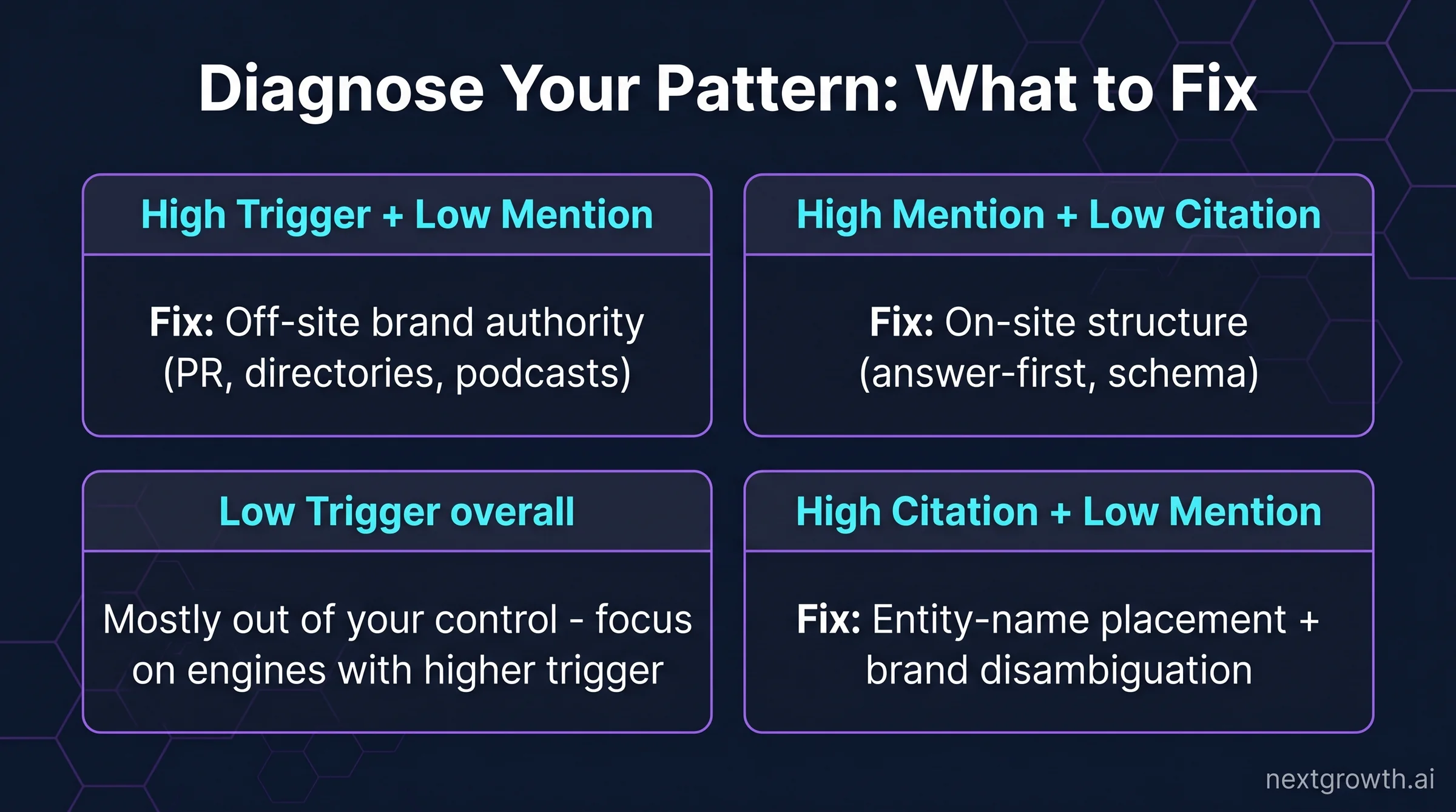

| Pattern | What it means | What to fix |
|---|---|---|
| Low Trigger | AI answer rarely appears for your category queries | Mostly out of your control: trigger rate is set by Google/AI engines per category. Focus AI investment on engines with higher trigger. |
| High Trigger, Low Mention | AI answers exist but your brand isn’t named | Off-site brand authority work: PR, directories, podcasts, Wikipedia |
| High Mention, Low Citation | Your brand is named but your site isn’t linked as a source | On-site structural work: answer-first H2 openings, schema markup, entity hub linking |
| High Citation, Low Mention | Your site is linked but your brand name isn’t surfaced | Entity-name placement in own content + alt-name disambiguation (rare but real) |

The diagnostic value is that the three signals are independently measurable, so you can identify the specific pattern your brand is in and invest accordingly. Without three-signal measurement, the symptom “we’re invisible in AI search” doesn’t tell you which layer of the funnel to fix.
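The pattern table can be turned into a small lookup once you have the three rates. The sketch below is one possible way to do that, not a prescribed method: the function name `diagnose`, the "No gap flagged" label, and especially the threshold defaults are illustrative assumptions that you would tune to your own category.

```python
# Minimal sketch: map three measured rates (0.0-1.0) to a pattern from the table.
# The threshold defaults are illustrative assumptions, not values from this guide.
FIXES = {
    "Low Trigger": "Focus AI investment on engines with higher trigger.",
    "High Trigger, Low Mention": "Off-site brand authority work (PR, directories, podcasts, Wikipedia).",
    "High Mention, Low Citation": "On-site structural work (answer-first H2 openings, schema markup, entity hub linking).",
    "High Citation, Low Mention": "Entity-name placement in own content plus alt-name disambiguation.",
    "No gap flagged": "All three signals clear their thresholds; keep monitoring.",
}


def diagnose(trigger, mention, citation,
             trigger_min=0.30, mention_min=0.30, citation_min=0.15):
    """Return (pattern, suggested fix) for one brand on one engine."""
    if trigger < trigger_min:
        pattern = "Low Trigger"
    elif mention < mention_min and citation >= citation_min:
        pattern = "High Citation, Low Mention"
    elif mention < mention_min:
        pattern = "High Trigger, Low Mention"
    elif citation < citation_min:
        pattern = "High Mention, Low Citation"
    else:
        pattern = "No gap flagged"
    return pattern, FIXES[pattern]


# Example: a conversational engine (trigger assumed 100%) with decent Mention
# but weak Citation.
pattern, fix = diagnose(trigger=1.0, mention=0.47, citation=0.04)
print(pattern, "->", fix)
```

Run it per engine rather than on blended numbers, since the same brand can land in different patterns on different engines.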

Which Signal Matters Most for Your Business Goal?

The right primary signal depends on what you’re trying to achieve:

- Direct revenue / referral traffic / lead generation: Citation is the lead metric. Citation drives clicks to your site; clicks drive form fills and signups. Optimize Citation first.

- Brand awareness / category association / consideration funnel: Mention is the lead metric. Mention drives recall even without clicks — particularly powerful for category leaders who want to stay top-of-mind.

- Defensive moat / competitive positioning: Both Mention and Citation matter, but Mention specifically drives co-occurrence with competitors. If “Asana, Trello, Monday” always appears together, the implicit positioning is “these three are the category.”

- Existing brand with strong off-site authority but weak on-site optimization: You probably have high Mention and low Citation. Invest in on-site work to capture citation lift on existing brand recognition.

- New or under-mentioned brand with good on-site: Reverse pattern — high Citation, low Mention. Invest in off-site brand-building (PR, directories, podcasts) to lift Mention rate.

Most brands need both, but the priority order matters because off-site work (Mention) takes 90-180 days to compound while on-site work (Citation) shows results in 30-60 days. Start with the metric you can move fastest if your goal is to see signal in the first quarter; start with the metric that matches your strategic intent if you’re planning a 12-month investment.

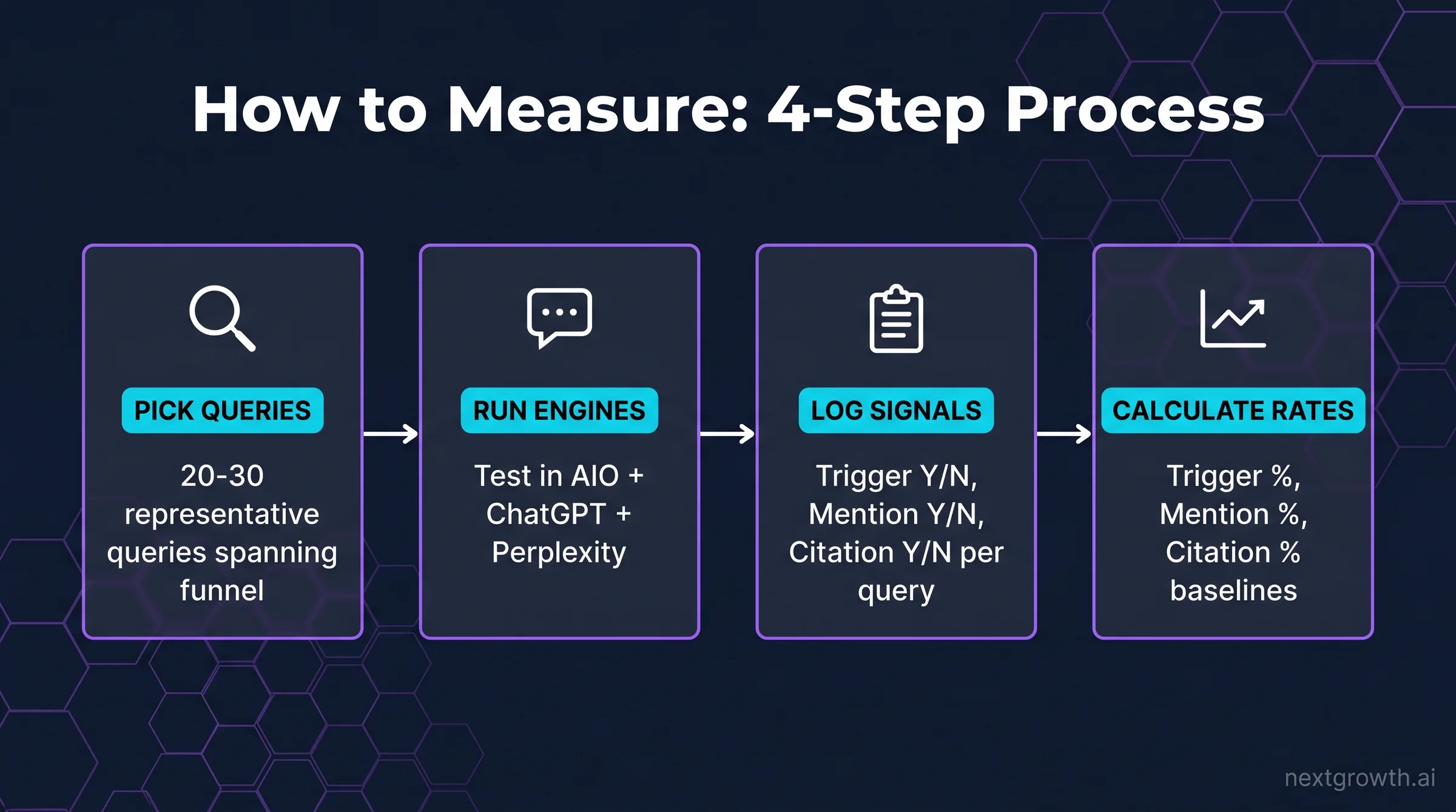

How Do You Measure Each Signal in Practice?

The simplest measurement methodology is manual and free: pick 20-30 representative queries that span your funnel (informational, comparison, buyer intent), run each through Google AI Overview, ChatGPT search, and Perplexity, and log the three signals per query in a spreadsheet. This takes 1-2 hours for an initial baseline. Re-run quarterly to track the trend.

| Signal | What to log per query |
|---|---|
| Trigger | Did an AI answer appear at all? (Y/N). For AIO specifically: did the AI Overview panel render above the SERP? |
| Mention | Did your brand name appear in the answer text? (Y/N). Bonus: count of competitor mentions alongside yours. |
| Citation | Was your domain cited as a source URL? (Y/N). Bonus: which specific page was cited? |
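Once the spreadsheet exists, turning the Y/N columns into per-engine rates takes a few lines of Python. The sketch below assumes a CSV export with hypothetical column names (`query`, `engine`, `trigger`, `mention`, `citation`) and made-up sample rows. It also assumes one particular denominator: Trigger rate is computed over all logged queries, while Mention and Citation rates are computed only over queries where an answer appeared. If you prefer a different convention, change it and keep it consistent across runs.

```python
import csv
import io
from collections import defaultdict

# Hypothetical export of the tracking spreadsheet: one row per (query, engine).
SAMPLE_LOG = """query,engine,trigger,mention,citation
best pm tool for small teams,google_aio,Y,Y,N
best pm tool for small teams,perplexity,Y,Y,Y
asana vs trello,google_aio,N,N,N
asana vs trello,perplexity,Y,N,Y
"""


def is_yes(value):
    """Treat 'Y' / 'y' (with stray whitespace) as True, anything else as False."""
    return value.strip().upper() == "Y"


def signal_rates(rows):
    """Return {engine: {"trigger": r, "mention": r, "citation": r}}.

    Trigger is measured over all queries; Mention and Citation are measured
    over triggered queries only (an assumption, see the text above).
    """
    counts = defaultdict(lambda: {"total": 0, "trigger": 0, "mention": 0, "citation": 0})
    for row in rows:
        c = counts[row["engine"]]
        c["total"] += 1
        if is_yes(row["trigger"]):
            c["trigger"] += 1
            c["mention"] += is_yes(row["mention"])
            c["citation"] += is_yes(row["citation"])

    rates = {}
    for engine, c in counts.items():
        triggered = c["trigger"]
        rates[engine] = {
            "trigger": c["trigger"] / c["total"],
            "mention": c["mention"] / triggered if triggered else 0.0,
            "citation": c["citation"] / triggered if triggered else 0.0,
        }
    return rates


rates = signal_rates(csv.DictReader(io.StringIO(SAMPLE_LOG)))
for engine, r in sorted(rates.items()):
    print(engine, {k: f"{v:.0%}" for k, v in r.items()})
```

To use it on a real export, replace `io.StringIO(SAMPLE_LOG)` with an opened file handle for your CSV.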

🛠️ Engineer’s perspective

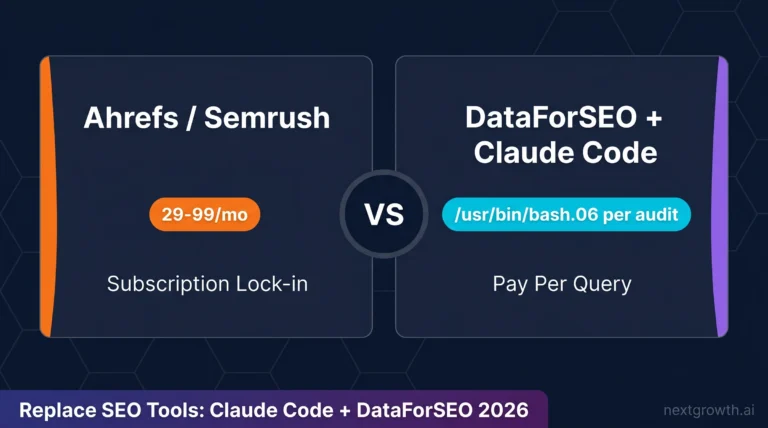

If you outgrow manual tracking, the cheapest scalable pattern is to log all three signals programmatically: a Python script runs your 30 queries against Perplexity’s API (free tier covers most usage) and a headless Google search via Playwright, then writes Trigger / Mention / Citation booleans plus the cited URL to a Google Sheet. Total infrastructure cost: ~$0/mo for under 1,000 queries/month. The signals you log this way are the same ones paid tools surface; the trade-off is that paid tools (Otterly, Profound, AthenaHQ; see the AI search monitoring tools roundup) handle multi-engine aggregation, prompt rotation, and dashboard visualization for you.
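The core of such a script is the step that turns one engine response into the three booleans. The sketch below covers only that step and leaves out how the response is fetched (API call, headless browser), since that depends on the engine. The function name `detect_signals`, its parameters, and the sample answer and URLs are all hypothetical; it assumes you already have the answer text and a list of cited URLs in hand.

```python
import re
from urllib.parse import urlparse


def detect_signals(answer_text, cited_urls, brand_names, domain):
    """Return Trigger / Mention / Citation flags for one engine response.

    answer_text: the generated answer, or None/"" if no AI answer appeared
    cited_urls:  source URLs shown alongside the answer
    brand_names: brand name plus any aliases to match (case-insensitive)
    domain:      your registrable domain, e.g. "asana.com"
    """
    trigger = bool(answer_text and answer_text.strip())

    # Whole-word match so that "Monday" does not match inside "Mondays".
    mention = False
    if trigger and brand_names:
        pattern = r"\b(?:" + "|".join(re.escape(n) for n in brand_names) + r")\b"
        mention = re.search(pattern, answer_text, re.IGNORECASE) is not None

    # Count the domain itself and any subdomain (www., blog., ...) as a citation.
    domain = domain.lower()
    cited_pages = []
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            cited_pages.append(url)

    return {
        "trigger": trigger,
        "mention": mention,
        "citation": bool(cited_pages),
        "cited_pages": cited_pages,
    }


# Hypothetical response for illustration only.
result = detect_signals(
    answer_text="Popular project management tools include Asana, Trello, and Monday.",
    cited_urls=["https://www.trello.com/guide", "https://asana.com/resources/overview"],
    brand_names=["Asana"],
    domain="asana.com",
)
print(result)
```

Plain string matching will miss misspellings and paraphrased references to the brand, so spot-check a sample of answers by hand before trusting the Mention numbers.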

When you outgrow the spreadsheet, paid tools at $24-99/mo handle continuous monitoring across 4-6 AI engines. The strategic playbook for what to do with the data is in our AI search visibility guide; this article was about defining the metrics themselves.

FAQ

What is the difference between AI search visibility metrics and traditional SEO ranking?

Traditional SEO measures one variable (rank position on the SERP); AI search visibility measures three independent variables (Trigger, Mention, Citation). The two practices overlap on technical foundation but diverge on what you optimize and how you measure. A brand can rank #1 in Google blue-link results and still have 0% Citation rate in Perplexity for the same query because Perplexity weights off-site brand authority differently than Google’s traditional algorithm.

Is Citation always more valuable than Mention?

Not always. Citation drives clicks; Mention drives brand recall. For a category leader whose strategic goal is staying top-of-mind, Mention can be more valuable than Citation because every conversation about the category names them. For a challenger trying to capture click-through, Citation is more valuable. The right answer depends on your business goal.

How often should I measure AI search visibility?

Quarterly minimum for the manual baseline; monthly for continuous tracking if you’re investing in AI search optimization actively. AI engines update grounding models every 2-4 months, so anything less frequent than quarterly misses algorithm shifts.

Why do I see different Mention/Citation results in ChatGPT vs Perplexity for the same query?

The two engines weight signals differently. Perplexity surfaces source citations prominently (numbered footnotes); ChatGPT mentions brands without always linking out. The same brand can have high Citation in Perplexity and high Mention in ChatGPT for the same query because the engines surface different layers of the same answer. Track both per-engine, not as a combined metric.

What is “share of voice” in AI search and how does it relate to these three signals?

Share of voice = your Mention rate divided by total Mention rate across your category. If “Asana” is mentioned in 60% of project-management queries and the total Mention rate across all category brands sums to 200% (multi-brand mentions per answer), Asana’s share of voice is 30%. Share of voice is a derived metric on top of Mention; it doesn’t add a new measurement but reframes Mention for competitive positioning.
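The share-of-voice arithmetic above can be written as a short function. In this sketch only Asana’s 60% and the 200% total come from the example; the per-competitor rates are invented so that the total works out, and the function name is an assumption.

```python
def share_of_voice(mention_rates, brand):
    """Brand's Mention rate divided by the summed Mention rate of all category brands."""
    total = sum(mention_rates.values())
    if total == 0:
        return 0.0
    return mention_rates[brand] / total


# Asana's 0.60 and the 2.00 total match the example above;
# the other brands' rates are hypothetical fillers.
category_mention_rates = {
    "Asana": 0.60,
    "Trello": 0.50,
    "Monday": 0.45,
    "ClickUp": 0.45,
}

sov = share_of_voice(category_mention_rates, "Asana")
print(f"Asana share of voice: {sov:.0%}")
```

Because answers often name several brands at once, the summed Mention rate can exceed 100%, which is why the division is by the category total and not by the number of queries.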

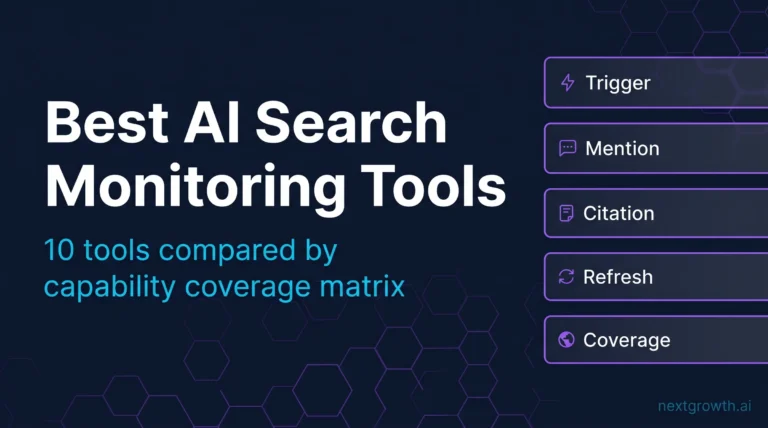

For the strategic playbook that uses these three metrics, see how to improve brand visibility in AI search engines. For tool selection to track them continuously, see 10 best AI search monitoring tools compared.