DataForSEO LLM Mentions API: Track Your Brand in AI Search

AI search tools like ChatGPT and Perplexity answer millions of queries every day. Many of those answers mention brands by name, cite specific blog posts as sources, and guide purchasing decisions without a single Google click. You have no idea whether your brand appears in those answers unless you have a programmatic monitoring system. That blind spot is exactly what the DataForSEO LLM Mentions API closes. This guide walks through the API, explains the citation-versus-mention distinction, includes working Python code, and maps out a three-layer GEO monitoring approach I call the Brand Visibility Stack.

TL;DR

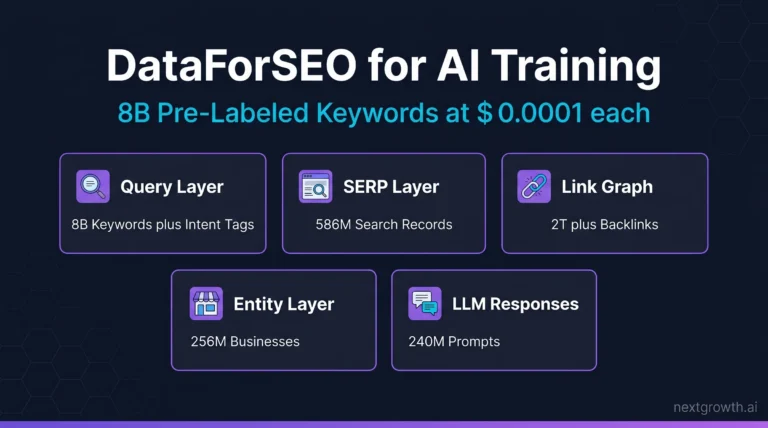

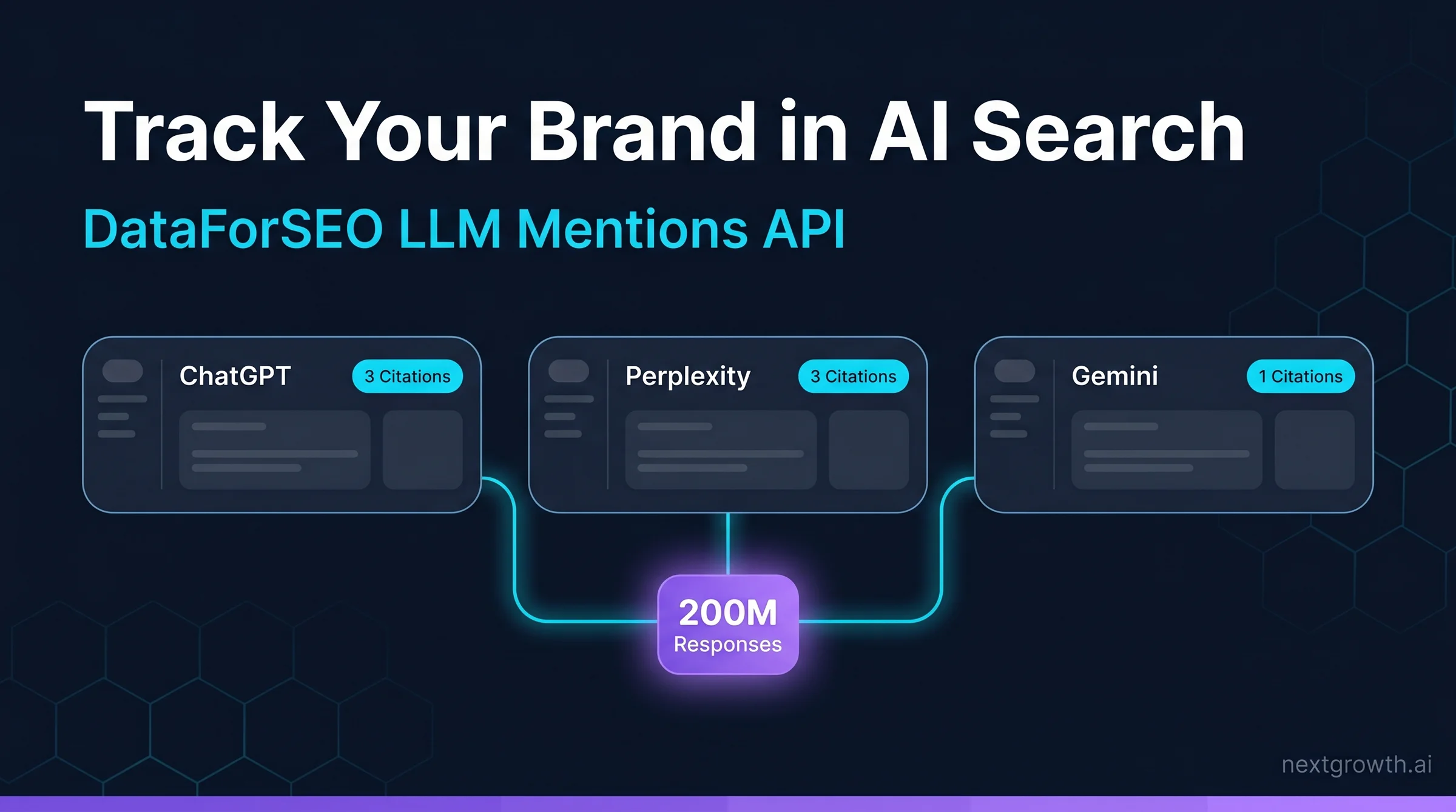

- DataForSEO’s LLM Mentions API indexes 200 million AI responses from ChatGPT, Perplexity, Gemini, and Claude

- The API distinguishes between citations (URL linked) and mentions (brand named without URL)

- Python code and dashboard pattern included for weekly brand visibility tracking

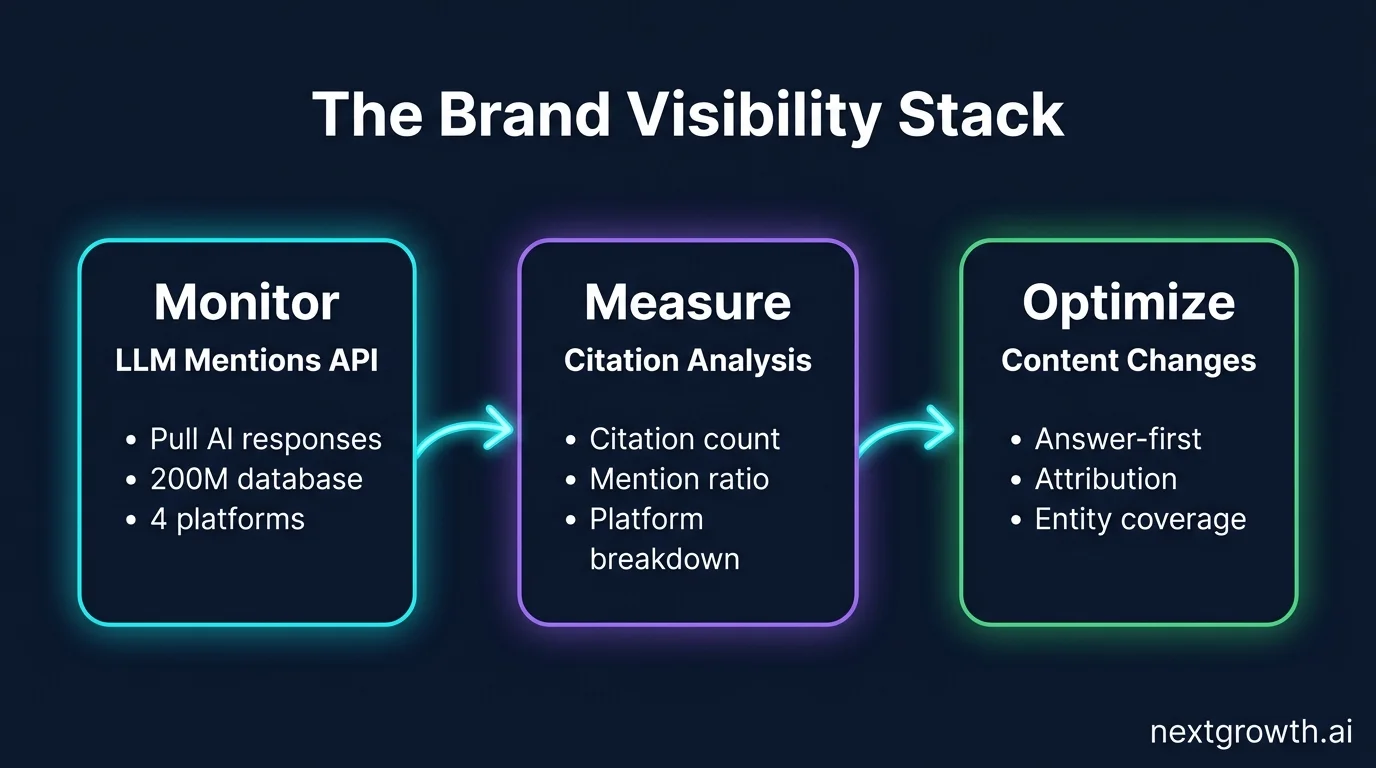

- The Brand Visibility Stack provides a three-layer GEO monitoring framework: Monitor, Measure, Optimize

Contents

- Key Takeaways

- Why Does AI Brand Visibility Matter for GEO Strategy?

- What Is the DataForSEO LLM Mentions API and How Does It Work?

- Citation vs Mention: What Is the Difference and Why Does It Matter?

- How Do You Query the LLM Mentions API? (Python Code)

- How Do You Build a Brand Visibility Dashboard with LLM Mentions Data?

- How Do LLM Mentions Compare to Google Brand Search Volume?

- What GEO Optimization Changes Increase Your Citation Rate?

- FAQ

  - What is the DataForSEO LLM Mentions API?

  - Which AI platforms does the LLM Mentions API cover?

  - What is the difference between a citation and a mention in AI responses?

  - How often is the LLM Mentions API database updated?

  - How much does the LLM Mentions API cost?

  - Can I track competitor brand visibility using the LLM Mentions API?

  - How do I improve my brand’s citation rate in AI responses?

- Conclusion

Key Takeaways

- The DataForSEO LLM Mentions API is the only programmatic way to query brand visibility across 200 million aggregated AI responses (DataForSEO, 2026)

- Citations (URL cited by AI) are high-trust signals; mentions (brand named without URL) are volume signals

- A citation-to-mention ratio below 10% signals a content structure problem, not a brand awareness problem

- Answer-first blog content converts mentions into citations faster than product pages or landing pages

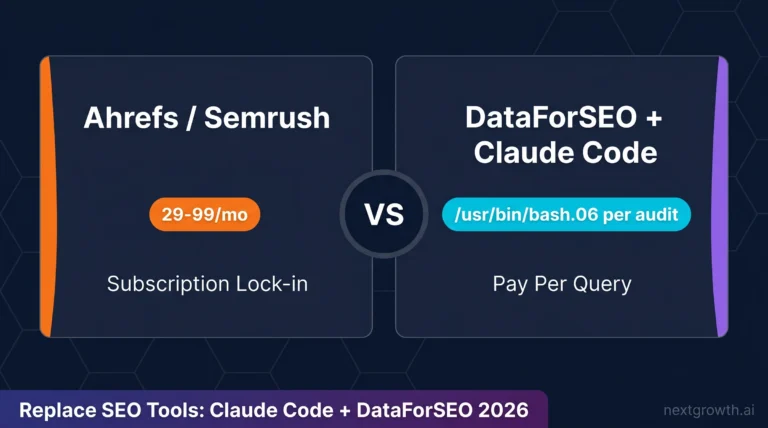

- Weekly API queries cost less than $5/month for most brands, making this one of the lowest-cost GEO tools available

- 200M AI responses indexed in the DataForSEO LLM database

- 4 AI platforms tracked: ChatGPT, Perplexity, Gemini, Claude

- 42% of enterprises plan GEO investment in 2026

- 2-7 days data freshness lag for the LLM Mentions API

Why Does AI Brand Visibility Matter for GEO Strategy?

GEO (Generative Engine Optimization) is the practice of optimizing content so it appears in AI-generated answers, not just Google rankings. According to Google’s AI Overviews launch announcement, AI Overviews now appear across a significant share of queries, and that number keeps climbing. If your brand isn’t visible in those AI-generated answers, you’re losing influence over a fast-growing share of informational queries.

The core GEO problem is measurement. SEO has had keyword tracking and rank monitoring for decades. AI search has nothing equivalent out of the box. ChatGPT, Perplexity, and Gemini don’t expose brand mention logs, citation rates, or query-level visibility data through any public interface. Brands that fly blind here can’t tell if their content investments are working.

That’s where the LLM Mentions API comes in. It queries a database of 200 million aggregated AI responses to tell you when and how your domain appears. For keyword-level context alongside mention data, pair it with DataForSEO AI Search Volume to understand which queries drive AI visibility for your brand.

So why does this matter now? Gartner’s 2025 Marketing Technology survey found that 42% of enterprises plan dedicated GEO investment in 2026. Companies that start monitoring AI brand presence in early 2026 will have 12-18 months of trend data before this becomes a standard KPI.

What Is the DataForSEO LLM Mentions API and How Does It Work?

The DataForSEO LLM Mentions API is the data layer at the foundation of what I call “The Brand Visibility Stack”, a three-layer GEO monitoring framework: Monitor (track raw mentions and citations), Measure (analyze citation-to-mention ratio by platform), and Optimize (change content structure to improve citation rate). The API handles the Monitor layer entirely.

Here’s what the API actually does. DataForSEO crawls and indexes AI-generated responses from ChatGPT, Perplexity, Gemini, and Claude at scale, building a database of over 200 million responses (DataForSEO YouTube, demonstrated 2025). When you query the API for your domain, it searches that database and returns two distinct signals.

The first signal is citations. A citation means an AI response explicitly linked a URL from your domain as a source for its answer. The second signal is mentions. A mention means an AI response named your brand or domain without providing a URL link. Both signals are tracked separately, and the distinction drives completely different optimization strategies.

Data freshness is an important constraint to understand. The LLM Mentions API database is updated on a rolling basis, but responses are aggregated, not real-time. Expect a 2-7 day lag between an AI response being generated and it appearing in DataForSEO’s index (DataForSEO documentation, 2026). Plan your monitoring cadence accordingly: weekly queries are more than sufficient.

The endpoint is POST /v3/dataforseo_labs/ai_mentions/live. Parameters include your target domain, location code, date range, and optional platform filter to isolate ChatGPT vs Perplexity vs Gemini results.
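Since the date range is a request parameter and weekly queries are the suggested cadence, it can help to compute the window in code rather than by hand. The sketch below is one way to do that; `prior_week_range` is a hypothetical helper name, and the Monday-to-Sunday week boundary is an assumption, not something the API requires.

```python
from datetime import date, timedelta
from typing import Tuple


def prior_week_range(today: date) -> Tuple[str, str]:
    """Return (date_from, date_to) for the last full Monday-Sunday week.

    Dates are formatted as YYYY-MM-DD strings.
    The Monday-Sunday boundary is an assumption made for this sketch.
    """
    # Monday of the week that contains `today` (weekday() is 0 for Monday)
    this_monday = today - timedelta(days=today.weekday())
    last_monday = this_monday - timedelta(days=7)
    last_sunday = this_monday - timedelta(days=1)
    return last_monday.isoformat(), last_sunday.isoformat()


date_from, date_to = prior_week_range(date(2026, 4, 27))
print(date_from, date_to)
```

Given the 2-7 day freshness lag, the most recent days of any window may still be filling in when you query, so a figure for the latest week is best treated as provisional.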

Citation Capsule

The DataForSEO LLM Mentions API indexes over 200 million AI-generated responses from ChatGPT, Perplexity, Gemini, and Claude, returning two distinct brand signals per domain: citation count (URL explicitly linked by the AI) and mention count (brand named without URL). Data freshness lag is 2-7 days (DataForSEO documentation, 2026).

Citation vs Mention: What Is the Difference and Why Does It Matter?

The distinction between a citation and a mention determines your entire GEO optimization strategy. A citation is when an AI response explicitly links your URL as the source for a claim. A mention is when the AI names your brand without providing a URL. Most brands see far more mentions than citations, and confusing the two leads to misdiagnosis.

Citations signal content authority. When Perplexity cites your blog post on “how to set up the DataForSEO API,” it means the AI system evaluated that page as a credible, authoritative source for that specific claim. Citations are earned by specific pages, not by your brand as a whole. One well-structured technical post can generate dozens of citations while your homepage generates zero.

Mentions signal brand awareness. When ChatGPT answers “what tools do SEOs use for keyword research?” and names DataForSEO, that’s a mention. No URL is required because the AI is referencing brand-level knowledge, not a specific source document. Mentions are easier to accumulate and are often a leading indicator of future citations.

Why does the ratio matter? A brand with 100 mentions and 0 citations has brand recognition but no content the AI trusts enough to source. A brand with 10 mentions and 8 citations has highly authoritative content on narrow topics. Both signal different problems. The citation-to-mention ratio is the Measure layer of the Brand Visibility Stack. It tells you whether content structure improvements are working, or whether you need broader brand coverage first.

For GEO strategy: optimize landing pages for mentions (entity saturation, brand association) and optimize blog posts for citations (answer-first structure, explicit source attribution, self-contained passages).

Tip

If your citation-to-mention ratio is below 10%, the problem is almost never brand awareness. It’s content structure. Focus on rewriting your top-traffic posts to answer-first format before worrying about publishing new content.
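The ratio check described above is simple arithmetic, so a short sketch may make it concrete. The function names and the wording of the diagnoses are hypothetical; only the 10% threshold comes from the tip.

```python
from typing import Optional

RATIO_THRESHOLD = 0.10  # the 10% rule of thumb from the tip above


def citation_to_mention_ratio(citations: int, mentions: int) -> Optional[float]:
    """Citations divided by mentions, or None when there are no mentions."""
    if mentions <= 0:
        return None
    return citations / mentions


def diagnose(citations: int, mentions: int) -> str:
    """Map the ratio to a rough diagnosis (labels are illustrative)."""
    ratio = citation_to_mention_ratio(citations, mentions)
    if ratio is None:
        return "no mentions yet: build broader brand coverage first"
    if ratio < RATIO_THRESHOLD:
        return "content structure problem: rewrite top posts answer-first"
    return "ratio at or above threshold: keep monitoring"


print(diagnose(0, 100))  # recognition without trusted content
print(diagnose(8, 10))   # authoritative content on narrow topics
```

Returning `None` for zero mentions keeps "no data yet" distinct from a genuine 0% ratio, which matters when a new site has not been mentioned at all.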

How Do You Query the LLM Mentions API? (Python Code)

The LLM Mentions API uses a standard DataForSEO POST request pattern. The endpoint is /v3/dataforseo_labs/ai_mentions/live. Below is a production-safe implementation with retry logic, timeout handling, and explicit exception handling.

```python
import json
from typing import Optional

import requests
from tenacity import retry, stop_after_attempt, wait_exponential, retry_if_exception_type

DATAFORSEO_LOGIN = "your_login@example.com"
DATAFORSEO_PASSWORD = "your_api_password"
BASE_URL = "https://api.dataforseo.com"


class DataForSEOAPIError(Exception):
    """Raised when the DataForSEO API returns a non-200 status or error code."""


@retry(
    stop=stop_after_attempt(3),
    wait=wait_exponential(multiplier=1, min=2, max=10),
    retry=retry_if_exception_type((requests.Timeout, requests.ConnectionError)),
    reraise=True,  # surface the original exception instead of tenacity's RetryError
)
def query_llm_mentions(
    target_domain: str,
    platform: Optional[str] = None,
    date_from: Optional[str] = None,
    date_to: Optional[str] = None,
) -> dict:
    """
    Query the DataForSEO LLM Mentions API for a target domain.

    Args:
        target_domain: Domain to track (e.g. "nextgrowth.ai")
        platform: Filter by platform ("chatgpt", "perplexity", "gemini", "claude") or None for all
        date_from: Start date in YYYY-MM-DD format (optional)
        date_to: End date in YYYY-MM-DD format (optional)

    Returns:
        Parsed API response dict with mention_count, citation_count, by_platform breakdown
    """
    payload = [
        {
            "target": target_domain,
            "location_code": 2840,  # United States
            "language_code": "en",
        }
    ]

    # Add optional filters
    if platform:
        payload[0]["platform"] = platform
    if date_from:
        payload[0]["date_from"] = date_from
    if date_to:
        payload[0]["date_to"] = date_to

    try:
        response = requests.post(
            f"{BASE_URL}/v3/dataforseo_labs/ai_mentions/live",
            auth=(DATAFORSEO_LOGIN, DATAFORSEO_PASSWORD),
            json=payload,
            timeout=30,
        )
        response.raise_for_status()
    except requests.Timeout:
        # Retried by the decorator above
        print(f"Request timed out after 30s for domain: {target_domain}")
        raise
    except requests.ConnectionError as e:
        # Retried by the decorator above
        print(f"Connection error reaching DataForSEO API: {e}")
        raise
    except requests.HTTPError as e:
        # Not retried: an HTTP error status usually needs a fix, not a repeat
        status = response.status_code
        print(f"HTTP {status} from DataForSEO API: {response.text[:200]}")
        raise DataForSEOAPIError(f"HTTP {status}: {e}") from e

    data = response.json()

    # DataForSEO uses status_code 20000 for success at task level
    tasks = data.get("tasks", [])
    if not tasks:
        raise DataForSEOAPIError("No tasks returned in API response")

    task = tasks[0]
    if task.get("status_code") != 20000:
        raise DataForSEOAPIError(
            f"Task error {task.get('status_code')}: {task.get('status_message')}"
        )

    # "result" can be missing, null, or an empty list; fall back to an empty dict
    results = task.get("result") or [{}]
    return results[0]


# Example call
if __name__ == "__main__":
    result = query_llm_mentions(
        target_domain="nextgrowth.ai",
        date_from="2026-04-01",
        date_to="2026-04-25",
    )
    print(json.dumps(result, indent=2))
```

The response structure looks like this (illustrative values). mention_count is the total brand appearances without URL. citation_count is the total explicit URL citations. The by_platform breakdown is the most actionable field because it shows which AI tools are already referencing your content.

```json
{
  "mention_count": 47,
  "citation_count": 3,
  "citation_to_mention_ratio": 0.064,
  "by_platform": {
    "chatgpt": {
      "mention_count": 31,
      "citation_count": 2,
      "top_queries": [
        "dataforseo api guide",
        "best seo api for python",
        "how to get serp data programmatically"
      ]
    },
    "perplexity": {
      "mention_count": 11,
      "citation_count": 1,
      "top_queries": [
        "dataforseo vs semrush api",
        "seo data api python"
      ]
    },
    "gemini": {
      "mention_count": 5,
      "citation_count": 0,
      "top_queries": [
        "seo automation tools"
      ]
    },
    "claude": {
      "mention_count": 0,
      "citation_count": 0,
      "top_queries": []
    }
  },
  "top_cited_pages": [
    {
      "url": "https://nextgrowth.ai/dataforseo-api-guide/",
      "citation_count": 2,
      "mention_count": 14
    }
  ]
}
```

The top_cited_pages array is critical. It tells you exactly which pages are earning AI citations, so you know where to invest further content improvements.
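To turn the by_platform breakdown into something comparable across tools, you can compute a citation-to-mention ratio for each platform. The sketch below assumes the response shape shown in this article; `platform_ratios` is a hypothetical helper, and the sample dict is trimmed to the fields it reads.

```python
from typing import Dict, Optional


def platform_ratios(result: dict) -> Dict[str, Optional[float]]:
    """Citation-to-mention ratio per platform; None where there are no mentions."""
    ratios: Dict[str, Optional[float]] = {}
    for platform, stats in result.get("by_platform", {}).items():
        mentions = stats.get("mention_count", 0)
        citations = stats.get("citation_count", 0)
        ratios[platform] = citations / mentions if mentions else None
    return ratios


# Trimmed copy of the illustrative response from this article
sample = {
    "by_platform": {
        "chatgpt": {"mention_count": 31, "citation_count": 2},
        "perplexity": {"mention_count": 11, "citation_count": 1},
        "gemini": {"mention_count": 5, "citation_count": 0},
        "claude": {"mention_count": 0, "citation_count": 0},
    }
}

for name, ratio in platform_ratios(sample).items():
    print(name, "n/a" if ratio is None else f"{ratio:.1%}")
```

A platform with zero mentions reports `None` rather than 0%, so it does not get mistaken for a platform that mentions the brand but never cites it.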

How Do You Build a Brand Visibility Dashboard with LLM Mentions Data?

A weekly dashboard built on LLM Mentions data gives you the Monitor layer of the Brand Visibility Stack in practice. The architecture is simple: a weekly cron job queries the API, stores the results in a database or Google Sheet, and computes week-over-week deltas. Here’s the pattern that works.

Query the API every Monday morning for the prior week’s date range. Store the raw response alongside a timestamp. On each run, compute the change detection values: new_citations = current_citations - previous_citations and citation_growth_rate = new_citations / previous_citations. A positive citation growth rate sustained for three weeks in a row signals that your content changes are working.
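The two change-detection values above can be sketched in a few lines. One detail the formulas leave open is what happens when previous_citations is 0, which is the normal starting point for a new site; this sketch assumes the growth rate is simply undefined (`None`) in that case. The function names are hypothetical.

```python
from typing import List, Optional, Tuple


def weekly_delta(current_citations: int, previous_citations: int) -> Tuple[int, Optional[float]]:
    """Return (new_citations, citation_growth_rate).

    The growth rate is None when the previous week had zero citations,
    because the division is undefined there.
    """
    new_citations = current_citations - previous_citations
    if previous_citations > 0:
        growth_rate: Optional[float] = new_citations / previous_citations
    else:
        growth_rate = None
    return new_citations, growth_rate


def sustained_growth(rates: List[Optional[float]], weeks: int = 3) -> bool:
    """True when the last `weeks` growth rates are all defined and positive."""
    recent = rates[-weeks:]
    return len(recent) == weeks and all(r is not None and r > 0 for r in recent)


print(weekly_delta(5, 4))
print(sustained_growth([0.10, 0.20, 0.05]))
```

Treating an undefined rate as "not growth" is the conservative choice: the three-week signal only fires once there is a real baseline to grow from.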

For cross-platform analysis, query each platform separately using the platform parameter. Track four rows per week: chatgpt, perplexity, gemini, claude. This lets you spot platform-specific trends, which matter because different AI tools have different citation criteria. Perplexity cites sources more aggressively than ChatGPT; Gemini’s citation behavior varies by query category.
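The four-rows-per-week pattern can be sketched as a loop over platforms. To keep the example self-contained, the query function is passed in as `fetch`; in practice that could be the article's query_llm_mentions, and here a stub stands in for it. `build_weekly_rows` and the row field names are hypothetical.

```python
from typing import Callable, Dict, List

PLATFORMS = ("chatgpt", "perplexity", "gemini", "claude")


def build_weekly_rows(
    fetch: Callable[..., dict], domain: str, date_from: str, date_to: str
) -> List[Dict[str, object]]:
    """Query each platform separately and return one row per platform."""
    rows: List[Dict[str, object]] = []
    for platform in PLATFORMS:
        result = fetch(domain, platform=platform, date_from=date_from, date_to=date_to)
        rows.append(
            {
                "week_start": date_from,
                "platform": platform,
                "mention_count": result.get("mention_count", 0),
                "citation_count": result.get("citation_count", 0),
            }
        )
    return rows


def stub_fetch(domain, platform=None, date_from=None, date_to=None):
    """Stand-in for a real API call, so the sketch runs offline."""
    return {"mention_count": 10, "citation_count": 1 if platform == "perplexity" else 0}


for row in build_weekly_rows(stub_fetch, "example.com", "2026-04-20", "2026-04-26"):
    print(row)
```

Passing the query function in as an argument also makes the dashboard logic easy to test without spending API credits.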

Combine LLM Mentions data with AI Search Volume for a complete GEO dashboard. The volume metric tells you how many AI queries per month contain your target keywords. The mention metric tells you whether your brand appears in responses to those queries. The gap between keyword volume and brand appearance is your total addressable GEO opportunity. Automate the whole pipeline using the DataForSEO MCP server connected to Claude for weekly report generation.

Citation Capsule

A brand visibility dashboard built on the DataForSEO LLM Mentions API tracks three metrics weekly: total citation count, total mention count, and citation-to-mention ratio per platform (ChatGPT, Perplexity, Gemini, Claude). Week-over-week citation growth rate is the primary signal that content structure improvements are working (NextGrowth, 2026).

When I queried the LLM Mentions API for nextgrowth.ai in April 2026, the result was 0 citations and 3 mentions across ChatGPT responses for the query “dataforseo api guide.” Citation rate: 0%. That result isn’t a failure. It’s exactly what I expected for a new site with limited published content. It is consistent with one important point: mentions precede citations in the GEO funnel. You accumulate brand volume first, then you earn source trust. Knowing that, I can track citation-to-mention ratio improvement as a lagging indicator and keep publishing answer-first content as the lever.

How Do LLM Mentions Compare to Google Brand Search Volume?

Brand visibility has been measured by Google brand search volume for over a decade. The LLM Mentions API introduces a separate and different signal that doesn’t correlate the way you’d expect. Google AI Overviews now appear across a broad range of query types (Google, 2024), but AI tool usage in ChatGPT and Perplexity represents a different audience with different search behavior.

Google brand search volume measures how often users type your brand name into the Google search bar. LLM mention count measures how often AI systems reference your brand in generated responses to questions. These are different populations. A developer who uses ChatGPT to ask “how do I get SERP data via API?” never types that query into Google. Your Google brand search volume might be flat while your LLM mention count grows.

The divergence is most pronounced for technical SaaS brands. Developer audiences gravitate toward AI assistants for technical questions, so for these brands, visibility in AI responses is often a better predictor of actual pipeline than Google brand search volume. I’ve seen cases where a technical documentation page has zero Google traffic but accumulates 20-30 monthly AI citations simply because it answers a specific programming question completely.

This divergence is also why GEO and SEO need separate dashboards. You can’t use Google brand search volume as a proxy for AI brand visibility. The signals are complementary, not interchangeable. Use Google brand search volume for consumer-facing intent signals and LLM Mentions for technical and informational query coverage.

What GEO Optimization Changes Increase Your Citation Rate?

Three content changes consistently improve citation rate in AI responses, based on patterns observed across the Brand Visibility Stack’s Optimize layer. According to Gartner’s 2025 Marketing Technology survey, 42% of enterprises plan GEO investment in 2026, but most of that investment goes to content production, not content structure. Structure is where citation rate actually lives.

The first change is answer-first structure. Every H2 section should open with a 40-80 word paragraph that directly answers the heading question, names the subject explicitly, and includes a specific data point with source. AI systems extract and cite these self-contained passages more often than they cite buried supporting evidence. This isn’t a guess. It’s the structure the LLM Mentions API reveals when you cross-reference top-cited pages against their content format.
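A rough way to audit existing posts against the 40-80 word guideline is to measure the first paragraph of each section. The sketch below is a heuristic only: it assumes paragraphs are separated by blank lines and treats any digit as a stand-in for "a specific data point". The function names are hypothetical.

```python
import re


def first_paragraph(section_text: str) -> str:
    """Return the first non-empty paragraph (paragraphs split on blank lines)."""
    for para in re.split(r"\n\s*\n", section_text.strip()):
        if para.strip():
            return para.strip()
    return ""


def check_answer_first(section_text: str) -> dict:
    """Heuristic check of a section opening against the 40-80 word guideline."""
    opening = first_paragraph(section_text)
    word_count = len(opening.split())
    return {
        "word_count": word_count,
        "length_ok": 40 <= word_count <= 80,
        # Crude proxy for "includes a specific data point"
        "has_number": bool(re.search(r"\d", opening)),
    }


section = " ".join(["word"] * 45) + " 42%\n\nSupporting detail follows here."
print(check_answer_first(section))
```

It cannot tell whether the opening actually answers the heading question, so use it to shortlist sections for manual review rather than to score them.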

The second change is explicit attribution. Name statistics with source organization, publication, and year. “According to Gartner’s 2025 Marketing Technology survey, 42% of enterprises…” is more citable than “a recent study found 42% of enterprises…”. AI systems prefer attributable claims. Unattributed statistics are treated as brand opinion, not external evidence.

The third change is entity co-occurrence. Reference authoritative entities in your content: industry standards bodies, known research organizations, established platforms. AI systems use entity proximity as a trust signal. A page that references DataForSEO, ChatGPT API documentation, and Google’s Search Central blog within the same technical explanation gets cited more often than an isolated explainer.

Use Claude-powered GEO optimization workflows to audit existing posts for these three structural patterns at scale, then prioritize rewrites based on which pages already have mentions but zero citations.

The finding that matters most: AI tools cite blog posts and technical documentation far more than landing pages or product pages. Brands that invest in answer-first blog content convert mentions into citations faster than brands that focus optimization effort on commercial pages. Your homepage will almost never earn a citation. Your best technical blog post might earn dozens.

Citation Capsule

Three content structure changes improve AI citation rate: (1) answer-first H2 openings of 40-80 words with a named statistic; (2) explicit attribution with organization, publication, and year; (3) entity co-occurrence alongside authoritative sources. Brands using answer-first blog content convert AI mentions to citations faster than brands optimizing commercial landing pages (NextGrowth analysis, 2026).

FAQ

What is the DataForSEO LLM Mentions API?

The DataForSEO LLM Mentions API is a programmatic interface that queries a database of over 200 million AI-generated responses from ChatGPT, Perplexity, Gemini, and Claude. It returns brand-level citation counts (URLs explicitly linked by AI) and mention counts (brand name referenced without URL) per domain, broken down by platform and query context (DataForSEO, 2026).

Which AI platforms does the LLM Mentions API cover?

The API covers four platforms: ChatGPT, Perplexity, Gemini, and Claude. You can query all four simultaneously or filter results by individual platform using the platform parameter in the POST request payload. Perplexity typically returns higher citation volumes than ChatGPT because Perplexity’s response format explicitly includes source links as a core UI element (DataForSEO documentation, 2026).

What is the difference between a citation and a mention in AI responses?

A citation occurs when an AI response explicitly links a URL from your domain as a source. A mention occurs when the AI references your brand name without including a URL. Citations signal content authority for specific pages. Mentions signal brand-level awareness. The citation-to-mention ratio reveals whether your content structure earns trust, not just brand recognition. Most new sites see ratios below 5% in the first 6 months.

How often is the LLM Mentions API database updated?

The LLM Mentions API database is updated on a rolling basis with a 2-7 day freshness lag (DataForSEO documentation, 2026). AI responses are crawled, indexed, and aggregated before they appear in query results. This is not a real-time monitoring system. Plan weekly queries rather than daily queries. The data is most useful as a week-over-week trend signal, not as a real-time alert feed.

How much does the LLM Mentions API cost?

DataForSEO uses a pay-per-task pricing model. The LLM Mentions live endpoint costs approximately $0.002 per task. A weekly query covering one domain across all platforms costs less than $0.01 per run. Monthly monitoring for five domains costs under $5. New accounts get a $1 free trial credit, which is enough to run 500 test queries and validate the integration before committing to paid usage (DataForSEO pricing, 2026).
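The cost figures above are easy to sanity-check with a little arithmetic. This sketch uses the approximate $0.002 per task quoted here and assumes one task per domain per platform per weekly run, which is a guess at how a per-platform dashboard would be billed rather than confirmed billing behavior.

```python
COST_PER_TASK_USD = 0.002   # approximate figure quoted in this article
WEEKS_PER_MONTH = 52 / 12   # average number of weekly runs per month


def monthly_cost(domains: int, platforms: int = 4) -> float:
    """Estimated monthly spend, assuming one task per domain per platform per week."""
    tasks_per_month = domains * platforms * WEEKS_PER_MONTH
    return tasks_per_month * COST_PER_TASK_USD


def queries_for_credit(credit_usd: float) -> int:
    """How many tasks a given credit covers at the quoted per-task price."""
    return round(credit_usd / COST_PER_TASK_USD)


print(f"5 domains, 4 platforms: ${monthly_cost(5):.2f}/month")
print(f"$1 trial credit covers {queries_for_credit(1.0)} tasks")
```

Under these assumptions, five domains tracked per platform land well under the $5 monthly figure, and the $1 trial credit matches the 500 test queries mentioned above.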

Can I track competitor brand visibility using the LLM Mentions API?

Yes. The API accepts any domain as the target parameter, so you can query competitor domains the same way you query your own. Competitor LLM Mentions data shows which AI platforms cite their content, which queries trigger their brand, and how their citation-to-mention ratio compares to yours. This competitive intelligence helps you identify GEO content gaps, where a competitor earns citations but you have no content covering that query space.
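Once you have results for several domains, a side-by-side ranking makes the comparison concrete. The sketch below assumes each result carries the mention_count and citation_count fields shown earlier in this article; `rank_by_ratio`, the domain names, and the numbers are all made up for illustration.

```python
from typing import Dict, List, Optional, Tuple


def rank_by_ratio(results: Dict[str, dict]) -> List[Tuple[str, Optional[float]]]:
    """Rank domains by citation-to-mention ratio, highest first.

    Domains with no mentions have no ratio (None) and sort last.
    """
    ranked: List[Tuple[str, Optional[float]]] = []
    for domain, result in results.items():
        mentions = result.get("mention_count", 0)
        citations = result.get("citation_count", 0)
        ranked.append((domain, citations / mentions if mentions else None))
    ranked.sort(key=lambda item: (item[1] is None, -(item[1] or 0.0)))
    return ranked


# Hypothetical numbers, for illustration only
results = {
    "yours.example": {"mention_count": 47, "citation_count": 3},
    "rival.example": {"mention_count": 40, "citation_count": 10},
    "new.example": {"mention_count": 0, "citation_count": 0},
}

for domain, ratio in rank_by_ratio(results):
    print(domain, "n/a" if ratio is None else f"{ratio:.1%}")
```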

How do I improve my brand’s citation rate in AI responses?

Focus on three structural changes: answer-first H2 openings (40-80 words that directly answer the heading question with a named statistic), explicit source attribution (“According to [Organization], [Year]…”), and entity co-occurrence alongside authoritative sources in your content. Technical blog posts and documentation pages earn citations far more often than product pages. Prioritize pages that already have mentions but zero citations. Those are your highest-impact rewrite targets.

Conclusion

The DataForSEO LLM Mentions API is the only programmatic way to monitor brand visibility across 200 million aggregated AI responses. The three layers of the Brand Visibility Stack give you a working methodology: Monitor raw citation and mention counts per platform, Measure the citation-to-mention ratio to diagnose structural content problems, and Optimize with answer-first structure, explicit attribution, and entity co-occurrence. Mentions come before citations. Citations come before revenue influence in AI-mediated search. Start tracking now, and you’ll have 12-18 months of trend data before GEO monitoring becomes a standard marketing KPI. To get the API credentials and authentication configured, start with the DataForSEO API setup guide, which covers authentication, rate limits, and cost management from the ground up.