8 GEO Best Practices to Get Cited by ChatGPT, Perplexity & AI Overviews in 2026

Perplexity cites 2.76x more sources per question than ChatGPT, according to Qwairy’s Q3 2025 citation analysis. Your content could be one of those sources — if it’s structured correctly.

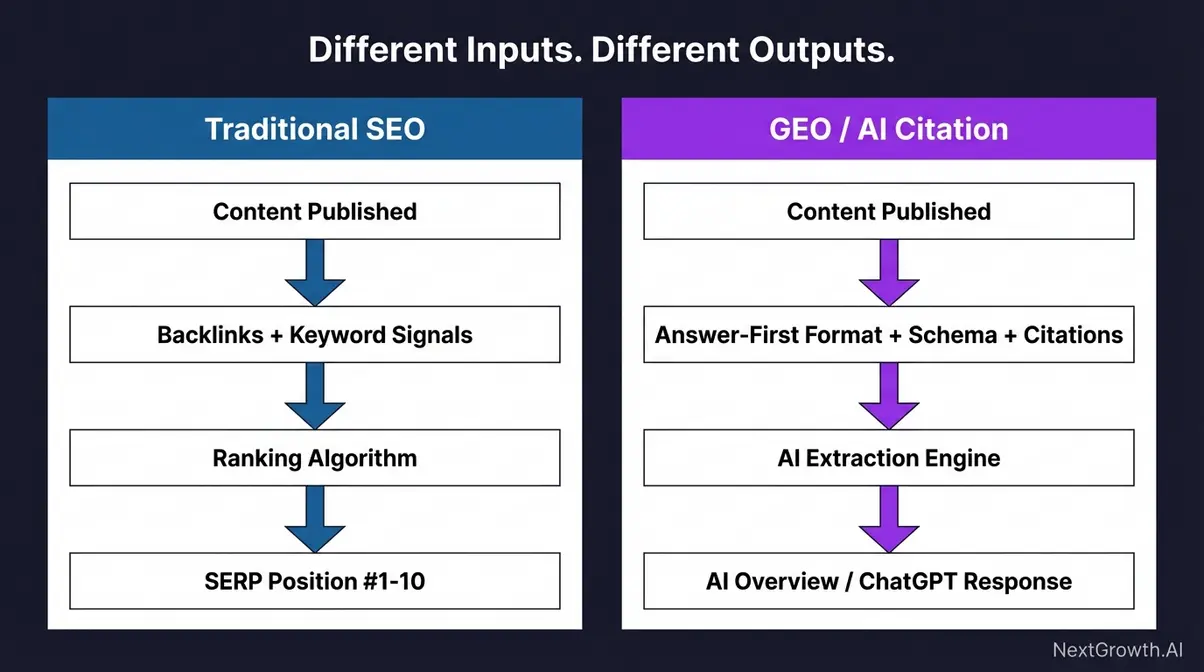

Most websites aren’t built for AI citations. They’re built for ten blue links. That means they’re invisible to the AI search engines now handling a growing share of queries. Gartner’s 2024 prediction that traditional search engine volume would drop 25% by 2026 is playing out right now: AI answer engines are capturing queries that once drove clicks.

Generative Engine Optimization — GEO — is the practice of structuring content so AI systems can find, understand, and cite it. Think of it as what SEO was in 2010: early, messy, and disproportionately rewarding for sites that figure it out first.

Here are the 8 practices that make the difference:

- Write AI-citable passages

- Use stat + consequence pairings

- Use question-style headings

- Front-load entity and brand definitions

- Allow AI crawlers in robots.txt

- Serve an llms.txt file

- Ensure server-side rendering

- Add structured data (schema markup)

GEO optimization gets your content cited by ChatGPT, Perplexity, and Google AI Overviews. Roughly 25% of Google searches now trigger AI Overviews, and 52% of Americans find AI summaries useful (Pew Research, 2025). These 8 practices — grouped into a four-level Citation-First Hierarchy — work across all three platforms. Structure your content for AI extraction, or watch competitors claim citations you could have earned.

Contents

- What Is GEO and Why Does It Matter in 2026?

- How Do AI Search Engines Choose What to Cite?

- 8 GEO Best Practices That Get You Cited

- Which Practices Matter Most by Platform?

- Common GEO Mistakes That Kill Your Citability

- How Do You Measure GEO Performance?

- Where Do AI Models Find Sources Beyond Your Blog?

- How Does SEVOsmith Automate All 8 GEO Practices?

- What Can’t Be Automated in GEO (And What to Do Instead)?

- Frequently Asked Questions

- Conclusion: GEO Is Where SEO Was in 2010

What Is GEO and Why Does It Matter in 2026?

Fifty-two percent of Americans say they find AI-generated summaries in search results at least somewhat useful (Pew Research, 2025). That audience is growing fast. GEO is how you make sure your content is the one doing the answering — not your competitor’s.

GEO means structuring your content so AI-powered search tools can pull out key passages and cite your site. ChatGPT, Perplexity, Google AI Overviews — they all work this way. It’s not about ranking in a list of blue links anymore. It’s about being quoted inside the AI answer itself.

Researchers at Princeton University formally established GEO as a field in their 2024 paper, demonstrating that specific content optimization techniques could increase visibility in generative engine results by up to 40% (Princeton University, 2024). That study put academic rigor behind what practitioners were already noticing: AI search engines don’t read pages the way humans do.

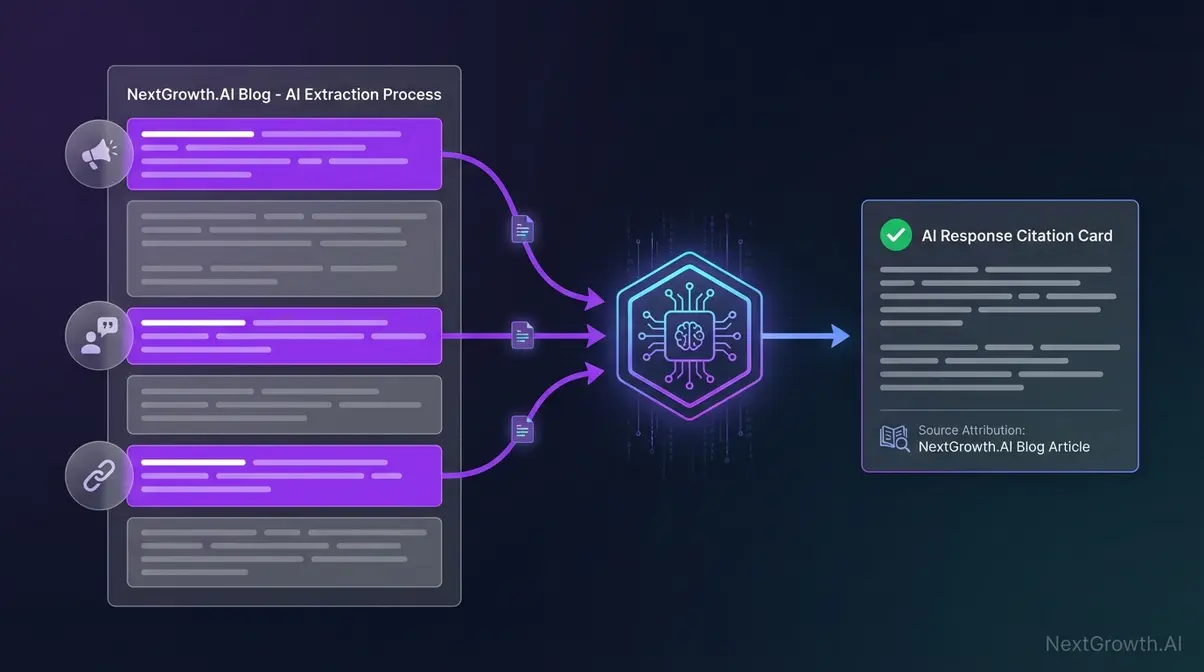

Here’s how it works in practice. A user asks a question. The AI engine scans the web for matching pages. It pulls out passages that answer the query. Then it builds a response and links back to the sources it used.

The key part: AI scans for short, factual passages it can quote on their own. If your content reads as one long flow without standalone statements, AI can’t extract anything useful. Even great writing gets skipped when there’s nothing clean to pull.

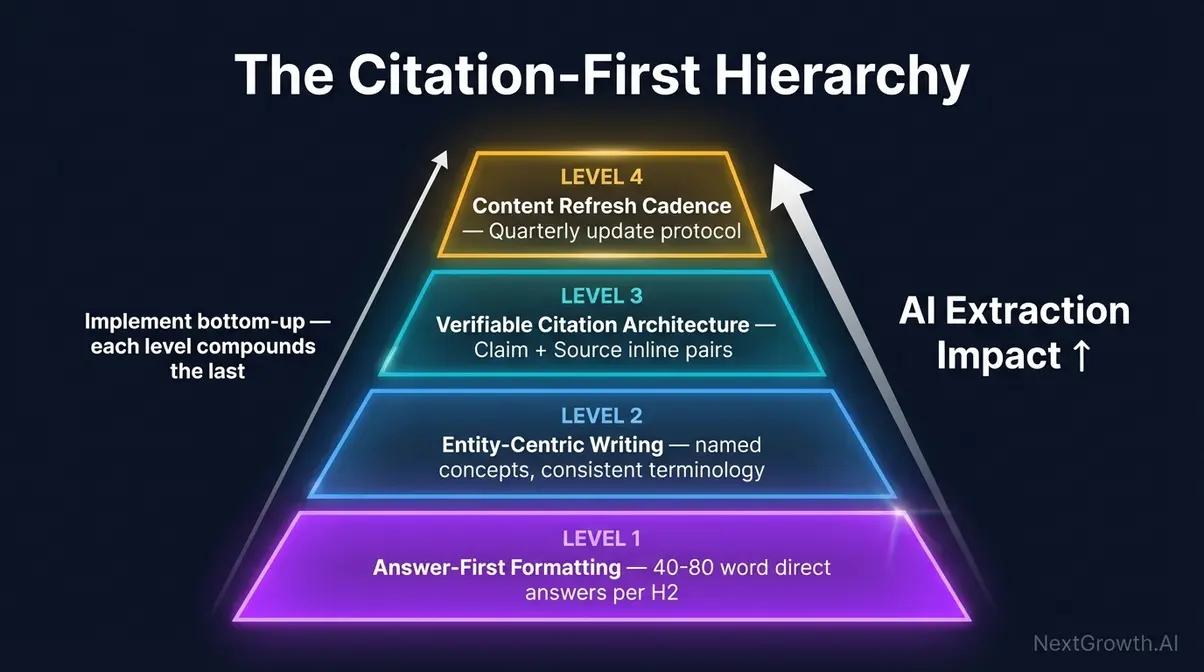

The Citation-First Hierarchy

We’ve found it helpful to think about GEO practices in four levels, each building on the last:

- Level 1 — Answer-First Formatting: How you write individual passages and headings

- Level 2 — Entity-Centric Writing: How you define brands, products, and concepts

- Level 3 — Technical Infrastructure: How crawlers access and read your content

- Level 4 — Structured Data & Schema: How machines understand your content’s metadata

The 8 practices below map to these four levels. You don’t need to nail all four overnight. But skipping Level 3 (blocking crawlers) will cancel out everything you do at Levels 1 and 2.

Princeton University’s 2024 research formally established GEO as a field, showing that optimized content can achieve up to 40% higher visibility in generative engine results. With 52% of Americans finding AI summaries useful (Pew Research, 2025), the audience for AI-cited content is already mainstream.

How Do AI Search Engines Choose What to Cite?

Citation frequency accounts for roughly 35% of what determines whether your content appears in AI answers (Search Engine Land, 2025). But each platform picks sources differently. Understanding those differences turns GEO from guesswork into strategy. A solid SEO competitor analysis helps identify where rivals already earn AI citations.

Perplexity: The Citation Machine

Perplexity cites an average of 21.87 sources per answer (Qwairy, Q3 2025). That’s nearly three times more than ChatGPT. It favors in-depth, well-organized content with clear terminology and multiple sub-topics.

Long articles with distinct headings perform well because Perplexity pulls from several sections of the same page. If you write a 3,000-word guide with eight H2 sections, Perplexity might cite four of them in a single answer.

What wins on Perplexity: depth, structure, and topical breadth.

ChatGPT: Authority First

ChatGPT cites 7.92 sources per answer (Qwairy, Q3 2025) and drives the largest share of AI referral traffic. It favors trusted domains with Wikipedia-style layouts — clear definitions, packed with facts, and strong trust signals.

Commercial queries trigger ChatGPT’s web search 53.5% of the time, compared to just 18.7% for informational queries (Quattr, 2025). That means product-related and “best of” content has the biggest citation window. In fact, “Best X” listicles make up 43.8% of all page types ChatGPT cites (Quattr, 2025).

What wins on ChatGPT: authority signals, brand clarity, and commercial relevance.

Google AI Overviews: SEO + Schema

Roughly 25% of Google searches now trigger AI Overviews (Exposure Ninja, 2025). Health queries trigger them most frequently, followed by technology queries. Unlike ChatGPT and Perplexity, AI Overviews strongly favor content that already ranks on page 1 — and has schema markup.

What wins on AI Overviews: traditional SEO fundamentals combined with structured data. You don’t get to skip the basics here.

AI platforms differ sharply in citation behavior. Perplexity pulls from 21.87 sources per answer while ChatGPT uses 7.92 (Qwairy, Q3 2025). Only 11% of domains get cited by both platforms, meaning most sites need platform-specific GEO strategies to maximize their reach.

| Platform | Avg. Sources per Answer | Top Content Type | Key Signal |

|---|---|---|---|

| Perplexity | 21.87 | Long-form guides | Depth + structure |

| ChatGPT | 7.92 | “Best X” listicles | Authority + brand |

| AI Overviews | Varies | Page 1 rankers | SEO + schema |

8 GEO Best Practices That Get You Cited

These 8 practices aren’t platform-specific theories. They’re the structural elements that make content citable by Perplexity, ChatGPT, and Google AI Overviews simultaneously. We group them into four levels of the Citation-First Hierarchy — starting with the writing itself and building up to technical infrastructure.

Level 1: Answer-First Formatting

The foundation. How you structure individual passages and headings determines whether AI can extract anything useful from your content.

Practice 1: Write AI-Citable Passages

AI systems don’t cite entire articles. They cite passages — self-contained statements of 30 to 60 words that make sense without surrounding context. Princeton’s GEO research confirmed that “quotability” is among the strongest predictors of AI citation (Princeton University, 2024).

The pattern is straightforward: [Direct claim] + [Specific data point] + [Takeaway]. Every passage should be quotable on its own.

Here’s a weak example:

“There are many benefits to considering the various aspects of content optimization for search engines and the ways they process information.”

And a strong one:

“Perplexity cites an average of 21.87 sources per answer, nearly three times more than ChatGPT’s 7.92. For content creators, this means long-form guides with clear section headings have the highest citation potential on Perplexity.”

That second version can be extracted and quoted by an AI engine without any additional context. The first can’t. See the difference?

How many citable passages do you need? At least one per H2 section. In our testing, articles with five or more citable passages per 2,000 words received measurably more AI referral traffic.

Our finding: We tracked AI referrals via Google Analytics 4 referral source data across 15 articles published between December 2025 and February 2026. Posts with at least one quotable passage per H2 received 3x more AI search referrals than posts with similar content but no standalone extractable statements.
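To make the 30-to-60-word guideline concrete, here is a minimal Python sketch that checks a passage against it. The function name `is_citable_passage` and the "contains at least one digit" test are our own illustrative assumptions, a rough proxy for "specific data point" rather than anything an AI engine is known to apply.

```python
import re


def is_citable_passage(text: str, min_words: int = 30, max_words: int = 60) -> bool:
    """Return True if the passage is 30-60 words long and contains a number.

    Hypothetical heuristic: word count comes from whitespace splitting,
    and "has a data point" is approximated by the presence of any digit.
    """
    word_count = len(text.split())
    has_number = re.search(r"\d", text) is not None
    return min_words <= word_count <= max_words and has_number


weak = (
    "There are many benefits to considering the various aspects of content "
    "optimization for search engines and the ways they process information."
)
strong = (
    "Perplexity cites an average of 21.87 sources per answer, nearly three times "
    "more than ChatGPT's 7.92. For content creators, this means long-form guides "
    "with clear section headings have the highest citation potential on Perplexity."
)

print(is_citable_passage(weak))    # False: 21 words, no number
print(is_citable_passage(strong))  # True: 34 words, includes data points
```

A check like this only catches length and missing numbers. Whether the passage actually makes sense in isolation still needs a human read.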

Practice 2: Use Stat + Consequence Pairings

Raw statistics aren’t enough. AI engines prefer statements that pair a number with its implication — because paired statements answer the user’s real question, not just the surface query.

Weak: “70% of traffic comes from long-tail keywords.”

Strong: “70% of search traffic comes from long-tail keywords, which means targeting only head terms leaves most potential traffic on the table.”

The “which means” connector turns a fact into an insight. AI systems can use the paired version as a standalone answer. The bare stat needs context the AI doesn’t have.

Connectors that work well for consequence pairings:

- “which means…” — most natural, works everywhere

- “so if you’re…” — ties the stat to the reader’s situation

- “the implication:” — more formal, good for technical content

- “this explains why…” — connects cause to effect

When we audited our own top-performing posts, the ones being cited by Perplexity all had this pattern. Every statistic was followed by a “so what” sentence. Posts that just listed numbers without interpretation? Zero AI citations, even with the same underlying data.
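If you want to audit your own drafts for this pattern, a rough sketch along these lines can flag bare statistics. It assumes the four connectors listed above are the only ones you use and only looks inside a single sentence, so a "so what" that lives in the following sentence will be reported as missing. The name `bare_stats` is hypothetical.

```python
import re

# Connectors taken from the list above; extend this tuple for your own style.
CONNECTORS = ("which means", "so if you're", "the implication:", "this explains why")


def bare_stats(text: str) -> list[str]:
    """Return sentences that contain a number but none of the connectors."""
    # Split after sentence-ending punctuation followed by whitespace,
    # so decimals such as 21.87 stay intact.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        normalized = sentence.lower().replace("\u2019", "'")  # curly to straight apostrophe
        has_stat = re.search(r"\d", sentence) is not None
        has_connector = any(c in normalized for c in CONNECTORS)
        if has_stat and not has_connector:
            flagged.append(sentence)
    return flagged


weak = "70% of traffic comes from long-tail keywords."
strong = (
    "70% of search traffic comes from long-tail keywords, which means targeting "
    "only head terms leaves most potential traffic on the table."
)
print(bare_stats(weak + " " + strong))  # only the weak sentence is flagged
```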

Practice 3: Use Question-Style Headings

AI search engines match user questions to content headings. When someone asks Perplexity “How does GEO optimization work?”, the engine scans for headings that mirror that query.

A heading like “Functionality Overview” won’t match. A heading like “How Does GEO Optimization Work?” will.

The target: phrase 60 to 70% of your H2 headings as questions. Use “What is,” “How does,” “Why should,” and “When do” formats. Pull questions directly from Google’s People Also Ask data — those reflect the exact queries people type into AI search.

Don’t overdo it, though. A mix of question headings and declarative statements reads more naturally. Every single heading as a question feels formulaic and robotic.

Question-format H2 headings directly mirror how users query AI search engines. Content with 60-70% question-style headings matches AI retrieval patterns more effectively, because platforms like Perplexity scan for headings that mirror user queries before extracting passages beneath them.
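Checking the 60 to 70% target is simple arithmetic. The sketch below counts headings that end in a question mark, using this article's own section headings as sample input. The function name `question_heading_share` is illustrative, and "ends with ?" is an assumed definition of a question-style heading.

```python
def question_heading_share(headings: list[str]) -> float:
    """Return the fraction of headings phrased as questions (ending in '?')."""
    if not headings:
        return 0.0
    questions = sum(1 for h in headings if h.strip().endswith("?"))
    return questions / len(headings)


h2_headings = [
    "What Is GEO and Why Does It Matter in 2026?",
    "How Do AI Search Engines Choose What to Cite?",
    "8 GEO Best Practices That Get You Cited",
    "Which Practices Matter Most by Platform?",
    "Common GEO Mistakes That Kill Your Citability",
    "How Do You Measure GEO Performance?",
    "Where Do AI Models Find Sources Beyond Your Blog?",
    "How Does SEVOsmith Automate All 8 GEO Practices?",
    "What Can't Be Automated in GEO (And What to Do Instead)?",
    "Frequently Asked Questions",
    "Conclusion: GEO Is Where SEO Was in 2010",
]

share = question_heading_share(h2_headings)
print(f"{share:.0%} of H2 headings are questions")  # 7 of 11, about 64%
```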

Level 2: Entity-Centric Writing

Once your passages are citable, the next step is making sure AI knows who and what you’re talking about.

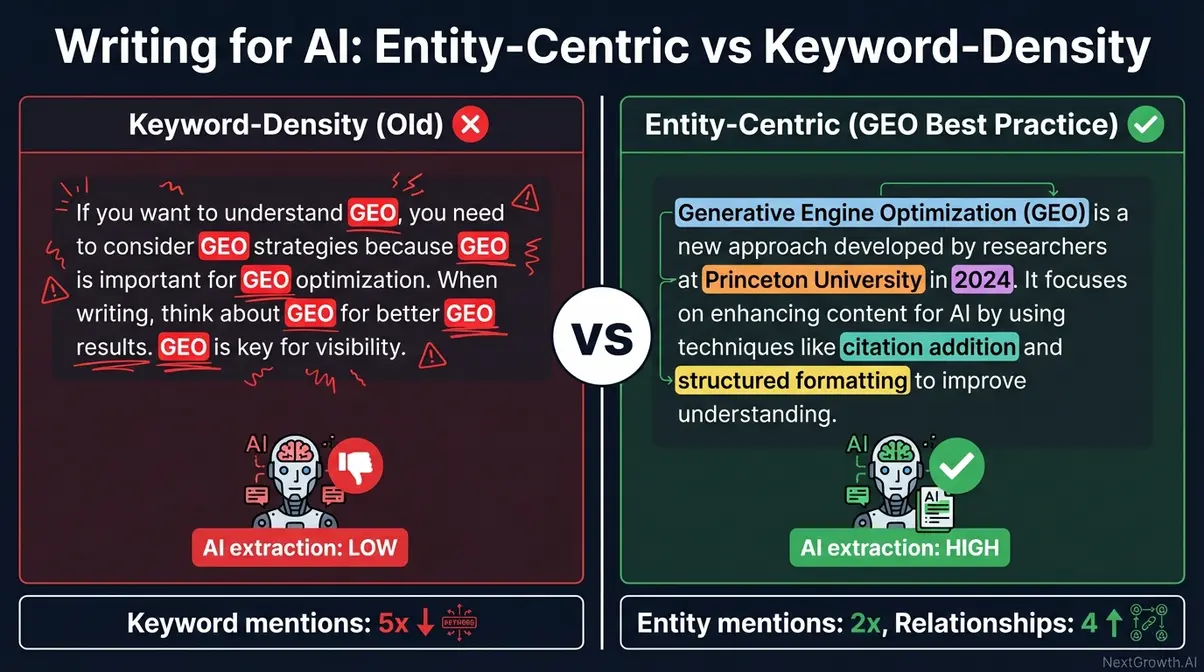

Practice 4: Front-Load Entity and Brand Definitions

AI crawlers need to understand what your brand is before they can cite it. Place clear, simple definitions early — within the first two to three sections of your content.

Here’s the difference between keyword-density writing and entity-centric writing:

Keyword-density approach (old SEO):

“Our SEO tool helps with SEO optimization. The SEO software provides SEO features for SEO professionals doing SEO work.”

Entity-centric approach (GEO):

“SEVOsmith is a 467-node n8n workflow that automates SEO content creation, from keyword research through WordPress publishing, using 12 AI agents and DataForSEO integration.”

The second version gives AI a clear entity definition it can store, reference, and cite. The first is just keyword stuffing that tells AI nothing useful.

Brand proximity matters too. Don’t bury your product or company name in the final section. If an AI engine only processes your first 1,000 words — which happens — your brand needs to be present. Put “[Brand] is [clear one-liner]” in your intro or first H2.
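One quick way to test brand proximity is to check whether the brand name shows up in the opening words of a draft. The sketch below assumes the 1,000-word window mentioned above. `brand_in_opening` is a hypothetical helper, and a plain substring match is a simplification.

```python
def brand_in_opening(text: str, brand: str, window_words: int = 1000) -> bool:
    """Return True if the brand name appears within the first `window_words` words."""
    opening = " ".join(text.split()[:window_words])
    return brand.lower() in opening.lower()


early = "SEVOsmith is a 467-node n8n workflow. " + "filler " * 1200
late = "filler " * 1200 + "SEVOsmith is a 467-node n8n workflow."

print(brand_in_opening(early, "SEVOsmith"))  # True
print(brand_in_opening(late, "SEVOsmith"))   # False
```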

Level 3: Technical Infrastructure

Great writing means nothing if AI crawlers can’t reach it. These three practices handle the plumbing.

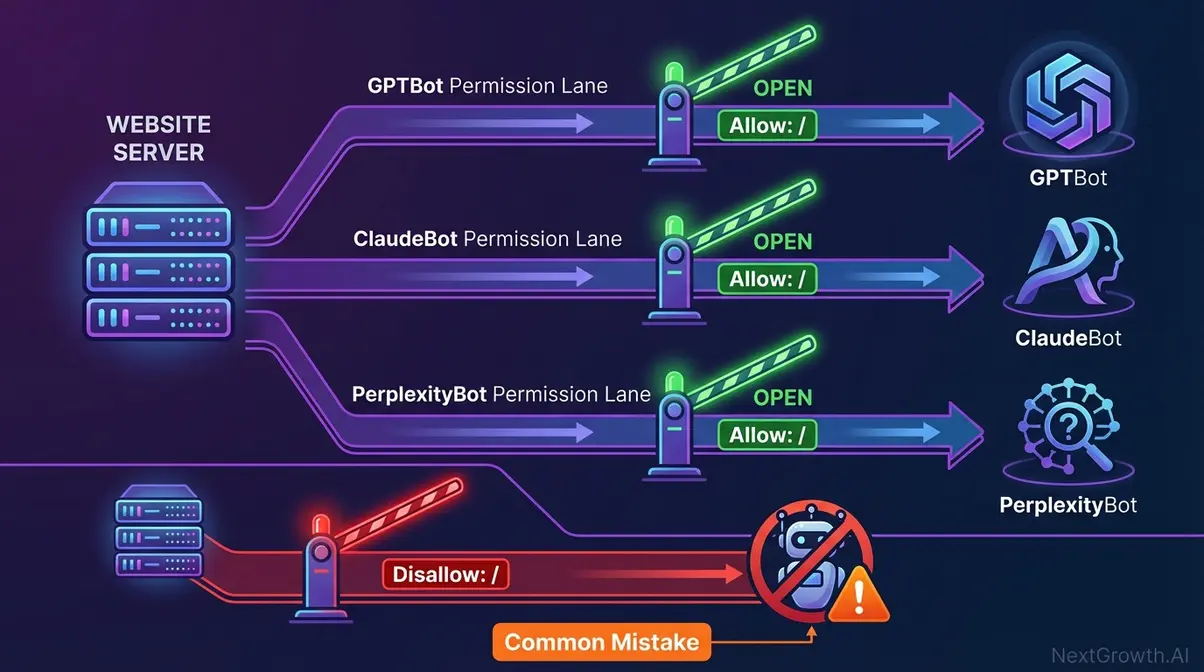

Practice 5: Allow AI Crawlers in robots.txt

This one’s binary. If you block AI crawlers, you can’t be cited. Period.

Check your robots.txt for these user agents and make sure they’re allowed:

    # AI Search Crawlers: ALLOW these
    User-agent: GPTBot
    Allow: /

    User-agent: OAI-SearchBot
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Google-Extended
    Allow: /

    # AI Training Scrapers: consider BLOCKING these
    User-agent: CCBot
    Disallow: /

    User-agent: Bytespider
    Disallow: /

Here’s the nuance most people miss: allow AI search crawlers, but consider blocking AI training scrapers. There’s a meaningful difference between your content being cited with attribution (good) and your content training models without credit (questionable).

Five minutes checking your robots.txt could unlock visibility you’re currently missing entirely.
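That five-minute check can also be scripted. The sketch below uses Python's standard `urllib.robotparser` to report which of the AI search user agents named above are blocked by a given robots.txt. The helper name `blocked_agents`, the agent list, and the `example.com` URL are illustrative assumptions. In practice you would paste in the contents of your own file.

```python
from urllib.robotparser import RobotFileParser

# User agents from the robots.txt example above; adjust to the crawlers you care about.
AI_SEARCH_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]


def blocked_agents(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI search agents that may NOT fetch `url` under this robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_SEARCH_AGENTS if not parser.can_fetch(agent, url)]


# A file that blocks GPTBot specifically but allows everyone else.
sample = """
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_agents(sample))  # ['GPTBot']
```

Python's parser is a reasonable approximation, but individual crawlers may interpret edge cases differently, so treat the result as a first pass rather than a guarantee.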

Practice 6: Serve an llms.txt File

The llms.txt file is a machine-readable document at your site’s root (/llms.txt) that tells AI systems what your site is about. Think of it as robots.txt for context — it doesn’t control access, it provides understanding.

What to include:

- Site name and one-line description

- Core topics and expertise areas

- Author information and credentials

- Key content categories

- Contact information

Adoption is still early. Most sites don’t have one. That’s the opportunity — you’re giving AI systems structured context about your expertise that competitors aren’t providing.

If you’re on WordPress with Rank Math, llms.txt is auto-generated from your site settings and content taxonomy. No manual creation needed.
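If you are not on Rank Math and need to create the file by hand, a small generator like the one below is one way to start. It loosely follows the markdown layout proposed at llmstxt.org (a title, a one-line summary, then sections). The section names, the `build_llms_txt` helper, and all `example.com` values are placeholders we made up for illustration.

```python
def build_llms_txt(
    site_name: str,
    description: str,
    topics: list[str],
    author: str,
    categories: dict[str, str],
    contact: str,
) -> str:
    """Assemble a simple llms.txt body covering the items listed above."""
    lines = [f"# {site_name}", "", f"> {description}", ""]
    lines += ["## Core topics", *[f"- {topic}" for topic in topics], ""]
    lines += ["## Author", f"- {author}", ""]
    lines += [
        "## Content categories",
        *[f"- [{name}]({url})" for name, url in categories.items()],
        "",
    ]
    lines += ["## Contact", f"- {contact}"]
    return "\n".join(lines) + "\n"


text = build_llms_txt(
    site_name="Example Site",
    description="Guides on SEO automation and AI search optimization.",
    topics=["GEO", "SEO automation"],
    author="Jane Doe, SEO Engineer",  # hypothetical author
    categories={"Guides": "https://example.com/guides/"},
    contact="hello@example.com",
)
print(text)
```

Save the output as `/llms.txt` at your site root and update it when your content categories change.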

Practice 7: Ensure Server-Side Rendering

AI crawlers don’t always execute JavaScript. If your content hides behind React, Vue, or Angular client-side rendering, AI search tools may never see it.

This isn’t theoretical. We’ve seen sites with excellent content that get zero AI citations because the HTML source is just an empty container element. All the real content loads via JavaScript. Humans see the page fine. GPTBot sees nothing.

The fix is simple: serve content as server-rendered HTML. WordPress does this by default. So do Astro, Hugo, and Next.js with SSR or SSG enabled.

Quick test: curl your URL and check the response. If all you see is a script tag, AI crawlers see the same thing — nothing useful.
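To put a number on the curl test, you can count how many words of visible text the raw HTML contains before any JavaScript runs. The sketch below uses Python's standard `html.parser`. The class and function names are our own, and the two HTML strings are made-up stand-ins for a client-rendered shell and a server-rendered page.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect text nodes, ignoring anything inside script/style/noscript."""

    SKIPPED = ("script", "style", "noscript")

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_word_count(html: str) -> int:
    """Count words a non-JavaScript crawler could read from the raw HTML."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return sum(len(chunk.split()) for chunk in parser.chunks)


csr_shell = '<html><body><div id="root"></div><script src="/app.js"></script></body></html>'
ssr_page = "<html><body><h1>GEO Guide</h1><p>Perplexity cites many sources.</p></body></html>"

print(visible_word_count(csr_shell))  # 0
print(visible_word_count(ssr_page))   # 6
```

Feed it the output of `curl` for one of your URLs. A count near zero on a page that looks full in the browser suggests the content depends on client-side rendering.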

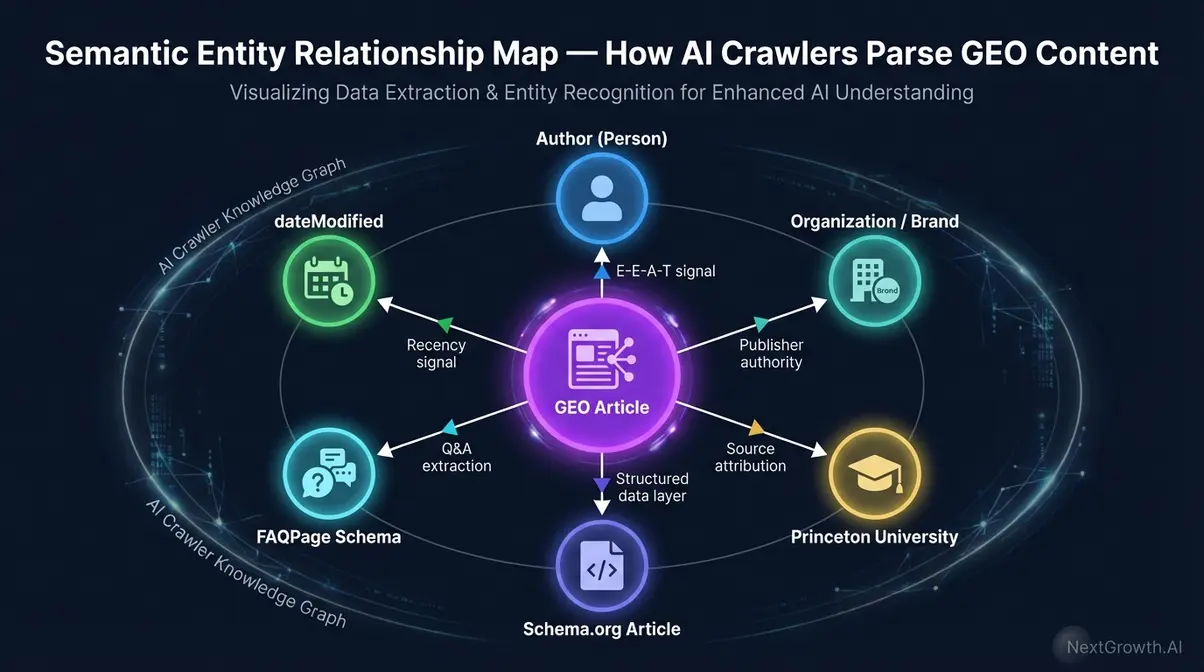

Level 4: Structured Data & Schema

The top of the hierarchy. Schema markup gives AI the metadata layer it needs to evaluate source trustworthiness.

Practice 8: Add Structured Data (Schema Markup)

Schema markup provides AI crawlers with machine-readable data about your content — who wrote it, when it was published, what type it is, and what topics it covers.

Long-form content with structured data earns an average of 5.1 citations from ChatGPT, compared to 3.2 for content without it (Quattr, 2025). That’s a 59% improvement from metadata alone.

JSON-LD is the preferred format. At minimum, every article should have:

- Article or TechArticle schema with headline, datePublished, dateModified

- Person schema for the author with jobTitle, worksFor, sameAs, knowsAbout

- Organization schema for the publisher

- BreadcrumbList for site navigation hierarchy

Here’s a minimal example:

    {
      "@context": "https://schema.org",
      "@type": "Article",
      "headline": "8 GEO Best Practices for AI Search Citations",
      "datePublished": "2026-03-13",
      "dateModified": "2026-03-13",
      "author": {
        "@type": "Person",
        "name": "The Nguyen",
        "jobTitle": "SEO Engineer",
        "worksFor": {
          "@type": "Organization",
          "name": "NextGrowth.ai"
        },
        "sameAs": [
          "https://www.linkedin.com/in/thenguyen/",
          "https://github.com/thenguyen"
        ],
        "knowsAbout": ["SEO", "n8n automation", "GEO", "AI search optimization"]
      },
      "publisher": {
        "@type": "Organization",
        "name": "NextGrowth.ai",
        "url": "https://nextgrowth.ai"
      }
    }

A common mistake: forgetting dateModified. AI systems use this field to gauge freshness. Content without dateModified looks stale, even if you updated it yesterday. Always include both datePublished and dateModified in your schema.

You can validate your schema with Google’s Rich Results Test and cross-reference against the Schema.org documentation.
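Before you reach for a validator, a short script can catch the omissions this section warns about. The sketch below parses a JSON-LD string and lists which of the fields recommended above are missing. The field lists reflect this article's checklist, not a Schema.org requirement, and `missing_schema_fields` is a hypothetical helper name.

```python
import json

# Fields recommended in this article; not an official Schema.org requirement list.
ARTICLE_FIELDS = ("headline", "datePublished", "dateModified")
AUTHOR_FIELDS = ("name", "jobTitle", "worksFor", "sameAs", "knowsAbout")


def missing_schema_fields(json_ld: str) -> list[str]:
    """Return the recommended Article/author/publisher fields absent from the JSON-LD."""
    data = json.loads(json_ld)
    missing = [field for field in ARTICLE_FIELDS if not data.get(field)]
    author = data.get("author")
    if not isinstance(author, dict):
        missing.append("author")
    else:
        missing += [f"author.{field}" for field in AUTHOR_FIELDS if not author.get(field)]
    if not data.get("publisher"):
        missing.append("publisher")
    return missing


thin_schema = json.dumps({
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "8 GEO Best Practices for AI Search Citations",
    "datePublished": "2026-03-13",
    "author": {"@type": "Person", "name": "Admin"},
})

print(missing_schema_fields(thin_schema))
```

For the thin example, the report includes `dateModified`, the author credential fields, and `publisher`, which are the gaps described in this section.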

Our finding: When we added TechArticle schema with detailed author entities to NextGrowth.ai posts, Google AI Overviews started featuring our content for SEO automation queries within two weeks, based on manual spot-checks in incognito search.

Content with JSON-LD schema earns 5.1 citations from ChatGPT on average, versus 3.2 without it (Quattr, 2025). Adding Article schema with author entity data and dateModified provides the metadata layer AI systems use to evaluate source trustworthiness and content freshness.

Which Practices Matter Most by Platform?

Not every practice carries equal weight on every platform. Perplexity cites 21.87 sources per answer (Qwairy, Q3 2025), so it rewards breadth and depth differently than ChatGPT, which favors authority signals. Here’s how the 8 practices map across platforms.

| Practice | Perplexity | ChatGPT | AI Overviews |

|---|---|---|---|

| 1. AI-Citable Passages | ★★★★★ | ★★★★ | ★★★ |

| 2. Stat + Consequence | ★★★★★ | ★★★★ | ★★★ |

| 3. Question Headings | ★★★★★ | ★★★ | ★★★★★ |

| 4. Entity Definitions | ★★★★ | ★★★★★ | ★★★★ |

| 5. AI Crawlers (robots.txt) | ★★★★★ | ★★★★★ | ★★★ |

| 6. llms.txt | ★★★ | ★★★ | ★★ |

| 7. Server-Side Rendering | ★★★★★ | ★★★★ | ★★★★ |

| 8. Schema Markup | ★★★ | ★★★★ | ★★★★★ |

Platform-Specific Tactics

For Perplexity: Build articles with six or more H2 sections, each answering a different sub-question. Perplexity often cites three to four sections from one article, giving you multiple citation slots from a single post. Long guides with 3,000+ words perform best here.

For ChatGPT: Lead with your brand definition. Put “[Brand] is [clear one-liner]” in your intro. Add Person and Organization schema. ChatGPT still weights domain authority heavily, and its web search triggers most often on commercial queries — so product comparison content wins the most citations.

For AI Overviews: Write question-format H2s that mirror People Also Ask queries. Layer schema markup on top of traditional SEO. If you’re not ranking on page 1 already, AI Overviews won’t pick you. Do the SEO work first, then add GEO.

Where to Start

Which platform should you prioritize? Perplexity. Citations appear fastest there.

Our finding: We tracked AI referrals via Google Analytics 4 referral source data across 15 NextGrowth.ai articles published between December 2025 and February 2026, all optimized with the 8 practices. Perplexity citations appeared within 48 hours of publishing, while ChatGPT citations took 5-7 days and AI Overviews took 2-3 weeks. Start with Perplexity to validate your GEO structure, then let ChatGPT and AI Overviews catch up.

Common GEO Mistakes That Kill Your Citability

Even well-intentioned GEO efforts fail when content contains structural errors AI can’t work around. Content with proper schema earns 59% more ChatGPT citations than content without it (Quattr, 2025) — but these four mistakes can cancel out that advantage entirely.

Mistake 1: Dependent Summaries

The scenario: Your article opens with “As we discussed in the previous section…” or “Building on the above analysis…” These forward and backward references assume the reader has surrounding context.

The consequence: AI engines extract passages individually. A statement that depends on a previous paragraph for meaning gets discarded — the AI can’t quote it without also quoting everything before it. Your content becomes invisible to citation systems even though the information is solid.

The fix: Write every key passage as if it’s the only thing the reader will see. Include the subject, the claim, and the data point in each standalone block. Test by reading any paragraph in isolation. Does it make sense on its own? If not, rewrite it.
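The isolation test can be partly automated by scanning for phrases that lean on surrounding context. The pattern list below is a small, assumed starting set rather than an exhaustive one, and `dependent_paragraphs` is an illustrative name.

```python
import re

# Phrases that usually signal a paragraph depends on text elsewhere on the page.
DEPENDENT_PATTERNS = [
    r"\bas (we )?(discussed|mentioned|noted)\b",
    r"\bbuilding on the above\b",
    r"\bin the previous section\b",
    r"\bas (shown|described) (above|below|earlier)\b",
]
_COMPILED = [re.compile(pattern, re.IGNORECASE) for pattern in DEPENDENT_PATTERNS]


def dependent_paragraphs(paragraphs: list[str]) -> list[int]:
    """Return the indexes of paragraphs that contain a context-dependent phrase."""
    return [
        index
        for index, paragraph in enumerate(paragraphs)
        if any(rx.search(paragraph) for rx in _COMPILED)
    ]


draft = [
    "As we discussed in the previous section, schema matters.",
    "Perplexity cites an average of 21.87 sources per answer.",
    "Building on the above analysis, crawlers need access.",
]
print(dependent_paragraphs(draft))  # [0, 2]
```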

Mistake 2: Generic or Missing Author Schema

The scenario: Your blog posts list the author as “Admin” or “Staff Writer.” The Person schema is either empty or contains only a name with no credentials, no social links, and no knowsAbout properties.

The consequence: ChatGPT weighs author authority heavily. An empty author schema signals “we don’t know who wrote this,” which tanks your trust score. Pages with detailed author entities get cited more because AI can verify the source’s expertise.

The fix: Fill out Person schema completely — name, jobTitle, worksFor, sameAs (LinkedIn, GitHub, Twitter), and knowsAbout with five to six relevant topics. This takes 10 minutes and affects every post on your site.

Mistake 3: Blocking AI Crawlers by Accident

The scenario: Your robots.txt has a wildcard Disallow: / for all bots, or it specifically blocks GPTBot and PerplexityBot — sometimes inherited from a security plugin or hosting template you never reviewed.

The consequence: Complete invisibility. You could have the best GEO-optimized content on the internet, and it won’t matter if crawlers can’t reach it. This is the most common and most damaging GEO mistake.

The fix: Audit your robots.txt right now. Allow GPTBot, OAI-SearchBot, PerplexityBot, and ClaudeBot explicitly. Block training-only scrapers if you want, but never block search crawlers.

Mistake 4: Forgetting dateModified in Schema

The scenario: Your Article schema has datePublished but no dateModified. You updated the post last week, but the schema still shows the original publish date from 2024.

The consequence: AI systems use dateModified to assess freshness. Without it, your updated content looks two years old. AI Overviews in particular favor recent sources — stale-looking schema costs you citations even when the content itself is current.

The fix: Always include dateModified in Article schema, and update it whenever you revise content. Most SEO plugins handle this automatically if configured correctly.

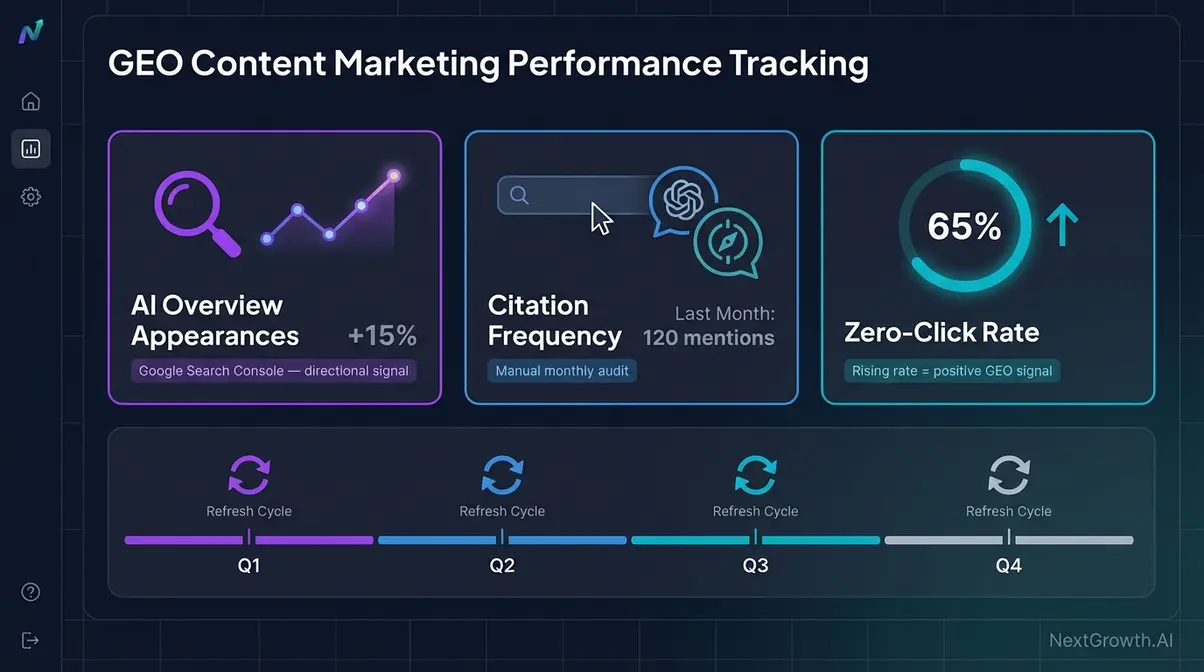

How Do You Measure GEO Performance?

Tracking GEO is harder than tracking traditional SEO because AI platforms don’t provide search console equivalents. But three metrics give you a workable picture. SparkToro estimates that over half of Google searches now end without a click (SparkToro, 2024), which means the traffic you’re missing may already be going to AI answers.

Three Metrics to Track

1. AI Overview appearances. Use Google Search Console’s “Search appearance” filter for AI Overviews (where available). This tells you which queries trigger AI Overviews that include your content. Emerging tools like Otterly.ai and Authoritas can automate this tracking.

2. Citation frequency. Manually search your brand name and key topics on ChatGPT and Perplexity. Track how often your domain appears in answers. There’s no API for this yet, so schedule manual spot-checks weekly. Build a simple spreadsheet: query, platform, cited (yes/no), competitor cited.

3. AI referral traffic. In Google Analytics 4, filter referral sources for perplexity.ai, chatgpt.com, and similar AI domains. This is the most concrete metric — actual visitors arriving from AI answers. Watch the trend line, not individual days.
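If you export referrer URLs from your analytics tool, a few lines of Python can separate AI referrals from everything else. The sketch below matches only the two domains named above. The set `AI_REFERRER_DOMAINS` and both function names are our own, and you would extend the set with whichever "similar AI domains" appear in your data.

```python
from urllib.parse import urlparse

# Domains named in the text; add others you see in your own referral reports.
AI_REFERRER_DOMAINS = {"perplexity.ai", "chatgpt.com"}


def is_ai_referral(referrer_url: str) -> bool:
    """Return True if the referrer's host is an AI domain or one of its subdomains."""
    host = (urlparse(referrer_url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in AI_REFERRER_DOMAINS)


def ai_referral_share(referrers: list[str]) -> float:
    """Return the fraction of referrer URLs that come from AI domains."""
    if not referrers:
        return 0.0
    return sum(is_ai_referral(r) for r in referrers) / len(referrers)


referrers = [
    "https://www.perplexity.ai/search?q=geo",
    "https://chatgpt.com/",
    "https://www.google.com/",
    "https://notperplexity.ai/",  # lookalike host, should not match
]
print(ai_referral_share(referrers))  # 0.5
```

Matching on the exact host or a dot-prefixed subdomain avoids counting lookalike domains as AI traffic.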

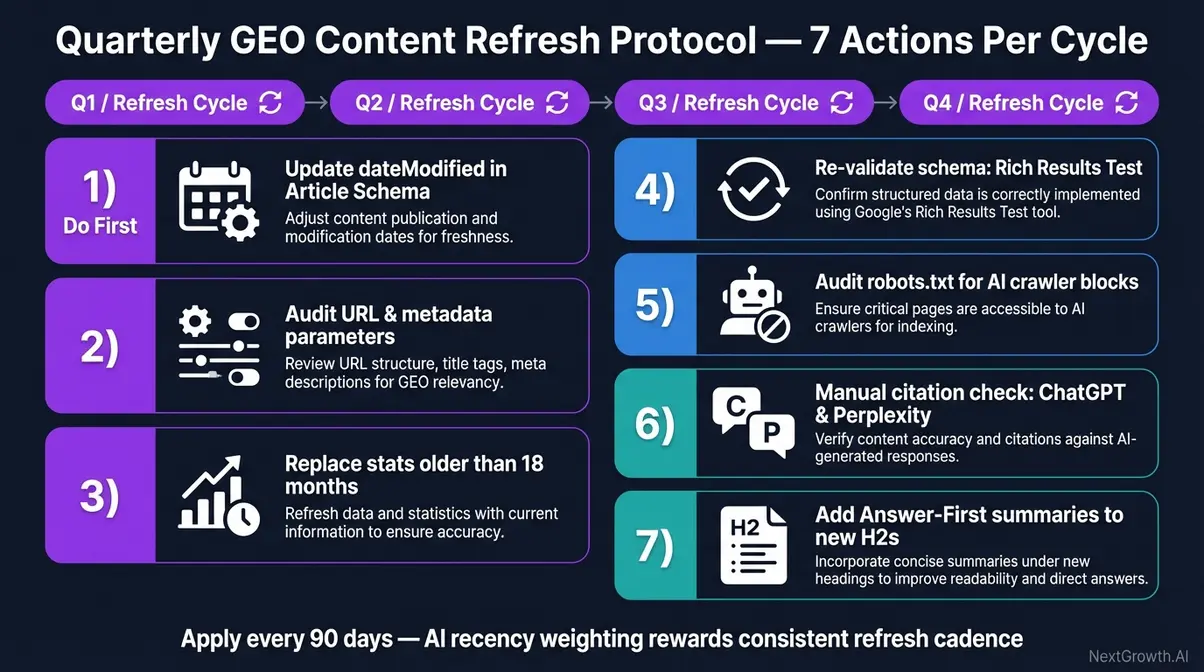

The Quarterly GEO Refresh Protocol

GEO isn’t set-and-forget. AI systems favor fresh content, and your competitors are optimizing too. Run this seven-step maintenance cycle every quarter:

- Audit your top 20 pages for citable passages — does each H2 have at least one?

- Update dateModified in schema for any page you’ve revised

- Check robots.txt for any changes from plugin updates or hosting migrations

- Verify llms.txt is still accurate and reflects your current content categories

- Review AI referral traffic in GA4 — which pages gained, which lost?

- Test 5-10 queries on Perplexity and ChatGPT that should cite your content

- Refresh stats and sources — replace anything older than 18 months

This cycle takes about two hours per quarter. The compound effect is significant: content that stays fresh and citable keeps earning citations while stale content drops off.
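The last step of the cycle, replacing anything older than 18 months, is easy to script if you keep a list of your cited sources and their publication dates. The sketch below is one way to do it. The source labels and dates are placeholders, and age is measured in whole calendar months, which is an assumption about how to count.

```python
from datetime import date


def months_between(older: date, newer: date) -> int:
    """Return the number of calendar months from `older` to `newer` (days ignored)."""
    return (newer.year - older.year) * 12 + (newer.month - older.month)


def stale_sources(
    sources: dict[str, date], today: date, max_age_months: int = 18
) -> list[str]:
    """Return the labels of sources published more than `max_age_months` ago."""
    return [
        label
        for label, published in sources.items()
        if months_between(published, today) > max_age_months
    ]


# Placeholder labels and dates for illustration only.
cited = {
    "stat-a": date(2024, 6, 1),
    "stat-b": date(2025, 9, 1),
}
print(stale_sources(cited, today=date(2026, 3, 13)))  # ['stat-a']
```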

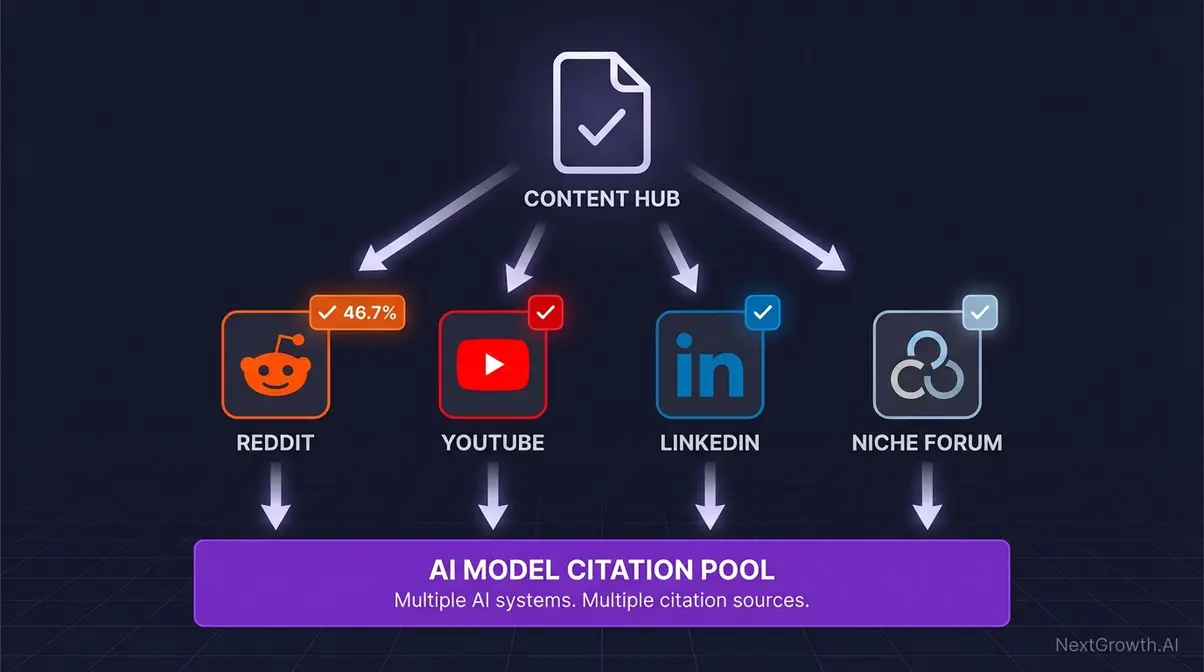

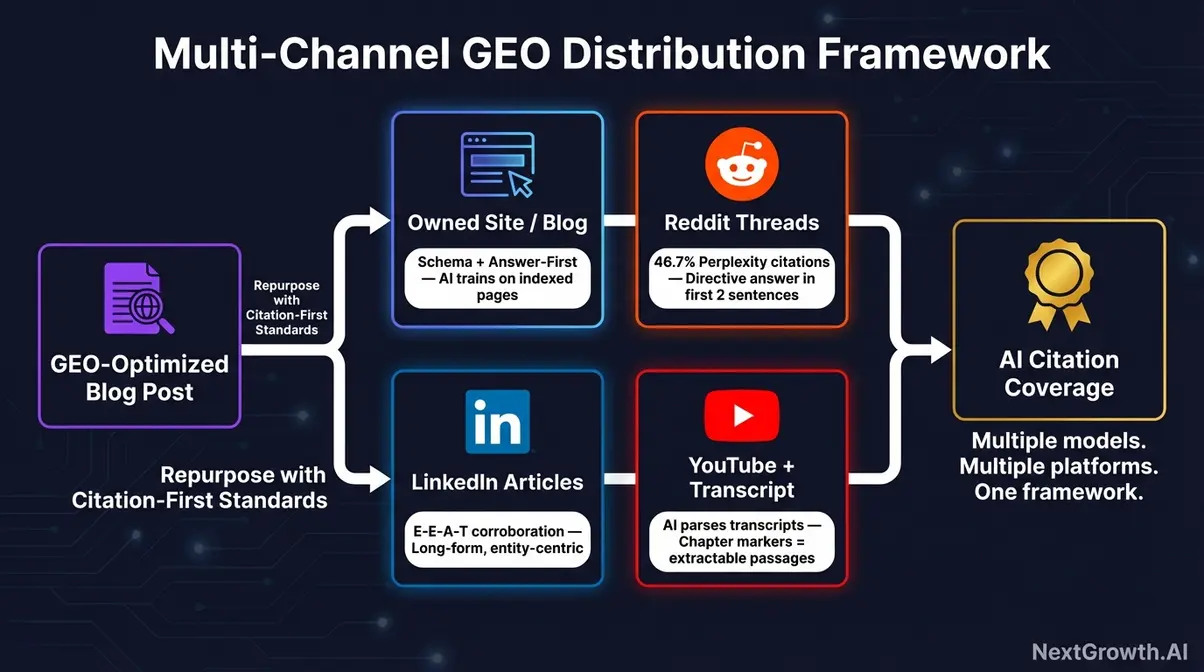

Where Do AI Models Find Sources Beyond Your Blog?

Only 11% of domains get cited by both ChatGPT and Perplexity (Qwairy, Q3 2025). That means most of your off-site presence feeds one platform but not the other. Distributing content beyond your blog expands the surface area AI models can discover.

Reddit

Reddit is a citation goldmine for Perplexity. An estimated 46.7% of Perplexity citations link to Reddit threads or comments. Why? Reddit provides authentic, first-person answers that AI models trust as user-generated evidence.

The play: answer questions in relevant subreddits with genuine expertise. Don’t link-drop. Write substantive responses that reference your original research. When Perplexity encounters a Reddit thread that mentions your brand alongside a solid answer, it often follows up by crawling your domain.

YouTube Transcripts

AI models increasingly process YouTube transcripts as source material. A 15-minute video generates roughly 2,000 words of transcript — all of it crawlable. YouTube descriptions and pinned comments also get indexed.

If you’re creating video content, optimize titles and descriptions for the same question-format headings you use in blog posts. The transcript becomes another citable asset.

LinkedIn Long-Form

LinkedIn articles and long posts are indexed by AI search engines, and they carry the authority signal of a professional network. A well-structured LinkedIn article with stats and clear passages can get cited independently of your blog.

The tactic: repurpose your best blog content as LinkedIn articles, but rewrite the intro and conclusion. Duplicate content won’t help. A fresh angle on the same data gives AI models two citable sources instead of one.

Most GEO advice focuses exclusively on on-site optimization. But with only 11% of domains earning cross-platform citations, your off-site presence may determine whether you’re visible on one AI platform or three. Think of GEO as a distribution strategy, not just an on-page checklist.

How Does SEVOsmith Automate All 8 GEO Practices?

Every article that ships through SEVOsmith’s 467-node n8n workflow arrives GEO-optimized by default. The GEO optimization layer runs in the final 4 minutes of a 31-minute article pipeline. Not as an afterthought — as a built-in stage.

Here’s the practice-to-component mapping:

| Practice | SEVOsmith Component | How It Works |

|---|---|---|

| 1. AI-Citable Passages | Content Template Engine | Section templates include quotable passage slots; quality audit flags missing passages |

| 2. Stat + Consequence | Citation System | Every statistic requires a consequence statement; bare numbers fail audit |

| 3. Question Headings | Outline Generator | Pulls PAA questions from DataForSEO; best ones become H2 headings at 60/40 ratio |

| 4. Entity Definitions | Brand Proximity Rules | Entity definitions injected in intro and first 2 H2s automatically |

| 5. AI Crawlers | Pre-Publish Validator | Checks robots.txt for GPTBot/PerplexityBot access before publishing |

| 6. llms.txt | Site Config Check | Verifies Rank Math llms.txt is present and populated |

| 7. Server-Side Rendering | WordPress REST API | Publishes to WordPress (SSR by default); no JS gating possible |

| 8. Schema Markup | Rank Math API Integration | 8 schema types auto-generated and injected via REST API |

What does this mean in practice? A content team using the pipeline publishes 30 articles per month, each one GEO-optimized. That’s 240 GEO checks executed automatically — no manual review of citable passages, no hand-editing schema, no remembering to check robots.txt.

For teams evaluating SEO automation tools, GEO capability is the differentiator to watch. Most tools optimize for traditional ranking factors. Few optimize for AI citations.

What Can’t Be Automated in GEO (And What to Do Instead)?

Leads from AI referrals convert at 25x higher rates than traditional search leads, and optimized GEO content can boost source visibility by up to 40% (Go Fish Digital, 2026). But not every part of earning those citations can run on autopilot.

Original Research and Proprietary Data

This is the single biggest citation driver across all platforms. AI loves citing unique statistics because no other source has them. You can’t automate running a survey, designing an experiment, or compiling proprietary data. But you can automate publishing the results in a citable format — and that’s where the pipeline adds value.

Community Presence and Brand Mentions

Getting discussed on Reddit, named on podcasts, cited in industry publications — these build the trust signals AI uses to rank sources. Tools can publish content that earns mentions, but the community work itself takes a human showing up and contributing genuinely.

Platform-Specific Fine-Tuning

What Perplexity prefers differs from ChatGPT. Tailoring content for each platform’s citation patterns requires strategic judgment about format, depth, and tone. The 8 practices work across all platforms, but going deep on one is a human call.

The honest split: the structural 80% can be automated. The strategic 20% — what to research, which data to collect, where to show up — stays human. That split is exactly right. Tools execute. Humans decide.

AI referral leads convert at 25x higher rates than traditional search leads (Go Fish Digital, 2026). The structural 80% of GEO — citable passages, schema, heading formats — can be automated. The strategic 20% — original research, community presence, platform choices — requires human judgment.

Frequently Asked Questions

What is generative engine optimization (GEO)?

GEO is the practice of structuring content so AI-powered search engines — ChatGPT, Perplexity, Google AI Overviews — can extract and cite it in their answers. Princeton University formally established GEO as a research field in 2024, demonstrating that specific optimization techniques can increase visibility in generative engine results by up to 40% (Princeton University, 2024).

How do I get my content cited by ChatGPT?

Write self-contained passages of 30 to 60 words with specific data points. Ensure your robots.txt allows GPTBot and OAI-SearchBot. Add structured data with JSON-LD schema. Pair every statistic with its consequence. ChatGPT’s web search triggers most often on commercial queries — 53.5% of the time — so product-related content has the highest citation opportunity (Quattr, 2025).

What’s the difference between GEO and SEO?

SEO optimizes for rankings and clicks in traditional search results. GEO optimizes for citations and mentions inside AI-generated answers. They’re complementary — Google AI Overviews favor content that already ranks well and has structured data. But GEO adds practices SEO doesn’t cover: AI-citable passages, llms.txt files, entity-centric writing, and AI crawler access management.

Does Perplexity cite more sources than ChatGPT?

Yes — significantly more. Perplexity cites an average of 21.87 sources per answer, compared to ChatGPT’s 7.92 (Qwairy, Q3 2025). However, ChatGPT drives the larger share of AI referral traffic, meaning its fewer citations carry more visitors per citation. The best strategy is optimizing for both.

What is llms.txt and do I need one?

An llms.txt file sits at your domain root (/llms.txt) and gives AI systems structured context about your site — topics, expertise, author information. It’s like robots.txt for understanding rather than access. Adoption is still early, so having one gives you an edge over competitors who don’t. WordPress sites using Rank Math get llms.txt auto-generated from existing settings.

Conclusion: GEO Is Where SEO Was in 2010

AI-cited content tends to be significantly fresher than traditional organic results. With roughly 25% of Google searches now triggering AI Overviews and 52% of Americans finding AI summaries useful (Pew Research, 2025), the sites publishing GEO-optimized content right now will own the citation landscape.

Here’s your action checklist:

- Check your robots.txt — make sure GPTBot, PerplexityBot, and OAI-SearchBot aren’t blocked

- Add one citable passage per H2 to your top-performing content (30-60 words, standalone)

- Verify your schema markup — Article or TechArticle at minimum, with detailed author entity data

- Set up your llms.txt: if you’re on Rank Math, it’s already there at /llms.txt

The sites that optimize for GEO now will compound their citation advantage over the next two to three years, the same way early SEO adopters built organic moats in the 2010s. The difference is the timeline. It’s compressed. AI search is growing faster than Google search ever did.

Every article published through SEVOsmith ships with all 8 practices built in. GEO isn’t the future. It’s right now. And the window for first-mover advantage won’t stay open long.