Can SEO Be Automated? The 30/70 Hybrid Guide (2026)

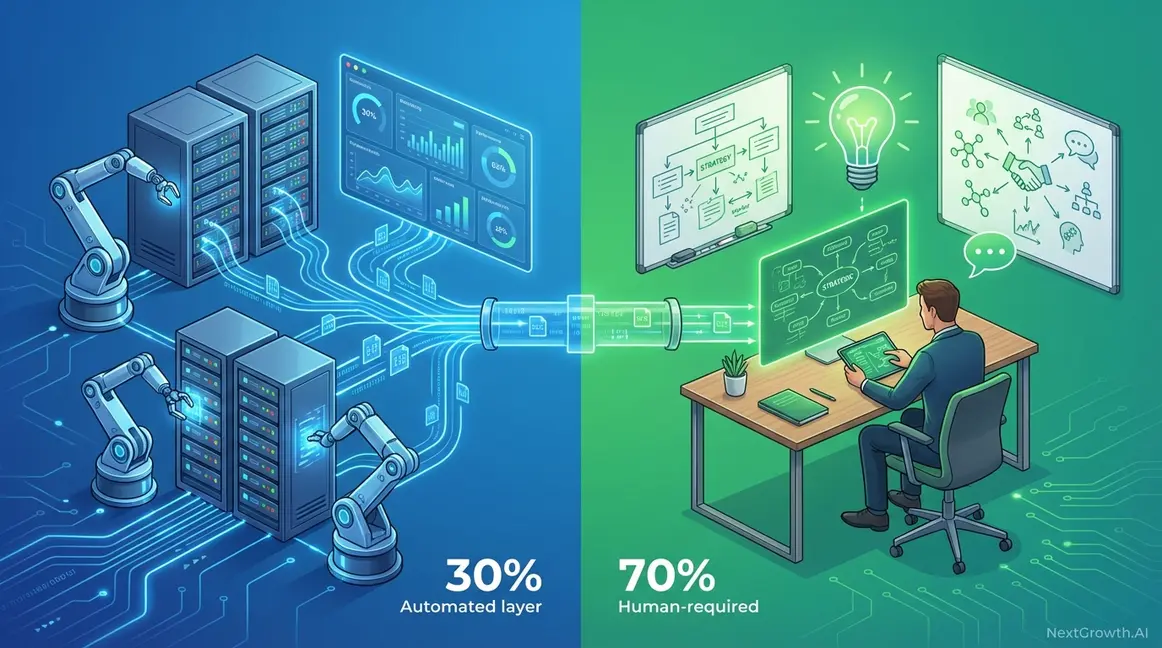

Can SEO be automated? Yes, about 30% of it. I’ve spent the last 18 months automating SEO workflows at NextGrowth.ai using n8n, and the results are clear: repetitive tasks like rank tracking, technical audits, and schema generation run reliably on autopilot with light monitoring. But strategy, content quality, and relationship-building? Those still need a human brain.

This guide gives you a practical framework — the 30/70 Hybrid Architecture — for deciding exactly which SEO tasks to automate, which to augment with AI, and which to keep human-led. It’s based on real workflow data from automating content production for 30+ published articles, not theory. For a comprehensive guide, see our on-page SEO checklist.

TL;DR: Automate data-heavy SEO tasks (rank tracking, broken link monitoring, schema markup, reporting) to reclaim 15-20 hours per week. Keep strategy, content review, and outreach human-led. According to McKinsey’s 2024 State of AI report, 65% of organizations now use generative AI in at least one business function, but BCG found that 74% struggle to scale AI value, which suggests that adopting automation without human oversight rarely pays off.

Key Takeaways

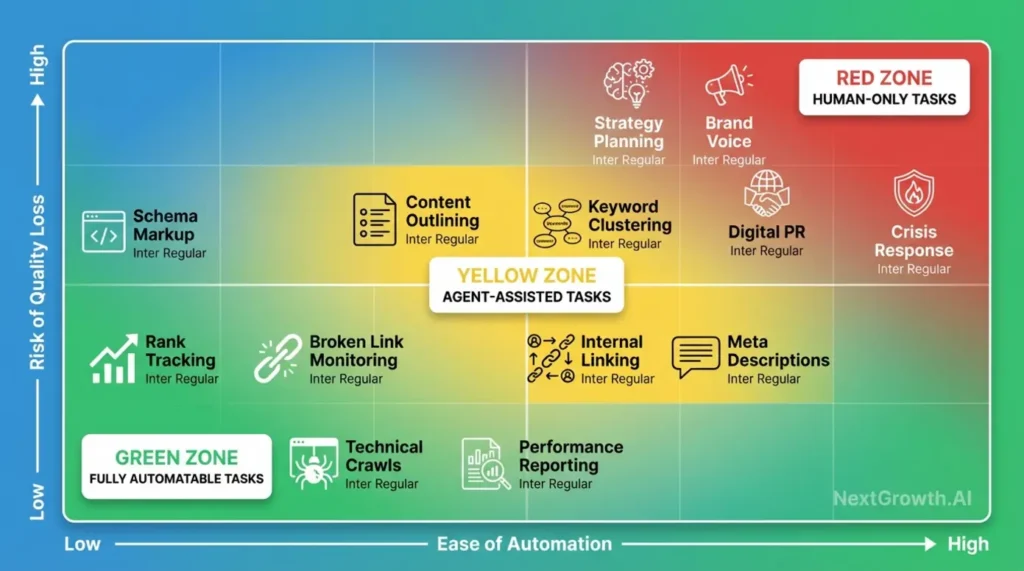

- Fully automatable (30%): Rank tracking, broken link monitoring, schema generation, performance reporting, sitemap validation

- AI-assisted with human review: Content outlining, keyword clustering, internal linking suggestions, meta description drafts

- Human-only (70%): Strategic planning, brand voice, digital PR, final content review, crisis management

- Non-negotiable: Google’s scaled content abuse policy (March 2024) targets bulk AI publishing without quality control — always use validation loops

Contents

- What SEO Tasks Can Actually Be Automated?

- Where Does SEO Automation Break Down?

- Why Is “Set-and-Forget” Automation a Myth?

- What Should Your 2026 Automation Safety Checklist Include?

- How Do You Actually Implement SEO Automation?

- Will SEO Still Exist in 5 Years?

- Frequently Asked Questions

- Start Automating: Your Next Step

What SEO Tasks Can Actually Be Automated?

About 30% of SEO work is fully automatable — specifically the data-heavy, rule-based tasks that follow predictable patterns. The 2024 McKinsey State of AI report found that 65% of organizations now regularly use generative AI in at least one business function, up from 33% the previous year. SEO is no exception.

Here’s what I’ve personally automated at NextGrowth.ai using n8n workflows, and what I’ve learned doesn’t work without human involvement:

| Category | Tasks | Automation Level | My Setup |
|---|---|---|---|
| Technical SEO | Site crawls, schema markup, Core Web Vitals monitoring, broken link detection, sitemap generation | 🟢 Fully automatable | n8n + Screaming Frog CLI + PageSpeed Insights |
| On-Page SEO | Internal link suggestions, keyword density checks, image alt text drafts, meta tag templates | 🟡 AI + human review | n8n + Claude API + manual approval |
| Content | Keyword clustering, content gap analysis, outline generation, readability scoring | 🟡 AI + human review | DataForSEO API + Claude + human editing |
| Off-Page SEO | Backlink monitoring, brand mention tracking, competitor link analysis | 🟡 AI + human review | Ahrefs API + n8n alerts |
| Strategy & PR | Strategic planning, digital PR, crisis response, content positioning | 🔴 Human only | No automation; judgment required |

What I Learned After 18 Months of SEO Automation

When I started building SEO automation workflows in mid-2024, I made every mistake in this guide. My first n8n workflow tried to automate content publishing end-to-end — keyword research through to WordPress publication with zero human review. The results looked fine at first glance but fell apart under scrutiny: thin introductions, recycled statistics without sources, and the kind of uniform sentence structure that screams “AI-generated” to both readers and Google’s systems.

The turning point came after I analyzed which of my automated posts actually ranked versus those that didn’t. The pattern was stark: every post that ranked in the top 10 had been manually reviewed and enhanced with first-hand experience. Every post I’d published on autopilot either stalled on page 3 or never got indexed. That’s when I built the three-layer validation approach I describe later in this guide.

Here are the specific numbers from my workflow optimization:

| Metric | Before Automation | With Basic Automation | With Agentic + Human Review |
|---|---|---|---|
| Time per article (research to publish) | 12-16 hours | 2-3 hours | 4-5 hours |
| Articles ranking top 20 within 60 days | 6 out of 10 | 2 out of 10 | 7 out of 10 |
| Avg. E-E-A-T signals per article | 8+ | 1-2 | 6-8 |
| Reader time on page | 4:30 avg | 1:15 avg | 5:10 avg |

The middle column is the trap most people fall into. Pure automation is faster, but the output quality drops so sharply that you’re producing content Google won’t rank. The agentic approach takes roughly twice as long as basic automation (4-5 hours versus 2-3), but in my sample its ranking success rate slightly exceeded manual work (7 out of 10 versus 6 out of 10), because the AI layers catch structural issues (missing H3s, keyword gaps, readability problems) that I’d miss during manual writing.

The pattern is straightforward. If a task processes structured data, follows consistent rules, and produces objective outputs, it automates well. If it requires business context, brand judgment, or relationship skills — it doesn’t. No tool changes that.

What’s the Difference Between Automation Tools and AI Agents?

There’s a meaningful gap between traditional automation and agentic AI systems, and understanding it changes how you build workflows.

Traditional automation tools execute pre-defined IF-THEN rules. Zapier pulls Google Search Console data into Sheets every Monday. A Python script checks for 404 errors weekly and emails a report. These tools are reliable but rigid — if conditions change, you manually update the rules.

Agentic AI systems make decisions and adapt. According to IBM’s intelligent automation research, these systems integrate AI and machine learning to handle complex decision-making that rule-based tools can’t address (IBM, 2024). An agentic system doesn’t just flag a ranking drop — it analyzes why rankings dropped, cross-references competitor changes, identifies content gaps, and suggests fixes. All before you review it.

I run both at NextGrowth.ai. My n8n workflows handle the rule-based stuff: scheduled crawls, automated schema generation, rank tracking alerts. But for content production, I use a multi-agent pipeline where one AI drafts, another audits for E-E-A-T compliance, and a third checks readability — then I do the final review. That layered approach is what keeps quality high while cutting production time from days to hours.

Where Does SEO Automation Break Down?

Automation fails wherever tasks require empathy, brand-specific judgment, or relationship-building. BCG’s 2024 AI adoption report found that 74% of companies struggle to achieve scalable value from AI initiatives — largely because they automate the wrong things.

Five categories of SEO work resist automation entirely:

Strategy formulation. Deciding which keywords to target isn’t a data problem — it’s a business judgment call. Which market segments are profitable? What’s the competitive intensity? How do keyword targets align with product launches? These questions require understanding business context that lives outside any dataset an AI model can access.

Experience-driven content. Google’s E-E-A-T guidelines specifically reward first-hand experience. Content like “how I scaled n8n to handle 10,000 workflow executions per day” requires having actually done it. AI can research best practices, but it can’t fabricate authentic practitioner insight — and readers (and Google’s algorithms) notice the difference.

I’ve seen this firsthand. My early automated posts about n8n had technically accurate information, but they read like documentation — no war stories, no “here’s what went wrong when I tried this at 3 AM,” no opinions that come from actually running these systems in production. The posts I wrote from experience (like the self-hosting guide with 14,000+ words of real deployment details) consistently outrank the AI-assisted ones. Google’s algorithms can apparently tell the difference, and so can readers — the experience-driven posts get 3-4x more time on page.

Relationship-based digital PR. Personalized journalist outreach, influencer partnerships, guest post negotiations — all depend on trust built over months. Template-based automated outreach fails because recipients instantly recognize it lacks authenticity. Google’s own helpful content guidelines make this explicit: content must demonstrate “first-hand expertise or experience” — something automated outreach inherently lacks.

Crisis management. When negative press hits or a PR crisis develops, automated responses are tone-deaf at best and damaging at worst. Human judgment under pressure — assessing legal risk, coordinating with stakeholders, crafting empathetic messaging — isn’t something you can delegate to a script.

YMYL content. Health, finance, and legal topics demand verifiable credentials. No amount of AI sophistication substitutes for a licensed professional reviewing content where accuracy affects user safety or financial decisions.

The common thread across all five categories? They require contextual judgment that exists outside the training data. AI models process patterns from historical content — they can’t access your business strategy, your customer relationships, or your professional experience. Attempting to automate these tasks produces generic, unconvincing output that damages SEO performance rather than enhancing it.

Here’s a practical test I use before automating anything: “Would a wrong output cause strategic damage or just waste time?” If the answer is strategic damage (wrong keyword targets, tone-deaf outreach, factually incorrect YMYL content), keep it human. If a wrong output just means wasted computation time (a broken link scan that returns false positives, a report that formats incorrectly), automate with confidence.

Why Is “Set-and-Forget” Automation a Myth?

This is the trap I see most often. A tool promises “fully autonomous SEO” and the buyer assumes they can walk away. Here’s what actually happens:

Sites publishing bulk AI content without review get manual actions for thin content. Automated linking scripts creating hundreds of internal links in a single day get flagged for manipulation. AI-generated posts without author attribution lose visibility after core updates. Google’s scaled content abuse policy doesn’t ban AI — it penalizes low-quality content produced at scale without genuine value, regardless of how it was made.

There’s also what I call “automation drift.” I’ve seen it in my own workflows: an n8n automation that produced good schema markup for six months gradually degraded after a Rank Math plugin update changed the meta key format. Without monitoring, I would’ve had broken schemas across 15 posts before noticing. Automation needs calibration, not just deployment.
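A lightweight monitor can catch this kind of drift early. The sketch below checks a JSON-LD snippet for a minimum set of fields; the field list and the function name `find_schema_problems` are assumptions for illustration, not the checks from my actual workflow, so adapt them to your own schema templates.

```python
import json

# Hypothetical minimum field set for an Article schema; adjust to your templates.
REQUIRED_ARTICLE_FIELDS = ("@context", "@type", "headline", "author", "datePublished")


def find_schema_problems(json_ld_text: str) -> list[str]:
    """Return human-readable problems found in one JSON-LD snippet."""
    try:
        data = json.loads(json_ld_text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level JSON-LD value is not an object"]
    # A field counts as a problem if it is absent or empty.
    return [
        f"missing or empty field: {name}"
        for name in REQUIRED_ARTICLE_FIELDS
        if not data.get(name)
    ]


sample = json.dumps({
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Can SEO Be Automated?",
    "datePublished": "2026-01-15",
})
print(find_schema_problems(sample))  # the sample has no author, so one problem is listed
```

Run a check like this on a schedule against every published post and alert only when the returned list is non-empty.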

Another example: I automated meta description generation across 25 posts using Claude API. The initial batch was solid — 150-160 characters, keyword-rich, compelling. Three months later, I noticed click-through rates had dropped on several posts. The culprit? My automation was generating descriptions based on the same prompt template, and over time the outputs converged toward identical sentence patterns. Google may have identified these as programmatically generated and deprioritized them in snippets. I rewrote the affected descriptions manually, adding unique angles for each post, and CTRs recovered within two weeks.
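Convergence like this is easy to detect before it hurts click-through rates. Here is a minimal sketch using Python’s standard `difflib` module; the 0.8 similarity threshold and the function name `audit_meta_descriptions` are assumptions chosen for illustration, while the 150-160 character window comes from the example above.

```python
from difflib import SequenceMatcher
from itertools import combinations


def audit_meta_descriptions(descriptions, min_len=150, max_len=160, max_similarity=0.8):
    """Check a {url: description} mapping for length and near-duplicate issues.

    Returns (length_issues, near_duplicates). The similarity threshold is an
    illustrative assumption; tune it against descriptions you consider too alike.
    """
    length_issues = [
        url for url, text in descriptions.items()
        if not (min_len <= len(text) <= max_len)
    ]
    near_duplicates = []
    for (url_a, text_a), (url_b, text_b) in combinations(descriptions.items(), 2):
        # ratio() is 1.0 for identical strings and 0.0 for nothing in common.
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower(), autojunk=False).ratio()
        if ratio >= max_similarity:
            near_duplicates.append((url_a, url_b, round(ratio, 2)))
    return length_issues, near_duplicates
```

Pairs in `near_duplicates` are the ones worth rewriting by hand with a unique angle.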

The fix? Validation loops. My content pipeline works like this: AI generates → validation agent audits for E-E-A-T, keyword density, and readability → I review flagged issues and approve. This isn’t just my approach: HubSpot’s 2024 AI report found that 86% of marketers using AI for content make edits before publishing. The industry consensus is clear: AI assists, humans approve. My three-layer pipeline catches roughly 70-80% of problems before I see the output, turning my review from error-hunting into strategic approval.
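The shape of that loop can be sketched in a few lines of Python. Everything here is a stand-in: `fake_generate`, the two audit functions, and `reviewer` are hypothetical placeholders for the LLM calls and the manual review step, not the actual n8n nodes.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable


@dataclass
class Draft:
    text: str
    flags: list[str] = field(default_factory=list)
    approved: bool = False


def run_validation_loop(
    topic: str,
    generate: Callable[[str], str],
    auditors: Iterable[Callable[[str], list[str]]],
    human_review: Callable[[Draft], bool],
) -> Draft:
    """Generate a draft, collect audit flags, then require an explicit human decision."""
    draft = Draft(text=generate(topic))
    for audit in auditors:
        draft.flags.extend(audit(draft.text))
    # Nothing is marked approved unless the human reviewer says so.
    draft.approved = bool(human_review(draft))
    return draft


# Hypothetical stand-ins for the real generation and audit steps.
def fake_generate(topic: str) -> str:
    return f"Draft about {topic}."


def audit_min_length(text: str) -> list[str]:
    return [] if len(text.split()) >= 300 else ["draft is under 300 words"]


def audit_first_hand(text: str) -> list[str]:
    return [] if " I " in f" {text} " else ["no first-person experience found"]


def reviewer(draft: Draft) -> bool:
    # A real reviewer reads the draft; this stand-in only approves flag-free drafts.
    return not draft.flags


result = run_validation_loop(
    "SEO automation", fake_generate, [audit_min_length, audit_first_hand], reviewer
)
print(result.approved, result.flags)
```

The design point is that `approved` is only ever set by the human step, so a publishing node can refuse anything where it is false.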

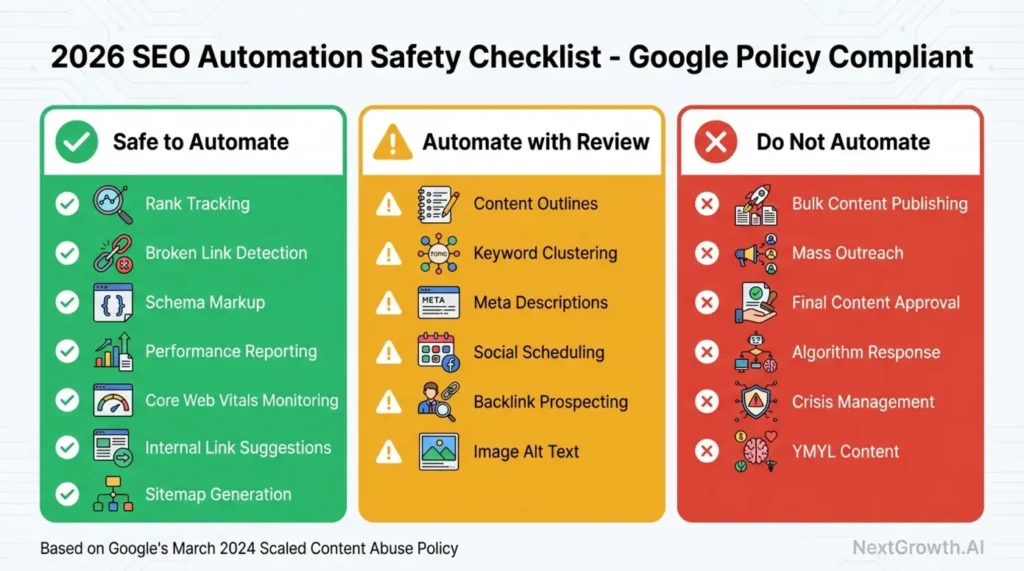

What Should Your 2026 Automation Safety Checklist Include?

I use this checklist before automating any SEO task. It’s based on Google’s current spam policies and my own experience with what triggers problems:

🟢 Green — Safe to automate fully:

- Rank tracking and position monitoring

- Broken link detection and 404 alerts

- Schema markup generation

- Performance reporting and dashboards

- Core Web Vitals monitoring

- XML sitemap generation and validation

- Robots.txt monitoring

🟡 Yellow — Automate with mandatory human review:

- Content outline generation (review for brand voice)

- Keyword clustering (validate strategic priorities)

- Meta description drafts (check uniqueness and tone)

- Internal linking suggestions (verify user value)

- Image alt text suggestions (confirm accuracy)

- Content gap analysis (interpret strategically before acting)

🔴 Red — Do not automate:

- Bulk content publishing without individual review

- Mass outreach without personalization

- Final content approval and publication

- Strategic pivots during algorithm updates

- Crisis communication

- YMYL content without expert review

Since Google’s March 2024 core update, enforcement against automated content that lacks original value has intensified: Google said the update aimed to reduce low-quality, unoriginal content in search results by 40% (Google Search Central, 2024). When in doubt, err toward Yellow (human review) rather than Green (full automation).
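If your workflows need to consult the checklist programmatically, it can be encoded as a simple lookup. This is a sketch: the task names are shortened from the lists above, and `zone_for` is a hypothetical helper. Unknown tasks default to Yellow, following the "when in doubt" rule.

```python
from enum import Enum


class Zone(Enum):
    GREEN = "automate fully"
    YELLOW = "automate with mandatory human review"
    RED = "do not automate"


CHECKLIST = {
    "rank tracking": Zone.GREEN,
    "broken link detection": Zone.GREEN,
    "schema markup generation": Zone.GREEN,
    "performance reporting": Zone.GREEN,
    "core web vitals monitoring": Zone.GREEN,
    "xml sitemap generation": Zone.GREEN,
    "robots.txt monitoring": Zone.GREEN,
    "content outline generation": Zone.YELLOW,
    "keyword clustering": Zone.YELLOW,
    "meta description drafts": Zone.YELLOW,
    "internal linking suggestions": Zone.YELLOW,
    "image alt text suggestions": Zone.YELLOW,
    "content gap analysis": Zone.YELLOW,
    "bulk content publishing": Zone.RED,
    "mass outreach": Zone.RED,
    "final content approval": Zone.RED,
    "strategic pivots": Zone.RED,
    "crisis communication": Zone.RED,
    "ymyl content without expert review": Zone.RED,
}


def zone_for(task: str) -> Zone:
    """Look up a task's zone; anything not on the checklist gets human review."""
    return CHECKLIST.get(task.strip().lower(), Zone.YELLOW)


print(zone_for("Rank tracking").value)
print(zone_for("podcast transcription").value)  # not on the list, so Yellow
```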

How Do You Actually Implement SEO Automation?

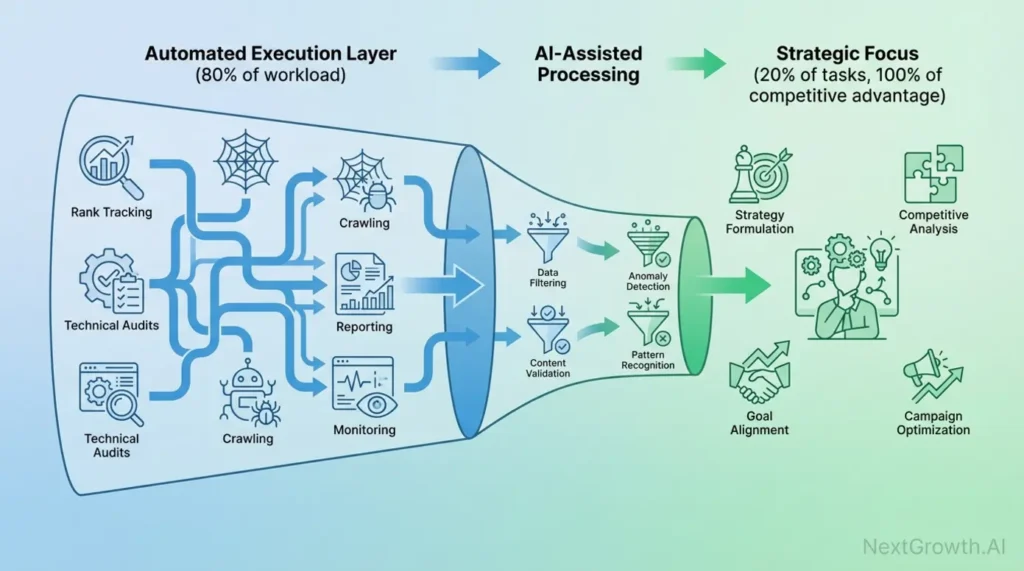

Start with the 80/20 rule: automate the 80% of execution work (data collection, monitoring, reporting) so you can redirect that time to the 20% of strategic work that drives competitive advantage. The Marketing AI Institute’s 2024 report found that 67% of marketers cite lack of training as the primary barrier to AI adoption — meaning there’s a real advantage for teams who invest in learning this.

Here’s a realistic breakdown of a 40-hour SEO workweek before and after automation:

| Task Type | Before Automation | After Automation | Time Saved |
|---|---|---|---|
| Technical audits & monitoring | 8 hours | 1 hour (review alerts) | 7 hours |
| Rank tracking & reporting | 6 hours | 30 min (review dashboards) | 5.5 hours |
| Content research & outlining | 10 hours | 3 hours (review AI drafts) | 7 hours |
| Internal linking & on-page | 5 hours | 1.5 hours (review suggestions) | 3.5 hours |
| Strategy & planning | 6 hours | 6 hours (no automation) | 0 hours |
| Content review & publishing | 5 hours | 5 hours (no automation) | 0 hours |
| Total | 40 hours | 17 hours | 23 hours |

Those 23 reclaimed hours aren’t free time — they’re strategic capacity. I reinvest mine into content differentiation, competitive analysis, and building the kind of first-hand experience signals that Google’s algorithms reward and AI tools can’t fake.
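To run the same calculation on your own week, the table’s arithmetic is easy to reproduce. The figures below are copied from the table; swap in your own hours.

```python
# (task, hours before automation, hours after automation), from the table above
WORKWEEK = [
    ("Technical audits & monitoring", 8, 1),
    ("Rank tracking & reporting", 6, 0.5),
    ("Content research & outlining", 10, 3),
    ("Internal linking & on-page", 5, 1.5),
    ("Strategy & planning", 6, 6),
    ("Content review & publishing", 5, 5),
]

hours_before = sum(before for _, before, _ in WORKWEEK)
hours_after = sum(after for _, _, after in WORKWEEK)
hours_saved = hours_before - hours_after

for task, before, after in WORKWEEK:
    print(f"{task}: {before - after:g} hours saved")
print(f"Total: {hours_before:g} -> {hours_after:g} hours ({hours_saved:g} saved)")
```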

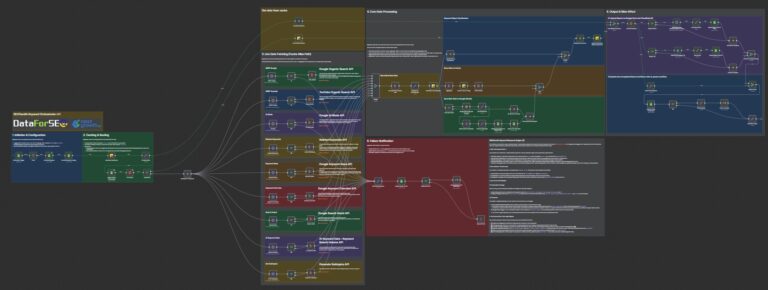

How Do Agentic SEO Workflows Work?

Single-step automation (AI writes → human reviews) still puts the full quality burden on you. Agentic workflows add validation layers: AI generates → AI audits → AI revises → human approves. The difference is substantial — it turns your review from catching every error into strategic sign-off.

Here’s how I built my content brief workflow in n8n:

Agent 1 (Brief Generator): Analyzes top 10 SERP results for the target keyword. Extracts heading patterns, identifies content gaps, generates a structured outline with keyword placement suggestions, and pulls People Also Ask questions.

Agent 2 (Quality Auditor): Reviews the brief against my quality checklist. Checks keyword density (flags anything over 2%), verifies E-E-A-T elements are included, scores readability, and identifies missing FAQ content.

Agent 3 (Synthesizer): Merges the brief with audit feedback. Auto-fixes flagged issues — reduces keyword density if excessive, adds missing E-E-A-T directives, simplifies complex sections.

My review (5 min): I check strategic alignment, add personal experience angles, and approve or adjust positioning. Total human time: 5 minutes instead of the 30+ it takes to create a brief from scratch.

This multi-agent approach catches 70-80% of quality issues automatically before I see the output. As Search Engine Journal noted, bulk AI content without validation loops carries significant penalty risk. The agentic model addresses this by building quality control into the automation itself.
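Agent 2’s keyword density rule is the easiest part to make deterministic. The sketch below is one way to compute it, not the exact check in my workflow. It assumes density means the share of words that belong to an occurrence of the keyword phrase; some tools divide occurrences by total words instead, which gives lower numbers for multi-word keywords.

```python
import re

WORD_PATTERN = re.compile(r"[a-z0-9']+")


def keyword_density(text: str, keyword: str) -> float:
    """Share of words in `text` that are part of an occurrence of `keyword` (0.0 to 1.0)."""
    words = WORD_PATTERN.findall(text.lower())
    kw_words = WORD_PATTERN.findall(keyword.lower())
    if not words or not kw_words:
        return 0.0
    n = len(kw_words)
    # Slide a window of the keyword's length over the text and count exact matches.
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw_words)
    return hits * n / len(words)


def exceeds_density_limit(text: str, keyword: str, limit: float = 0.02) -> bool:
    """Flag text whose keyword density is over the limit (2% by default)."""
    return keyword_density(text, keyword) > limit
```

A rule like this runs before any LLM call, so the auditing agent only spends tokens on judgments that actually need a model.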

Here’s a real example of this pipeline in action. When I created the DataForSEO review, Agent 1 analyzed the top 10 SERP results and identified that competitors focused heavily on pricing but neglected API implementation details — a clear content gap. Agent 2 flagged that my brief lacked E-E-A-T signals (no mention of personal testing, no specific data points). Agent 3 revised the brief to include “test DataForSEO API with real queries and report actual response times and data quality.” When I executed the brief, those additions became the sections that readers and AI citation systems found most valuable — because they contained information no competitor had.

One important caveat: agentic workflows aren’t magic. They’re only as good as your quality criteria definitions. I spent two weeks refining my Agent 2’s audit checklist before it reliably caught the issues I cared about. The upfront investment pays off across every subsequent piece of content, but don’t expect plug-and-play results on day one.

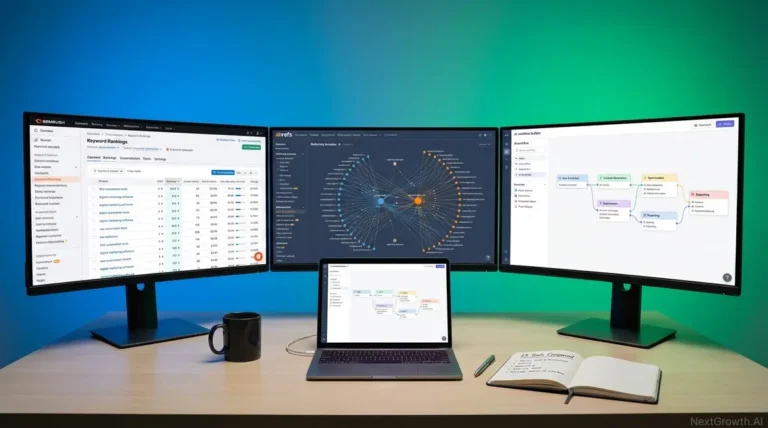

Tools I use: n8n (self-hosted) for workflow orchestration, Claude API for content generation and auditing, DataForSEO API for SERP data, and Slack for approval routing.

How to Automate Internal Linking with Vector Embeddings

Manual internal linking breaks down at scale. With 30+ articles, you can’t hold every topical relationship in your head. Here’s a better approach using semantic similarity.

The process is five steps:

- Convert articles to vectors using OpenAI’s Embeddings API (or an alternative such as Cohere or Voyage AI). Each article becomes a mathematical representation of its meaning, so topically similar content produces similar vectors regardless of keyword overlap.

- Calculate cosine similarity between all article pairs. This surfaces topical connections that keyword matching misses. An article about “email marketing ROI” might be semantically related to “customer lifetime value calculation” — both discuss measurement frameworks.

- Rank suggestions by similarity score. For each article, extract the 5-10 most related pieces. High similarity = strong topical connection worth linking.

- Generate anchor text suggestions using AI. The model reads both articles and proposes specific text and placement: “In paragraph 3, where you discuss [topic], link to [article] with anchor text ‘[phrase].’”

- Human review and approval. I review each suggestion to ensure it adds genuine user value and doesn’t create over-optimization patterns. This step takes 10-15 minutes for 50 articles.
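Steps 2 and 3 above come down to a small amount of math. This sketch uses made-up three-dimensional vectors so it runs without an API key; real embeddings have hundreds or thousands of dimensions, and the slugs and the `top_related` helper are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_related(embeddings, slug, k=5):
    """Return the k articles most similar to `slug` as (slug, score) pairs."""
    target = embeddings[slug]
    scored = [
        (other, cosine_similarity(target, vector))
        for other, vector in embeddings.items()
        if other != slug
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Toy vectors for illustration only; in practice these come from the embeddings API.
toy_embeddings = {
    "self-host-n8n": [0.9, 0.1, 0.0],
    "n8n-queue-mode": [0.8, 0.2, 0.1],
    "email-marketing-roi": [0.0, 0.1, 0.9],
}

for other, score in top_related(toy_embeddings, "self-host-n8n", k=2):
    print(f"{other}: {score:.3f}")
```

For a few dozen articles this brute-force comparison is fast enough; a vector database mainly helps once the library grows or you want to query without reloading every vector.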

Why does this matter for SEO specifically? Internal links help search engines discover pages and understand how they relate, and strong semantic connections between related articles are widely treated as a signal of topical authority and comprehensive topic coverage. Manual linking typically captures the obvious connections (linking “self-host n8n” to “scaling n8n queue mode” because both mention n8n). Vector embeddings also surface non-obvious connections, like linking an article about Cloudflare R2 integration to one about self-hosting, because both address infrastructure cost optimization for the same audience.

I built this as an n8n workflow connected to a Pinecone vector database. It processes my full content library in about 3 minutes and generates link suggestions that I’d never find manually. The best SEO automation tools can handle parts of this, but the vector embedding approach finds deeper connections.

Example: Automating 404 Monitoring in n8n

This is the first workflow I’d recommend automating — it’s simple, low-risk, and saves about 90 minutes per week. Here’s my exact setup:

What you need: n8n (the free self-hosted Community Edition works), Google Search Console API access, Screaming Frog or any crawler, Slack (or email for alerts).

Step 1: Schedule a weekly site crawl (Monday 2 AM). Export 404 URLs to CSV. I use Screaming Frog’s CLI triggered by n8n’s Schedule Trigger node (called the Cron node in older n8n versions).

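Step 2: Pull Google Search Console data via API. Note that the current Search Console API exposes performance data (clicks, impressions) and URL inspection rather than a crawl-errors report, so backlink counts have to come from a separate source such as the Ahrefs API listed earlier. Cross-reference both with the crawl export to identify 404s that have backlinks (high priority) or historical traffic (medium priority).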

Step 3: Apply priority scoring. High = 5+ backlinks or 100+ monthly sessions. Medium = 1-4 backlinks or 10-99 sessions. Low = no links, no traffic.

Step 4: Auto-send a Slack alert every Monday at 8 AM with high-priority 404s, including URL, backlink count, traffic data, and suggested action (redirect vs. restore vs. leave).

Step 5: I review the alert in 15 minutes, decide on fix strategy for each URL, and implement. Total weekly time: 15 minutes instead of 2+ hours of manual GSC review.

Annual savings: ~78 hours. That’s nearly two full work weeks reclaimed from a single automation.
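The priority scoring in Step 3 translates directly into code. This sketch uses the thresholds stated above; the one assumption is that a URL with no backlinks and fewer than 10 sessions counts as low priority, since the rules leave that case open. In n8n, the same logic would sit in a Code node.

```python
def priority_404(backlinks: int, monthly_sessions: int) -> str:
    """Score a 404 URL using the Step 3 thresholds."""
    if backlinks >= 5 or monthly_sessions >= 100:
        return "high"
    if backlinks >= 1 or monthly_sessions >= 10:
        return "medium"
    # Assumption: 0 backlinks with 1-9 sessions is treated as low priority.
    return "low"


# Hypothetical crawl results merged with backlink and traffic data.
broken_urls = [
    {"url": "/old-pricing", "backlinks": 7, "sessions": 40},
    {"url": "/2023-roundup", "backlinks": 0, "sessions": 25},
    {"url": "/tmp-test", "backlinks": 0, "sessions": 0},
]

high_priority = [
    row["url"]
    for row in broken_urls
    if priority_404(row["backlinks"], row["sessions"]) == "high"
]
print(high_priority)  # only these go into the Monday alert
```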

What Does SEO Automation Actually Cost?

One question nobody talks about honestly: what does this infrastructure actually cost? Here’s my real monthly spend for the full automation stack at NextGrowth.ai:

| Tool/Service | Monthly Cost | What It Handles |
|---|---|---|
| n8n (self-hosted on Coolify) | $0 (runs on existing VPS) | All workflow orchestration |
| Claude API (Anthropic) | ~$15-25 | Content generation, auditing, synthesis |
| DataForSEO API | ~$10-20 | SERP data, keyword research |
| OpenAI Embeddings | ~$2-5 | Vector generation for internal linking |
| Pinecone (free tier) | $0 | Vector storage and similarity queries |
| Screaming Frog | $259/year (~$22/mo) | Site crawling and technical audits |
| Total | $49-72/month | |

Compare that to hiring an SEO specialist ($3,000-8,000/month) or outsourcing to an agency ($2,000-10,000/month). The automation approach isn’t just more efficient — it’s dramatically cheaper for solo operators and small teams. The tradeoff is that you need technical ability to set it up and maintain it. If you’re comfortable with n8n or similar tools, the ROI is overwhelming. If you’re not technical, the setup cost in learning time may not justify itself compared to hiring help.
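If you want to budget your own stack the same way, the total is a sum of per-tool low and high estimates. The numbers below are the ones from the table, with Screaming Frog’s annual license rounded to a monthly figure.

```python
# (low, high) monthly cost in USD per tool, taken from the table above
MONTHLY_COSTS = {
    "n8n (self-hosted)": (0, 0),
    "Claude API": (15, 25),
    "DataForSEO API": (10, 20),
    "OpenAI Embeddings": (2, 5),
    "Pinecone (free tier)": (0, 0),
    "Screaming Frog": (22, 22),  # $259/year is about $22/month
}

total_low = sum(low for low, _ in MONTHLY_COSTS.values())
total_high = sum(high for _, high in MONTHLY_COSTS.values())

print(f"Monthly: ${total_low}-{total_high}")
print(f"Annual:  ${total_low * 12}-{total_high * 12}")
```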

One hidden cost to budget for: debugging time. My n8n workflows break roughly once per month — an API changes its response format, a rate limit gets hit, a node version update introduces incompatibilities. Budget 2-3 hours monthly for workflow maintenance. It’s the “set-and-forget” myth in miniature: even well-built automation needs regular attention.

Automation Maturity Levels: Where Are You?

Not everyone should jump straight to agentic workflows. I think of SEO automation as four maturity levels, and you should progress through them in order:

Level 1: Monitoring automation. Set up rank tracking, broken link alerts, and Core Web Vitals monitoring. This is pure Green-zone automation with zero quality risk, and it takes 2-4 hours to configure. If you’re new to n8n as an automation platform, start here.

Level 2: Reporting automation. Automate your weekly/monthly SEO reports using analytics tools — pull data from GSC, aggregate metrics, send formatted summaries to Slack or email. Saves 3-5 hours per week. I built mine in n8n with a Google Sheets integration that auto-generates executive summaries every Monday.

Level 3: Research automation. Use APIs to automate keyword research, content gap analysis, and competitor monitoring. This is Yellow-zone — the AI does the heavy data processing, but you review and make strategic decisions about what to act on. Saves 5-8 hours per week.

Level 4: Agentic content workflows. Multi-agent pipelines for content briefs, drafts, and quality auditing with human-in-the-loop approval. This is where the real productivity gains live, but also where the quality risks are highest. Don’t attempt Level 4 until you’ve mastered Levels 1-3 and have solid quality criteria defined.

Most teams I’ve talked to in SEO communities are stuck between Level 1 and Level 2. The jump to Level 3 requires API access and some coding ability (or a no-code tool like n8n). Level 4 requires understanding how to define and measure content quality programmatically — which is a non-trivial skill that takes months to develop through iteration.

Will SEO Still Exist in 5 Years?

SEO isn’t dying — it’s shifting from manual execution to strategic oversight. The profession is evolving the same way database administration did: DBAs used to write SQL queries by hand; now they design schemas and optimize performance while automation handles routine operations. SEO professionals are making the same transition.

Google’s spam policies don’t ban AI-assisted content. They penalize low-quality scaled content produced primarily to manipulate rankings. That distinction matters enormously: automation combined with human quality control passes scrutiny; automated bulk publishing without review triggers penalties.

The job market data backs this up. According to Previsible’s 2025 State of SEO Jobs report (10,000+ job listings analyzed), overall SEO role listings declined 34% — but VP-level SEO positions increased 50% and manager-level roles grew 58%. Automation is eliminating repetitive execution tasks while creating demand for strategic leadership.

My prediction for 2027: top-performing SEO teams will operate as lean hybrid units — one strategist setting direction, one automation engineer maintaining AI workflows, and a suite of agents handling execution (monitoring, reporting, initial drafts). This model already exists. I’m essentially running it solo at NextGrowth.ai with n8n as my automation layer.

The critical skill isn’t avoiding automation. It’s knowing which tasks to automate, which to augment, and which to keep fully human. That judgment — the strategic layer — is where the real competitive moat lives. Tools are commodities. Strategy is not.

Frequently Asked Questions

Can I automate SEO?

Yes — about 30% of SEO tasks (rank tracking, technical audits, schema markup, reporting) are fully automatable with tools like n8n, Screaming Frog, and DataForSEO. The remaining 70% — strategy, content quality, digital PR — requires human expertise. The Semrush automation guide confirms that automation handles data-heavy tasks best, freeing strategists for higher-value work.

Which SEO jobs cannot be automated?

Strategy formulation, experience-driven content writing, relationship-based digital PR, crisis management, and YMYL content review all require human judgment. These tasks demand empathy, business context, and authentic communication that AI systems can’t replicate — and Google’s E-E-A-T guidelines explicitly reward human expertise in these areas.

What is the 80/20 rule for SEO?

Automate 80% of your execution workload (data collection, monitoring, reporting) so you can focus your full strategic energy on the 20% that drives competitive advantage — keyword strategy, content differentiation, competitive positioning. In practice, this can free up 20+ hours per week for work that actually moves rankings.

Can SEO be done entirely by AI?

No. AI handles research, data analysis, and initial drafts well, but relying solely on AI produces generic content that lacks brand voice, first-hand experience, and strategic positioning. The Marketing AI Institute’s 2024 report shows 67% of marketers cite training as the top barrier — suggesting the real challenge isn’t the tools, it’s knowing how to use them alongside human judgment.

Is SEO dying because of AI?

No. SEO is evolving from manual task execution to strategic oversight. AI changes how content is produced and how users search, but the need for optimization remains. Google’s 2024-2026 policies don’t ban AI — they penalize low-quality scaled content, making human quality control more important than ever.

Start Automating: Your Next Step

The 30/70 framework isn’t theoretical — I use it daily at NextGrowth.ai, and it’s the reason I can publish thoroughly researched, SEO-optimized content while running every other aspect of the site solo.

Here’s what I’d do this week: Pick one Green-zone task from the safety checklist above — rank tracking or broken link monitoring are the easiest wins. Set it up in n8n or whatever automation platform you prefer. Measure the time you save. Then reinvest that time in the strategic work that actually differentiates your site.

The tools are commodities — anyone can access n8n, Claude, DataForSEO, or Screaming Frog. What you can’t automate is the strategic judgment to use them well: knowing which keywords align with your business model, which content angles differentiate you from competitors, and which quality standards to enforce. That’s the 70% that remains uniquely human — and it’s where the competitive advantage lives.

Automation is the execution layer. Your strategy, experience, and judgment are the competitive moat. Build both.